Smile: Your computer is watching.

Affectiva, a three-year-old startup that grew out of MIT’s Media Lab, is teaching machines to understand what you feel.

Affectiva has developed a way for computers to recognize human emotions based on facial cues or physiological responses, and then use that emotion-recognition technology to help brands improve their advertising and marketing messages.

For example, Affectiva might train a webcam on users while they watch ads, tracking their smirks, smiles, frowns and furrows to measure their levels of surprise, amusement or confusion throughout a commercial and compare them to other viewers across different demographics. Affectiva also makes a wearable biosensor that can monitor the user's emotional state via her skin.

For HuffPost Tech's "Life as..." series, Affectiva’s co-founder and chief technology officer Rana El Kaliouby offered a glimpse at what happens when machines know we're amused and the future of computers with a sense for feelings.

Affectiva’s technology allows brands to not only listen in on what we say about what we’re feeling, but to actually see for themselves what we're feeling. Which industries are interested in applying Affectiva’s emotion-recognition technology to their work?

The closest use case is measuring responses to media -- whether you’re watching an advertisement, movie trailer, movie, TV show or online video. If an ad is supposed to be funny, but we look at 100 participants and none laugh, then we know it’s not really effective. The idea is to enable media creators to optimize their content.

Political polling is another big area for us -- measuring people’s responses to a political debate. There are applications in games, in all things social, and in health, too. We can read your heart rate from a webcam without you wearing anything -- we can just use the reflection of your face, which shows blood flow. Imagine having a camera on all the time monitoring your heart rate so that it can tell you if something’s wrong, if you need to get more fit, or if you’re furrowing your brow all the time and you need to relax.

What have you learned about people that you wouldn’t have known without Affectiva’s emotion-recognition technology?

In some cultures, like Middle Eastern, Egyptian or Asian cultures, people are often hesitant to give any negative feedback. There was an ad in India for body lotion, and there was one particular scene where a husband is being playful with his wife, whose tummy is showing, and he touches her tummy. We recorded women watching the video, then asked them whether they liked the ad.

Some didn’t bring up the scene at all, and others said the ad was really offensive -- “How could you do that?” and so forth. But when we looked at the data, for 100 percent of the women, there was always an “enjoyment” smile when they watched that scene. They clearly enjoyed it.

I can imagine politicians using Affectiva to tailor their ads to ensure potential voters feel a certain way when they see them -- overjoyed, for example -- and another way when they see their opponent -- say, terrified. How do you envision Affectiva being used in campaigns?

We measured about 100 people’s responses to the third Obama/Romney debate. In the third debate, Obama did especially well, and we found that both Democrats and Republicans responded very positively to Obama. He cracked a number of jokes and people universally found those funny. Imagine if this feedback had been given in real time to the politicians and they’d used it to optimize their messaging. I do see that happening.

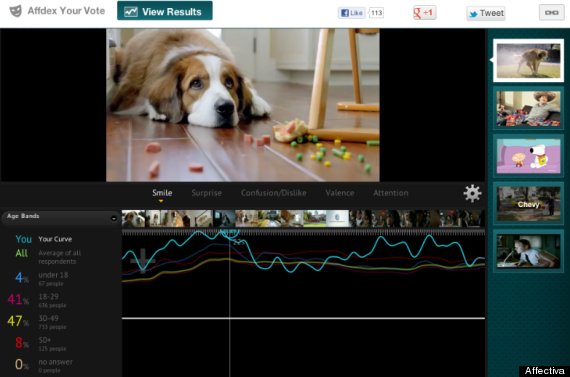

Affectiva's "Emote Your Vote" feature uses the company's Affdex technology to gauge individuals' emotional reactions to Super Bowl ads. In the picture here, the points at which the author smiled during the ad (shown bright turquoise) are contrasted with other viewers' smile activity (graphed below in green, yellow, red and magenta).

You mentioned there might be social applications of the technology. Hypothetically, could Facebook figure out what it should show me to make me feel a certain way -- i.e. that certain people’s profiles make me happy, so I should see their profiles when I’m feeling sad?

It could show you not just happy profiles, but also offer affect-based recommendations for things to do or people to talk to or things to watch. Or games can even adapt to your emotional experience, or your emotional state. There’s definitely a lot of stuff that can be done once you figure out that person’s moods.

If we’re all watching a YouTube video, it would be really cool if you could get a sense for how that YouTube video affected people’s emotional states. Say it makes you happy and you laugh your head off. We’d also know a million other people who saw that video and at that same scene also laughed. It’s very intriguing to be able to take something very human, like an expression of emotion, and share that globally.

Could companies that use Affectiva technology also manipulate our emotions to make us feel a certain way, so we’re more inclined to consume their product? For example, if Facebook knows I spend more time on the site when I’m anxious, might it try to make me anxious by showing me more content that unsettles me?

With every technology there will be misuses of it. At Affectiva, I believe we have a role to educate people about what can and can’t be done with the technology. I don’t think it’s super easy to manipulate people’s emotions in that way.

Although places like casinos already do try to manipulate our emotions, right? It seems like Affectiva might allow people more granular control, in real-time, to put people into the right frame of mind to shop or spend.

People do that anyhow. If you walk into Best Buy or Target, they spend a lot of effort trying to make this a place where you’d want to spend more time. All this stuff hasn’t yet been optimized for a digital experience. And I think there is an opportunity to do that and do it in a way that respects people’s emotional states.

You noted Affectiva is “big on opt-in” and believes people should “always be in control of what they share or do not share.” Still, reading our emotions marks a new level of intimacy with brands online. How does this change our expectation of privacy?

I think at some point somebody is going to do something that’s not opt-in, but so far everyone we’ve worked with, we’ve insisted they do it so that it’s opt-in. And sometimes we’ve pushed back. When we first started Affectiva, some of our early customers said, “We don’t want to tell people they’re being recorded because either they’ll opt out or it’ll affect their experiences.” We’re always adamant that they tell people up front and people have to sign a consent form.

Does using Affectiva change how people express their emotions?

Early on in our research, we thought having the camera on would make people more aware of it and so they’d be less emotive or artificially more emotive. But we found that very quickly people forgot they had the cameras on. We’ve had people in their bedrooms -- they clearly forget the camera’s on.

Where might we encounter Affectiva’s technology in the future?

Anywhere from laptops to mobile phones. There’s a large percentage of mobile phones that now have a camera that’s with you a lot of the time, and there’s a lot of interest around those cameras as a data collection mechanism. And cars: we’ve done a number of projects with various car manufacturers looking at drowsy driving, distracted driving, and how to measure when people are getting angry, frustrated or bothered. There’s a lot of interest from the car people.

You can also tie emotional response with location. Think of Foursquare, but you don’t just know where a person is, but how she’s feeling.

This interview has been edited and condensed for clarity.

CORRECTION: A previous version of this story incorrectly stated that Affectiva was a two-year-old startup. The company, founded in December 12, is three years old.