Kaur Films is all about IMAGINATION.

Imagine a gender-equitable society. Where women are not the stereotypical nurturers and men are not the conventional providers. Where the roles that people play in society are aligned with their acumen and interests rather than with a gender-driven portrayal rooted in a deeply entrenched, parochial viewpoint.

Such a society does not exist yet. But imagining it is a worthwhile exercise. It was only a few years ago that Malala Yousafzai was almost killed for wanting an education for herself. Her story is not unique. It has taken hundreds of years of recent history for women to establish a mainstream voice. For perspective, women are half the world’s population.

Admittedly, some countries and societies are far more advanced than others. Yet the underlying deep bias continues even in the most progressive of environments. This makes me wonder if a solution lies in reimagining social norms so that they are driven by outcomes rather than inherent human biases.

As a thought experiment, consider using Artificial Intelligence in our quest to break out of thousands of years of deep-rooted societal bias. Can today’s technology create a mechanism for correcting stereotypical habit patterns using deep learning and data-driven techniques?

Deep-learning software attempts to mimic human brain activity in the neocortex, where thinking occurs. The software learns to recognize patterns in digital representations of sounds, images, and other data. Ray Kurzweil wrote a definitive book, “How to Create a Mind,” on doing this through software techniques. The goal of deep learning is to recreate human intelligence at a machine level, hence Artificial Intelligence. However, the outcome of such learning is predicated on how well the software is trained. Biased training will result in biased outcomes, as Microsoft found out with its AI chatbot Tay.

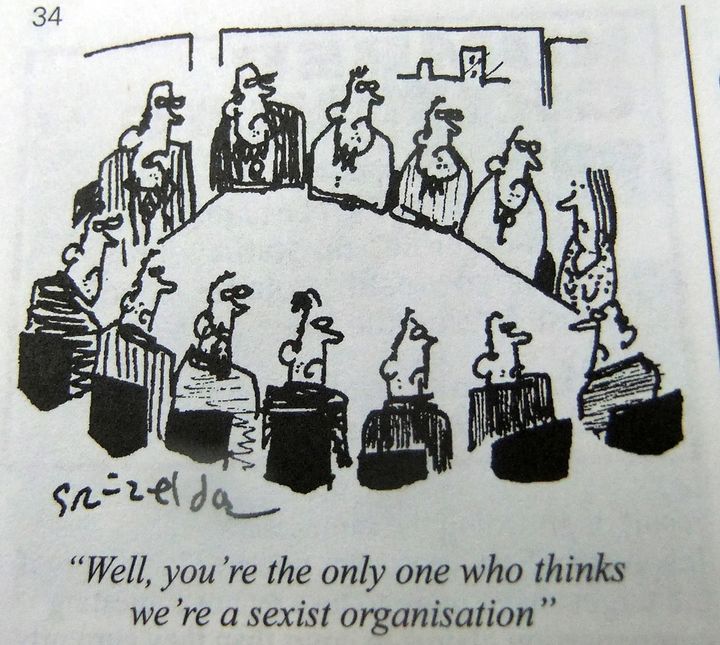

Continuing our thought experiment, let’s look at a simple workplace situation where, more often than not, regular human intelligence fails us.

Situation: A sexist comment in the boardroom.

Desired Outcome: Raise a hand and nip the comment in the bud.

In this simple example, the desired outcome is heavily dependent on two major assumptions: (1) having a woman in the boardroom and (2) a culture where she can freely express her point of view.

If this situation were handled by a robot using AI techniques, it would be imperative for the robot to be trained on the underlying assumptions. Poor training results in poor outcomes. When the robot receives a sexist input, it needs to understand the context, apply a perspective, and then act accordingly. Robots programmed without a woman’s perspective will be inherently biased and fail to deliver the desired outcome. Indeed, poor training is what undid Tay.

Tech company environments, where AI algorithms are trained and tested, suffer from an underrepresentation of women and thus lack an awareness of such biases. Only 16% of computer graduates are women. Add to that deeply ingrained cultural and workplace attitudes, which often undermine women, and one can see how quickly gender and cultural stereotypes can be perpetuated.

Fortunately, unlike human biases, AI biases are easier to correct, simply by reprogramming the robot’s software. In order to receive an output devoid of bias, the actions in creating that output ought to be categorized with parity and equity. It is just a matter of how we train and test our data and algorithms, redoubling our commitment to such parity. It is not hard to imagine a gender- and culture-neutralizing framework that processes data and corrects a robot’s behavior, mimicking ideal human language and perceptions.
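
To make “parity” a little more concrete, here is a minimal sketch in Python of what one such test might look like. It is an illustration only, not a description of any real framework: the function names, group labels, and sample data are all hypothetical, and the measure used (the gap in favorable-outcome rates between groups, sometimes called demographic parity) is just one of several ways parity could be defined.

```python
from collections import defaultdict


def positive_rate_by_group(records):
    """Return the share of favorable outcomes for each group.

    `records` is an iterable of (group, outcome) pairs, where `outcome`
    is True when the system produced the favorable result.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records):
    """Difference between the highest and lowest favorable-outcome rates."""
    rates = positive_rate_by_group(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical outputs from a system under test (made-up data).
sample = [
    ("women", True), ("women", False), ("women", False), ("women", False),
    ("men", True), ("men", True), ("men", True), ("men", False),
]

print(positive_rate_by_group(sample))
print(parity_gap(sample))
```

A gap near zero would suggest the system treats the groups alike on this one measure; a large gap is a signal to revisit the training data before the system is put to use.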

It is exciting to imagine the possibilities of such equality. In the not-so-distant future, AI will be an inescapable part of our lives, pervading fields as diverse as healthcare, behavioral economics, and politics. To make it a real success, we will need people from all walks of life: not only programmers, but also professionals engaged in healthcare, anthropology, economics, finance, and more.

I feel encouraged that men and women are recognizing the potential of technology in creating a world that is free from biases. Next week, I look forward to discussing this and more at the Women in STEM Conference and Awards, which brings together over 100 accomplished women and men to discuss the future of science and tech in San Francisco. Just as Malala championed the cause of equal education opportunities for girls, there will be many advocates for creating a new paradigm for a gender-neutral society. See you there.