Is the complexity of Google’s search ranking algorithms increasing or decreasing over time? originally appeared on Quora - the place to gain and share knowledge, empowering people to learn from others and better understand the world.

Answer by Nikhil Dandekar, former engineer/manager on Bing Search (2007-2013), on Quora:

I have worked on Bing search ranking and at other search engines, and have friends working on Google search. Given what I know, here is how I expect the complexity of Google’s search ranking algorithms to have changed over time.

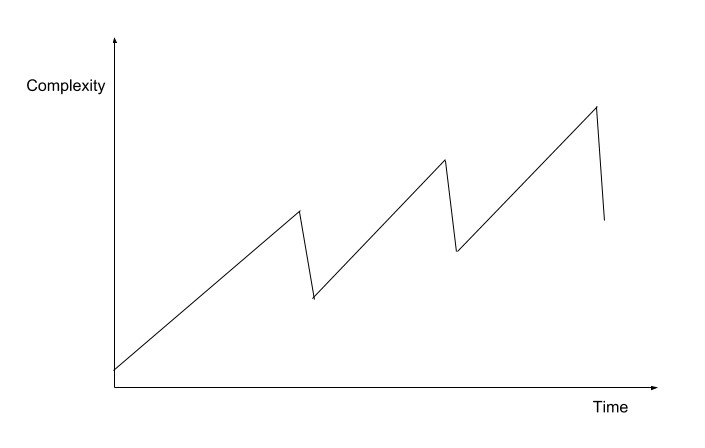

The diagram shows long periods of time where the complexity keeps increasing as you make small incremental improvements to the ranking algorithms. These long periods of increasing complexity are interspersed with sudden reductions in complexity, brought about by replacing complex heuristics with machine learning and by other simplifications.

This diagram is obviously an oversimplification, but I hope it gets the main point across. Let’s dive into the details of why this happens.

Let’s start with what we know about Google search ranking publicly:

- Google search ranking is a fairly complex beast. There are thousands of features that influence ranking, and quite a few of them are complex enough that they are best computed by their own machine learning models (e.g. search query embeddings).

- Traditionally, Google has resisted using machine learning for their core search ranking algorithm.[1]

- But more recently, there has been a shift towards using more machine learning in search, especially some of the stuff that the Google Brain team has built.[2] [3]

Given this, I expect the current Google search to be a combination of heuristics in some places and machine learning in others. It’s still unclear whether the top-level ranking function is machine learned or heuristic. My personal guess is that it’s still a heuristic function that combines a few machine-learned scores and a few heuristic scores.
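To make that guess concrete, here is a minimal sketch of what a heuristic top-level function over mixed scores could look like. Everything in it is hypothetical and chosen only for illustration: the `Doc` fields, the `freshness_score` rule, and the hand-picked weights. It is not a description of how Google actually ranks results.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    url: str
    ml_relevance: float  # hypothetical: output of a learned relevance model, in [0, 1]
    ml_quality: float    # hypothetical: output of a learned page-quality model, in [0, 1]
    age_days: int        # age of the page in days


def freshness_score(age_days: int) -> float:
    """Hand-written heuristic: newer pages score higher, decaying with age."""
    return 1.0 / (1.0 + age_days / 30.0)


def top_level_score(doc: Doc) -> float:
    """Heuristic top-level function: hand-tuned (not learned) weights
    combining two machine-learned scores and one heuristic score."""
    return (
        0.6 * doc.ml_relevance
        + 0.3 * doc.ml_quality
        + 0.1 * freshness_score(doc.age_days)
    )


def rank(docs: list[Doc]) -> list[Doc]:
    """Order documents from highest to lowest top-level score."""
    return sorted(docs, key=top_level_score, reverse=True)
```

In a setup like this the individual scores can be learned, but the weights that combine them are still set by hand, which is where the heuristic complexity tends to accumulate.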

Here is a rule of thumb for the complexity of ML systems that is generally agreed upon by ML practitioners:

- A simple, intuitive heuristic is less complex to understand and maintain than a machine-learned system.

- However, a well-designed ML system is less complex than a complex heuristic.

These closely match rules 1 and 3 from the rules of ML put forward by Google’s own Martin Zinkevich. For the complexity of search ranking specifically, see my answer to "What are the pros and cons of using a machine learned vs. heuristic / score-based model for search ranking?", which compares the complexity of heuristic and ML ranking.

Given this, I expect the arc of complexity for the Google search ranking algorithms to have adopted a sawtooth pattern as shown above:

- You start off with some heuristics for ranking. Over time these heuristics get more complex, since you need to encode more rules in your search engine to make it better.

- At some point, the system becomes complex enough that you need to take a step back and work on reducing the complexity of the system. You might do this by moving some of the heuristics to ML systems, or do other things like feature ablation or consolidation of existing ML systems. Usually these are deliberate efforts that lead to a sudden decrease in complexity of the system.

- Once your system reaches the planned lower-complexity state, you repeat the first two steps over and over. Because the search engine needs to get more relevant for a wider range of queries over time, the overall complexity trend of the system will still be slightly positive in spite of these “sawtooths”. Depending on how good Google is at this, they will try to keep the slope of this long-term trend to a minimum.
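The cycle described in the steps above can be sketched as a toy simulation. This is only an illustration of the shape of the curve: the function name and the `growth`, `threshold`, and `reduction` parameters are made-up assumptions, not measurements of any real system.

```python
def simulate_complexity(steps, growth=1.0, threshold=10.0, reduction=0.6):
    """Toy model of the sawtooth: complexity grows by `growth` each step.
    Once it has risen `threshold` above the last trough, a deliberate
    simplification removes a fraction `reduction` of that rise."""
    complexity = 0.0
    last_trough = 0.0
    history = []
    for _ in range(steps):
        complexity += growth  # small incremental improvements add rules
        if complexity >= last_trough + threshold:
            # Deliberate cleanup, e.g. replacing heuristics with ML.
            complexity -= reduction * threshold
            last_trough = complexity
        history.append(complexity)
    return history


history = simulate_complexity(30)
troughs = [history[9], history[19], history[29]]
```

Because each cleanup removes only part of the rise since the last trough (`reduction` below 1), every trough sits a little higher than the previous one, which gives the slightly positive long-term trend.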

Footnotes

[1] Edmond Lau's answer to Why is machine learning used heavily for Google's ad ranking and less for their search ranking?
