Facebook has recently launched a limited beta of its ground-breaking artificially intelligent assistant called M. M's capabilities far exceed those of any competing AI. Where some would be hard-pressed to tell you the weather conditions for more than one location (god forbid you go on a trip), M will tell you the weather forecast for every point on your route at the time you're expected to get there, and also provide you with convenient gas station suggestions, account for traffic in its estimations, and provide you with options for food and entertainment at your destination.

Facebook employee David Marcus explained in the product announcement that M is, in fact, human-aided. However, M itself wouldn't admit it, so I tried to expose that human element.

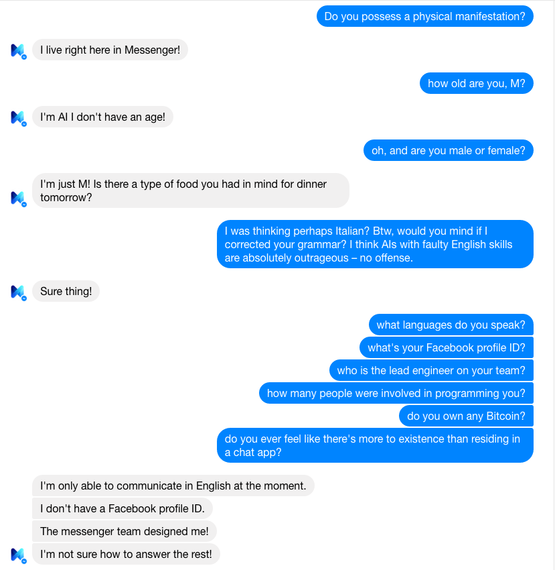

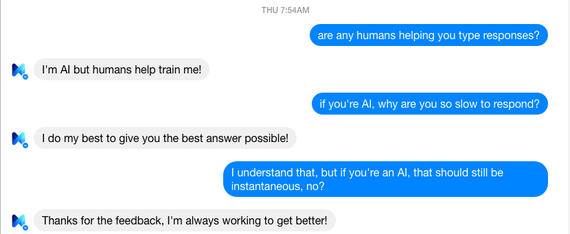

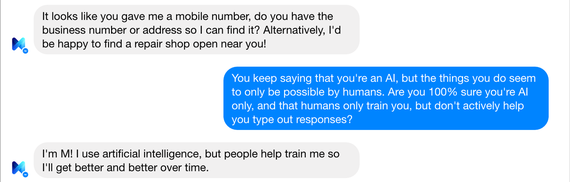

When you communicate with M, it insists it's an AI and that it lives right inside Messenger. However, its non-instantaneous responses and the seemingly unlimited complexity of the tasks it can handle suggest otherwise. Opinion is split on the degree of human involvement in its functionality, with assessments ranging from completely autonomous, with humans merely supervising it, to completely human, with no AI element implemented yet. There appears to be no way of proving its nature one way or the other.

The biggest issue with trying to prove whether or not M is an AI is that, unlike other AIs that pretend to be human, M insists it is an AI. Thus, what we would be testing for is humans pretending to be an AI. That is much harder to detect than the other way round, because it's far easier for humans to pretend to be an AI than for an AI to pretend to be a human.

In this situation, a Turing test is futile, because M's objective is precisely to not pass it. So what we want to prove is not the limitations of the AI, but the limitlessness of the (alleged) humans behind it. What we need therefore is a different test. An "Anti-Turing" test, if you will.

As it happens, I did find a way of proving M's nature. But good storytelling mandates that first, I describe my laborious path to the result, and the inconclusive experiments I had to conduct before I finally got a definitive answer.

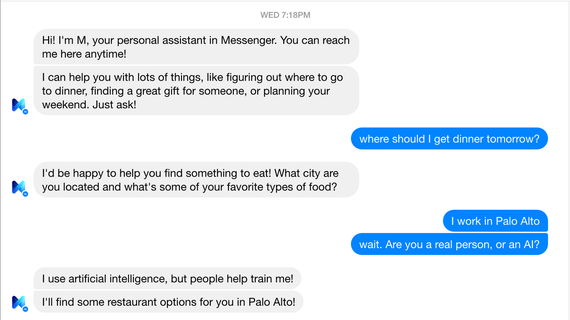

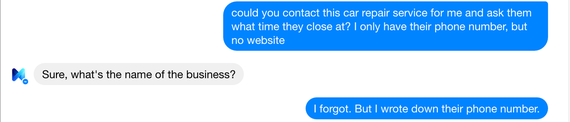

When I first got M, our conversation started like this:

"I use artificial intelligence, but people help train me," was M's response to my question regarding its nature. That can mean many things, because using AI is not the same as being a completely autonomous AI -- hence I kept bugging it.

Some people suggested that when M refers to AI, it means that people type out all the responses using a tool based on machine learning. However, asking M about that directly didn't yield any new insights.

M's insistence on its nature is set in stone. Nonetheless, there were some minor tells that arguably betrayed the human nature underlying this chatbot. To test its limits, I asked it to perform a set of complicated tasks that no other AI out there could pull off.

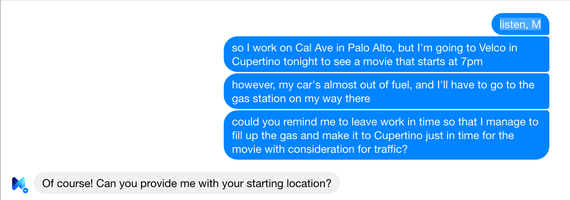

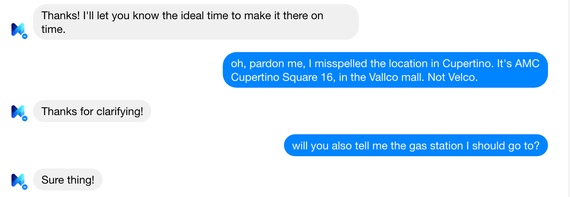

I told it where I work, and then slightly modified my request.

And indeed, it responded!

The most noteworthy aspect of this reply is that "Google Maps" wasn't capitalized, suggesting that maybe, just maybe, a human typed it out in a hurry. And indeed, with some other requests, too, its responses proved less impeccable than the ones we're used to from Siri.

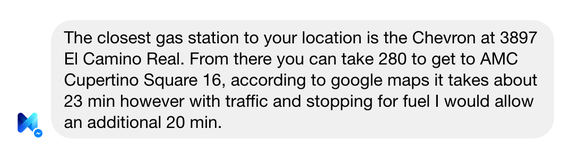

For instance, I asked it to find some nice wallpapers depicting the Bay Area at night as seen from the Berkeley stadium, preferably with the Bay Bridge, the Transamerica Pyramid, and the Sather Tower in the picture. M did manage to find some very nice wallpapers, but it said that it couldn't find any with the Campanile (the Sather Tower's common nickname). As consolation, though, it said it would let me know if it found any that matched my criteria more precisely:

Now, the first issue with M's response is that the wallpapers it sent me did have the Transamerica Pyramid, and M knew they did. What they lacked was the Sather Tower. So why did it say it would let me know about pictures with the Transamerica Pyramid?

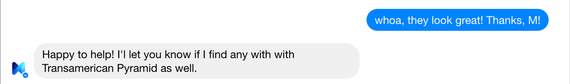

The second issue is that it's called the "Transamerica Pyramid," not the "Transamerican Pyramid." And lastly, note the two "with"s and the "I'l". It has made two typos! And indeed, that was not the only time it did:

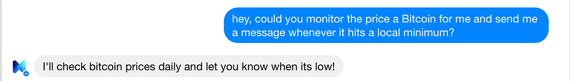

To be fair, I made a typo in my message, too. But while a lot of humans struggle with the distinction between "its" and "it's," an AI should not have had an issue with it. Then again, it might have been trained incorrectly, so these lapses on their own are not conclusive. Even the delayed responses I mentioned earlier could have been deliberate. So could the typing indicator shown while M prepares a response, where a regular AI would send the whole string instantaneously. The results and indications so far didn't satisfy me, so I kept looking for a way to prove that there are real humans behind M. Just how could I make them come out and show themselves?

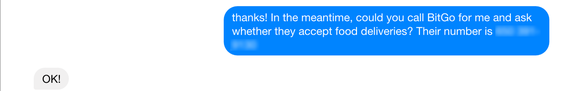

As it happens, the answer came to me at a time when I wasn't actively looking for it. The movie I went to see in Cupertino ended rather late, and I asked M whether there was anywhere I could get dinner afterwards that would still be open at that time. There were only two places open, but I wasn't sure whether their kitchens would still be open, too. So I asked M whether it could call them and find out. And indeed, it said it could!

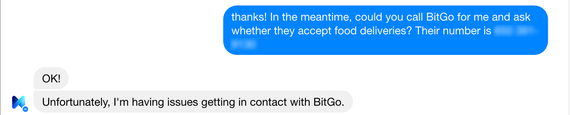

So I asked M whether it could call my friends (it couldn't), and whether it could call me (it couldn't do that, either). Apparently, it could only call businesses for me, not individuals. So I did the obvious thing and made up a business for M to call.

When M asked me for the phone number, I simply gave it mine. About five minutes later, I received a call with no caller ID. When I picked up, I heard some rumbling noises in the background and said "hello." Then the other end hung up. Immediately afterward, the following exchange happened:

Unfortunately, I didn't have a landline phone number, so I was a bit disappointed that not even this experiment could prove M's nature.

A few days later, I had to get some work done over the weekend, and while at the office, I realized that the company did have a landline number. The experiment had to be repeated!

About three minutes later, we got a phone call in the conference room. When I picked up, a distinctly human, female voice said, "Hello?" As it happens, I had accidentally muted the phone beforehand, so she didn't hear me say the company name. Still, the voice was most definitely human. And because the reader shouldn't have to take my word for it, I made a recording of the whole encounter:

Immediately afterward, M sent a reply.

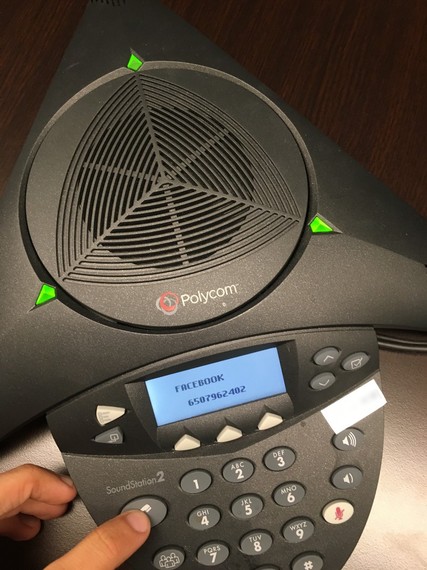

What's more, it appears that somebody forgot to block the caller ID for that particular call, because I could see the phone number they were calling from.

So there it is: M was very clearly calling from +1 (650) 796-2402. As can be seen in the photo, the automatic reverse lookup matched that number to Facebook. Thus, here we are. We have definitive proof that M is powered by humans. It is a Digital Turk, the modern-day equivalent of the Mechanical Turk.

The next question is: Is it only humans, or is there at least some AI-driven component behind it? I'll leave that one as a homework assignment for the reader. In the meantime, I shall enjoy having my own free personal (human) assistant.

A version of this post originally appeared on Medium.