Facebook has taken another small step forward in its battle to limit the proliferation of fake news on the social network.

On Wednesday, the company announced that it would begin rolling out a redesigned “trending” module that ― at least in theory ― should expose users to more reliably sourced content.

That redesign consists of three changes:

Trending stories will no longer be tailored to individual users. Instead, everyone in the same region will see the same stories.

A new algorithm will help Facebook figure out what’s trending in the first place.

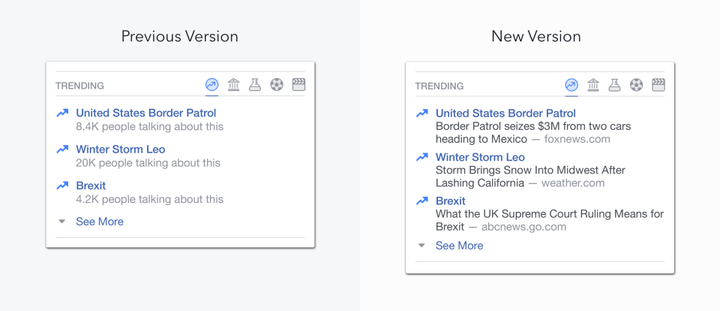

Facebook will be adding more information to the trending module itself, including specific publisher headlines.

The new algorithm ― which, of the three changes, appears to be the most effective in countering fake news ― also seems to be the one Facebook is least eager to talk about.

We do know that, as of Wednesday, multiple publishers have to post articles about a topic at around the same time for it to trend on the site. This should make it less likely that a single (probably fake) story explodes in popularity. Articles that prompt higher user engagement will also be given additional weight.

“Trending uses a variety of signals from [the] news feed ― including when people report news as fake or spam ― to help prevent fake news, hoaxes or spam from appearing in trending,” said Will Cathcart, a vice president of product management at Facebook.

Though Facebook’s trending redesign is undoubtedly a step in the right direction, critics say it doesn’t do nearly enough ― especially compared to competitors like Snapchat, which have taken far more aggressive action.

“Today’s announced policy changes are at best a marginal improvement,” said Media Matters President Angelo Carusone in a statement to The Huffington Post. “While moving in the right direction, these half-measures will not stop the rampant lies spreading on the platform. We can’t forget that Facebook made the problem of fake news significantly worse when they acted on right-wing misinformation and fired all their human editors over the summer and let their algorithms get gamed.”

Carusone was referring to what happened last spring, when conservative figures accused Facebook of suppressing news favorable to them. An internal investigation found no evidence to support the claim, but the company nevertheless fired all its editors over the summer and left an algorithm in charge of sorting trending stories.

This latest tweak also comes on the heels of a torturously long process the company unveiled in November to flag fake news as “disputed.” Critics say that process really only helps Facebook distance itself from any responsibility.

“The Facebook fake news vetting plan is both unobjectionable — who has a problem with fact-checking? — and unintentionally hilarious: Facebook, run and staffed by some of the world’s most clever people, who have created one of the world’s most powerful companies, can’t figure out if Hillary Clinton is running a child sex ring out of a pizza parlor. So it’s going to outsource that question to someone else.”