Starting on Wednesday, Facebook is rolling out a new feature for suicide prevention.

The social media site is partnering with Now Matters Now, the National Suicide Prevention Lifeline, Save.org and Forefront: Innovations in Suicide Prevention, a nonprofit operating out of the University of Washington's School of Social Work, to give users more options when they see a friend post something that is concerning. The feature works on both desktop and mobile.

If a Facebook friend posts something that you feel indicates he or she could be thinking about self-harm, you'll be able to click the little arrow at the top right of the post and click "Report Post." There, you'll be given the options to contact the friend who made the post, contact another friend for support or contact a suicide helpline, the University of Washington reported on Wednesday.

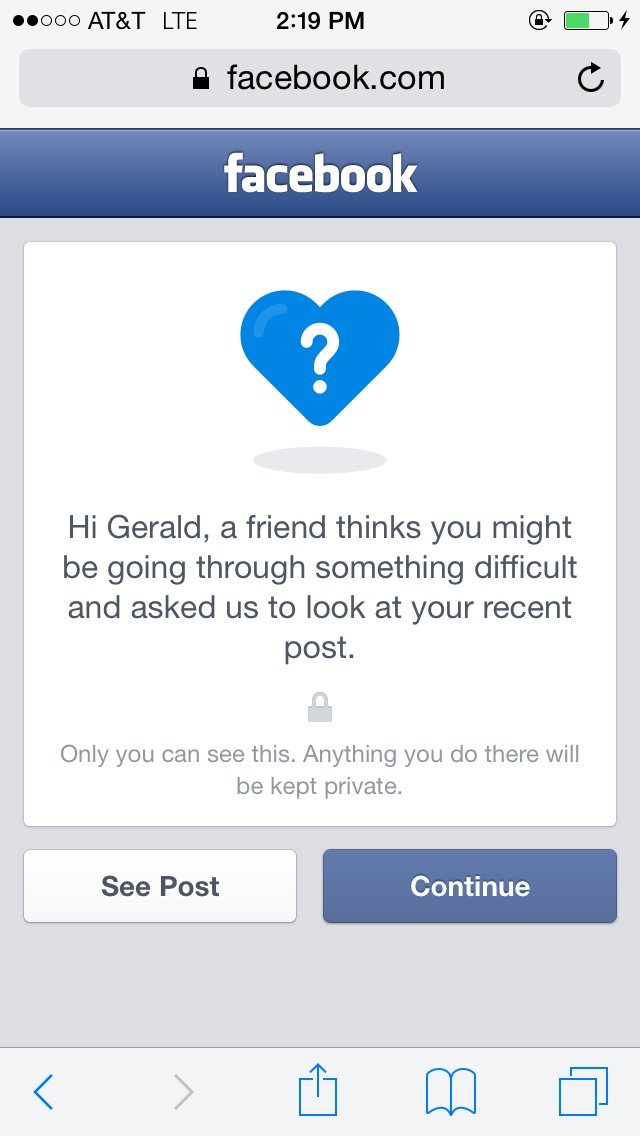

After that, Facebook will look at the post. If Facebook feels like the post indicates distress, it will contact the person who posted it. That person will be greeted with the following pop-ups when he or she next logs in:

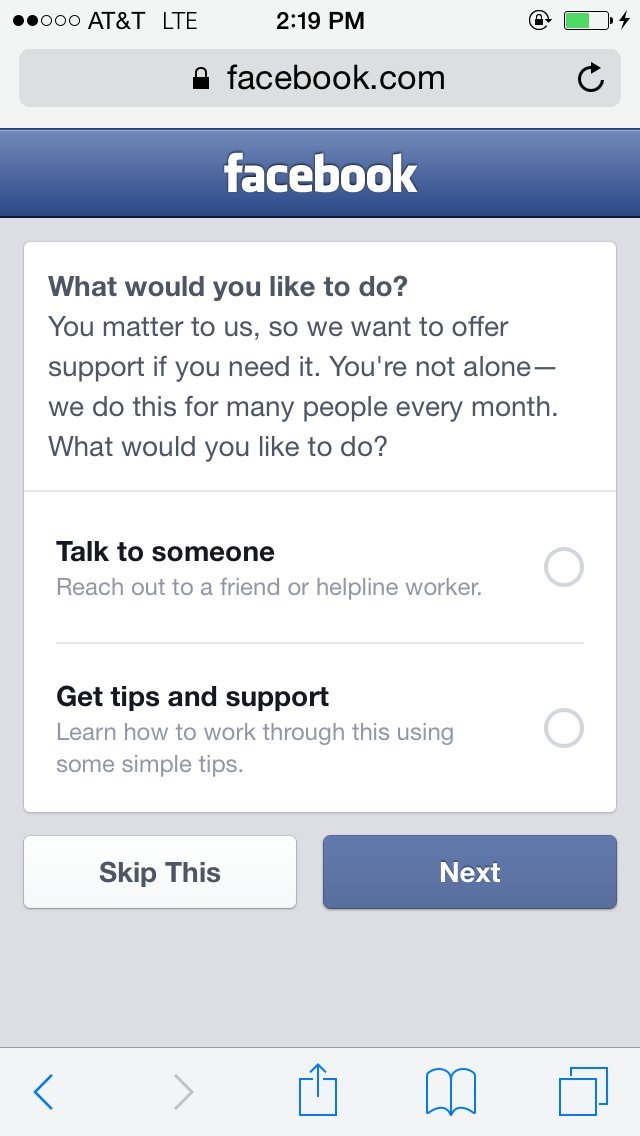

Then they'll see options to reach out to a friend or get tips and support.

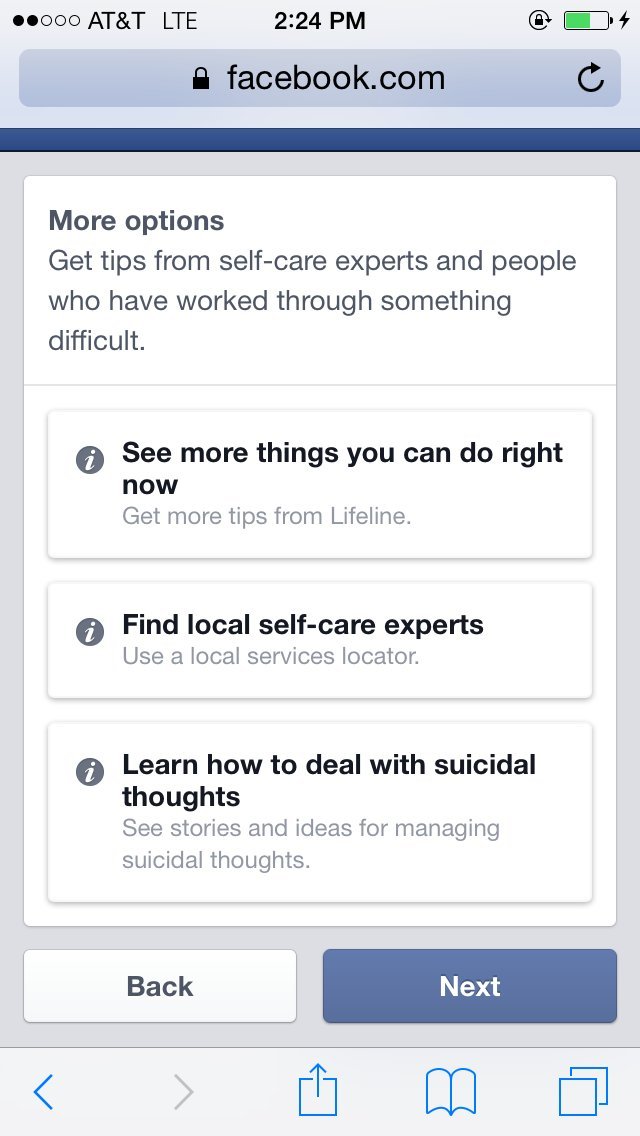

If the person decides they'd like to talk to someone, they'll be prompted to call a friend, send a friend a Facebook message or contact a suicide helpline. They can either call or message a suicide prevention expert. Facebook also provides videos that use true stories of people who have dealt with suicidal thoughts.

There's also a section that recommends simple relaxation techniques like baking, drawing, going for a walk or visiting a library.

Facebook will even help someone find a self-care expert.

Facebook has had a way to report potentially suicidal content since 2011, but this is the first time this support will be built directly into posts. Until now, you had to seek out Facebook's suicide prevention page and upload a screenshot or URL of the post.

The new reporting feature is currently available for 50 percent of Facebook users in the U.S. and will roll out to the rest of the country in the next few months, a spokesperson for Facebook told The Huffington Post in a phone interview on Wednesday.

"We have teams working around the world, 24/7, who review any report that comes in," Rob Boyle, Facebook Product Manager, and Nicole Staubli, Facebook Community Operations Safety Specialist, wrote in a post for Facebook Safety on Wednesday. "They prioritize the most serious reports, like self-injury, and send help and resources to those in distress."
