The hype about Artificial Intelligence is all about the algorithms. DeepMind, the Google company that is leading the world in machine learning, recently published an article describing how AlphaGo Zero managed to become - all by itself and from scratch - a master of Go and beat all previous versions of itself, using an advanced form of reinforcement learning. But while companies and organizations elbow each other in their quest for top talent in algorithmic design and data science, the real news is coming not from the world of bits but from the world of wires, silicon and electronics: hardware is back!

The flattening of Moore's Law

First, a quick historical journey: in 1958 the first integrated circuit contained 2 transistors and was quite sizable, covering one square centimeter. By 1971 "Moore's Law" had become evident in the exponential increase in the performance of integrated chips: 2,300 transistors packed into the same surface as before. By 2014 the IBM P8 processor had more than 4.2 billion transistors and 16 cores, all packed into 650 square mm. Alas, there is a natural limit on how many transistors you can pack into a given piece of silicon, and we will reach that limit soon.

Moreover, machine learning applications, particularly in pattern recognition (e.g. understanding speech, images, etc.), require massive parallel processing. When Google announced that its algorithms were able to recognize images of cats, what it failed to mention was that its software needed 16,000 processors to do so. That's not too much of an issue when you can run your algorithms on a server farm in the cloud, but what if you had to run them on a tiny mobile device? This is increasingly becoming a major industry need. Running advanced machine learning capabilities at the end point confers huge advantages on users, and solves many data privacy issues as well. Imagine if Siri, for example, did not need to make cloud calls but was able to process all data and algorithms on the hardware of your smartphone. But if you thought that your smartphone heats up too much during a few minutes' conversation, or after playing Minecraft, wait till Siri becomes truly personalised by running on your phone too.

Solving for the bottleneck

The reason why devices heat up, and the main problem with our current computer hardware designs, is the so-called "von Neumann bottleneck": classic computer architectures separate processing from data storage, which means that data needs to be transferred back and forth from one place to the other every time a calculation takes place. Parallelism solves part of this problem by breaking down calculations and distributing processing, but you still need to move data at the end, to reconcile everything into a desired output. So what if there was a way to get rid of the hardware bottleneck altogether? What if processing and data resided in the same place, so that nothing had to be moved around, producing heat and consuming so much energy? After all, that is how our brains work: we do not have separate areas for processing and data storage as computers do; everything happens at our neurons.

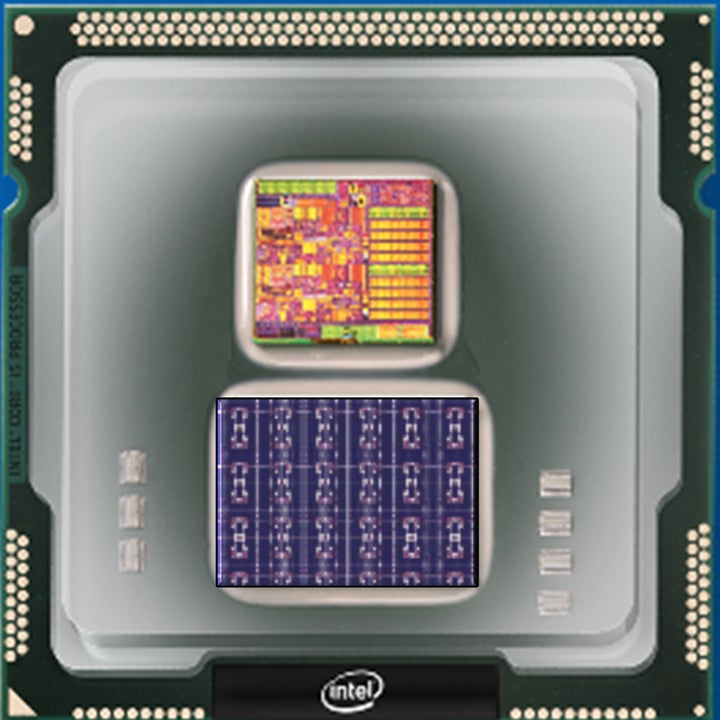

Intel’s Loihi neuromorphic chip.

Getting inspired by the way our brain functions is not new in Artificial Intelligence - we are already doing that in deep learning using neural networks. However, we do so by emulating the functioning of neurons using machine learning algorithms and parallel processing. But what if instead of emulation we had computers that functioned just like our brains do? Since the 1970s people have envisioned mapping brain functions directly onto hardware, in other words hardware that mirrors the architecture of the brain. This approach, called "neuromorphic computing", is finally becoming commercialised, with companies such as Intel and Qualcomm recently announcing releases of neuromorphic chips for commercial use.

Neuromorphic chips can be used for AI applications at the end point, which is very exciting news indeed. Beyond that, they also have the potential to advance machine intelligence to a whole new level. By using electronics, instead of software, to develop machine cognition we may be able to achieve the dream of general artificial intelligence and create truly intelligent systems.

Quantum: the big bang of computing

But the true big bang in computing will come not from neuromorphic chips (which may end up having only niche applications despite the big promise), but from harnessing quantum physics. As the appetite for fast computing increases, our ambitions for solving really hard problems increase too. What if we could compute the optimal way of arranging a series of molecules in order to develop a cure for cancer? This question is in effect a reduction of all cancer research so far, which is currently undertaken by trial and error. Classical computing is unable to solve such problems, where the number of parameter combinations simply explodes after a couple of iterations. Quantum computing has the potential of calculating all possible combinations at once, and arriving at the correct answer in seconds. There are many similar optimization problems that could be solved with quantum computing. Think of optimizing resource allocation in a complex business, or indeed in an economy, and making predictions that can support the best strategies - or think of factorizing numbers in cryptography.
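To get a feel for how quickly combinations explode, here is a minimal sketch that counts the possible orderings of a series of items. It assumes, purely for illustration, that each arrangement is a simple ordering of distinct items (so the count is n factorial); the function name `arrangements` is hypothetical, and real molecular design problems are far more intricate than this.

```python
import math


def arrangements(n_items: int) -> int:
    """Number of distinct orderings of n_items distinct items (n factorial)."""
    return math.factorial(n_items)


# Illustrative sizes only: watch how fast the count grows.
for n in (5, 10, 20, 30):
    print(f"{n} items -> {arrangements(n):,} possible orderings")
```

Even at a few dozen items, checking every ordering one by one quickly becomes impractical, which is the explosion described above.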

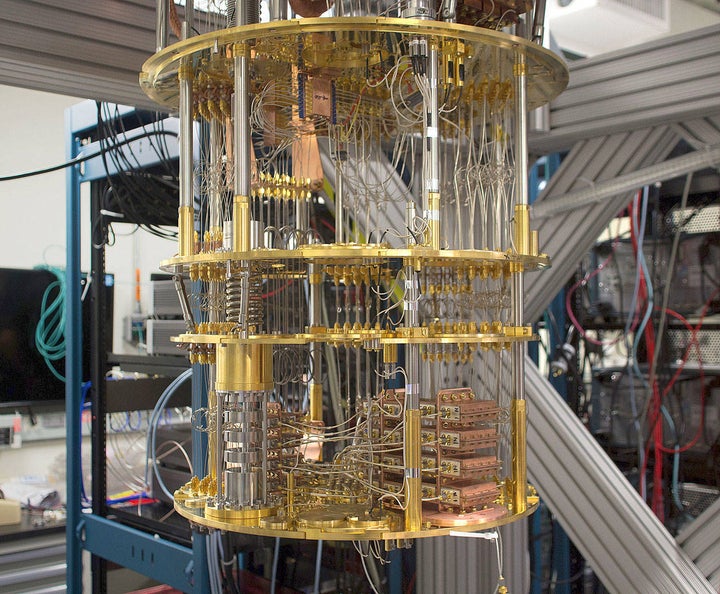

IBM’s Quantum Computer.

Quantum computers are evolving fast: we are now at the 50-qubit level. Let's put this number into perspective. A 32-qubit quantum computer can process 4 billion coefficients and 265GB of information - which is not too impressive, you may argue, since you can run similar programs on a laptop computer in just a few seconds. But once we reach the 64-qubit mark the story changes massively. Such a computer can compute all the information that resides on the Internet at once, or 74 "exabytes" (billion GB) - processing that would take years on current supercomputers. And we are already very close to that! However, the real game changer will come once we develop a 256-qubit quantum computer. Such a computer will be able to do calculations that are greater in number than all the atoms in the universe... Quantum computing is cosmic computing, and its repercussions on human civilisation can be massive and profound.
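The "4 billion coefficients" figure comes from simple doubling: describing the state of n qubits takes 2^n complex coefficients. The sketch below counts them. The function names are illustrative, and the memory estimate assumes 16 bytes per coefficient (two 64-bit floats), which is my assumption rather than anything stated above, so the byte totals will differ from figures quoted under other encodings.

```python
def num_coefficients(n_qubits: int) -> int:
    """Number of complex coefficients describing an n-qubit state: 2**n."""
    return 2 ** n_qubits


def state_bytes(n_qubits: int, bytes_per_coefficient: int = 16) -> int:
    """Memory to store every coefficient classically.

    bytes_per_coefficient=16 is an assumption (two 64-bit floats per
    complex number); other encodings give different totals.
    """
    return num_coefficients(n_qubits) * bytes_per_coefficient


# Sizes mentioned in the text; each extra qubit doubles the count.
for n in (32, 50, 64):
    print(f"{n} qubits -> {num_coefficients(n):,} coefficients, "
          f"{state_bytes(n):,} bytes under the 16-byte assumption")
```

The point is the doubling, not the exact byte count: going from 32 to 64 qubits multiplies the number of coefficients by about four billion.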