The human mind has a marvelous capacity for inventiveness. In our philosophy have been dreamt the plays of Shakespeare and the computations of Alan Turing, not to mention the staggering technology underlying the phone on which you are perhaps reading these words. And while we've taken great advantage of it, it turns out that this inventiveness is actually necessary for a more fundamental reason. Your brain is forced into being creative in order to perform the simple act of seeing the world around you.

Perception is a type of problem that mathematicians refer to as "ill-posed". Because of nothing more than light and geometry, a given image can have an infinite number of possible causes in the real world. Nonetheless, perception is a problem our brains must solve, so that we can find food, shelter, and each other.

Faced with this dilemma, the brain must resort to inference. Essentially, it must make guesses, albeit educated ones. One consequence of this is that while we all live in the same world, we don't always see it the same way.

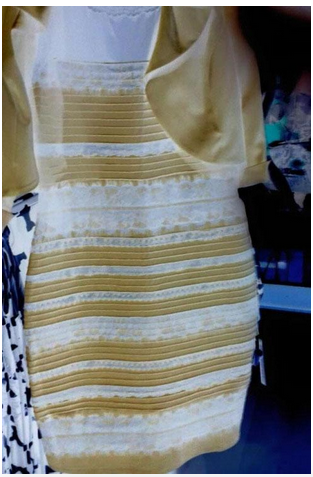

Naturally, this leads to the beautiful diversity of human minds, for both good and ill. Recently, the electronic distribution of a picture of nothing more than a dress gave birth to the stalwart White-and-golders and the die-hard Black-and-bluers. This dress is a nice example of how what you see isn't necessarily what you perceive.

Let's take a look, shall we?

So what color is it? White-and-gold or black-and-blue?

Since we have access to the raw image, we can peek inside at the pixel values to find that the dress is (on average) composed of the following two colors:

So, go team "bluish-gray-and-brown"!
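For readers who want to try the pixel-peeking themselves, the averaging step can be sketched in a few lines of Python. This is only a minimal illustration: the function name `average_color` and the sample pixel values are hypothetical placeholders, not measurements from the actual photograph, and a real analysis would first need to pick out which pixels belong to each part of the dress.

```python
from statistics import mean


def average_color(pixels):
    """Return the per-channel mean of a collection of (R, G, B) tuples, rounded to ints."""
    pixels = list(pixels)
    if not pixels:
        raise ValueError("need at least one pixel")
    # zip(*pixels) regroups the data into all reds, all greens, all blues.
    return tuple(round(mean(channel)) for channel in zip(*pixels))


# Hypothetical samples standing in for pixels taken from one stripe of the dress.
# These numbers are made up for illustration only.
stripe_samples = [(120, 130, 180), (130, 140, 190), (125, 135, 185)]
print(average_color(stripe_samples))
```

Averaging like this throws away all of the spatial context, which is exactly the point: the numbers say "bluish-gray", while your visual system is busy interpreting the whole scene.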

Determination of color is based on a complicated inference, involving surrounding colors, local brightness cues and shape. Ed Adelson has some wonderful and now relatively famous brightness illusions on his website, such as the checker shadow illusion.

Color interpretation relies on the same kind of contextual inference as brightness. In Bloj, Kersten, and Hurlbert (1999) the authors demonstrated that context inferred from depth could change the perceived color of an object. They showed subjects a card, half magenta and half white, folded along the divide like a bi-fold pamphlet, so that the right side appeared magenta and the left side appeared pale pink, owing to the reflected luminance. Subjects report exactly that. When viewed through a pseudoscope, which flips disparity cues so that the crease on the folded card appears pointed towards the subject, subjects report that the left side also appears as magenta, rather than pink. The reversal of depth destroys any possible interpretation of this luminance as reflection.

Humans also have strong built-in assumptions about perceived light sources. In terms of brightness, humans have a "light-from-above" prior that often determines how we interpret shapes. Loosely speaking, the sun is above us, and this fact is used to determine the perceived shapes of surfaces. Notice how the figure below appears to be a "bump" coming out of the screen rather than a dimple. If you are reading this on a phone, try turning it around and see what happens.

In the case of the dress, one's assumptions about lighting have a strong impact on the perceived color. In particular, your perception will be affected by whether your visual system sees the dress as being in bright light or in shadow. Comic book colorist Nathan Fairbairn put together the following in order to illustrate these two different potential hypotheses about light and color in the picture.

So what happens if we try to remove contextual information? It so happens that the two average colors of the dress are close to being inverses of one another. Inverting them gives us:

Inverting the colors in the original photo should approximately "swap" the two colors on the dress, as well as remove contextual information (or perhaps render it nonsensical). The color-inverted dress looks like:

I see white-and-gold here, and I saw white-and-gold in the original. My wife is a die-hard Black-and-bluer, and she sees the inverted dress as light-blue-and-gold. Notice that the image now has artifacts that look (to me anyway) like damage in an old photograph. This is a sample size of one, so I'm curious to know if this inversion changes the perceptions of any other black-and-bluers out there.
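The inversion used here is simple enough to sketch. Assuming ordinary 8-bit RGB values, inverting a color just means subtracting each channel from 255. The helper names below are hypothetical, and the two example colors are made-up stand-ins for the dress's averages, chosen only to show how one might check that two colors are "close to being inverses".

```python
def invert(color):
    """Invert an 8-bit (R, G, B) color channel by channel."""
    return tuple(255 - c for c in color)


def inverse_mismatch(a, b):
    """Largest per-channel gap between b and the inverse of a (0 means exact inverses)."""
    return max(abs(x - y) for x, y in zip(invert(a), b))


def invert_image(rows):
    """Invert every pixel of an image stored as a list of rows of (R, G, B) tuples."""
    return [[invert(pixel) for pixel in row] for row in rows]


# Hypothetical placeholder colors, NOT the measured averages from the photo.
bluish_gray = (130, 140, 180)
brown = (120, 100, 70)

print(invert(bluish_gray))                   # a brownish color
print(inverse_mismatch(bluish_gray, brown))  # small value: nearly inverses
```

Because inversion is its own undo (inverting twice returns the original), no information in the photo is lost. What changes is whether the scene still makes sense to the visual system as a dress under some plausible light.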

We know that training can alter the "light-from-above" prior, and it seems plausible that people's differing perceptions of the photo are due to their differing experiences, in particular their experience with light, shading, material, and overexposed photographs.
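To make the role of the lighting assumption concrete, here is a deliberately crude toy model. It assumes that the light reaching the eye is the surface color multiplied by the color of the illumination, channel by channel, so that a guess about the surface can be made by dividing the illumination back out. This is an illustrative simplification and not a description of what the brain actually computes; the function name and every number below are hypothetical.

```python
def discount_illuminant(pixel, illuminant):
    """Estimate a surface color by dividing out an assumed illuminant.

    Both arguments are (R, G, B) tuples with channels in the range 0..1.
    Results are clipped at 1.0, the brightest a surface can be in this toy model.
    """
    if any(channel <= 0 for channel in illuminant):
        raise ValueError("illuminant channels must be positive")
    return tuple(min(1.0, p / l) for p, l in zip(pixel, illuminant))


# One and the same hypothetical bluish-gray pixel from the image...
pixel = (0.50, 0.55, 0.70)

# ...interpreted under two different made-up guesses about the lighting.
bluish_shadow = (0.55, 0.60, 0.75)  # dim, blue-tinted light, as in shade
bright_warm = (1.00, 0.95, 0.80)    # strong, yellowish light

print(discount_illuminant(pixel, bluish_shadow))  # all channels near 1: close to white
print(discount_illuminant(pixel, bright_warm))    # blue channel dominates: clearly blue
```

The pixel never changes. Only the assumption about the light does, and that alone is enough to move the estimated surface from nearly white to distinctly blue.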

Our brains have to make guesses, but they don't always make the same guesses, even though we live in the same world. One of the hardest inference problems our brains have to solve is figuring out how everyone else sees the world. Perhaps with some very hard work, I can be a Black-and-bluer, too.

--

Michael Buice is a scientist at the Allen Institute for Brain Science. His research interests are in identifying and understanding the mechanisms and principles that the nervous system uses to perform the inferences which allow us to perceive the world.

This post is part of a HuffPost Science series exploring the surge of new research on the human brain. Are you a neuroscientist with an insight to share? Tell us about it by emailing science@huffingtonpost.com.