DeepMind, the London-based AI company owned by Google, announced a major breakthrough in machine learning last week: AlphaGo Zero learned to play Go by itself, from scratch, without learning from any human games. Already artificial intelligence can do many things that humans cannot. But how far away are we from building "human-like" AI? What are the key problems that we need to solve before we get there?

Could she become real?

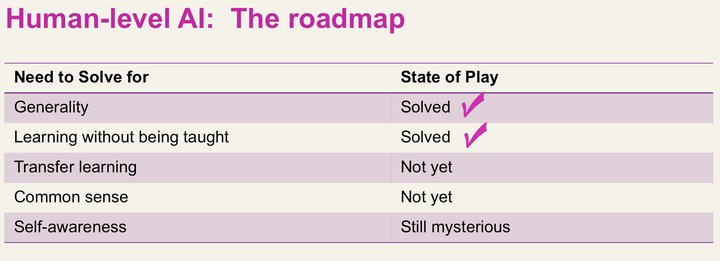

To answer these questions, I propose five milestones that machines must reach in order to become as intelligent as humans: generality, learning without being taught, transfer learning, common sense and self-awareness. Let's look at them in turn and see where we stand today on the road to human-level AI.

Generality: this means that we have developed an approach, or a systems architecture, that can be applied to any problem independent of the domain. I consider this problem largely solved. Probabilistic approaches to AI, such as deep learning networks (as opposed to "symbolic" approaches, e.g. expert systems), have demonstrated generality. We can bring the same deep learning networks and algorithms to bear on virtually any problem that is a good use case for machine learning.

Learning without being taught: this is what DeepMind has achieved with AlphaGo Zero. By tweaking and simplifying the reinforcement learning approach used in the first AlphaGo, they demonstrated how a neural network that is given a goal (e.g. "to win") can learn by itself and invent strategies for achieving that goal. It is a major breakthrough, and it has brought us a big step closer to human-like AI.
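To make the idea of "give it a goal and let it play itself" concrete, here is a toy sketch in Python. It is not DeepMind's method: AlphaGo Zero pairs a deep neural network with tree search, whereas this sketch uses a plain lookup table and Q-learning on a much simpler game, in which two players alternately take one to three stones from a pile and whoever takes the last stone wins. The function name `train_nim` and all of its parameters are my own illustrative choices.

```python
import random


def train_nim(max_stones=12, episodes=20000, alpha=0.5, epsilon=0.3, seed=0):
    """Learn a stone-taking game purely by self-play (toy illustration)."""
    rng = random.Random(seed)

    def legal(stones):
        return [a for a in (1, 2, 3) if a <= stones]

    # q[(stones, take)] estimates the outcome for the player about to move:
    # +1 is a sure win, -1 a sure loss. Everything starts at 0 (no knowledge).
    q = {(s, a): 0.0 for s in range(1, max_stones + 1) for a in legal(s)}

    def best(stones):
        return max(legal(stones), key=lambda a: q[(stones, a)])

    for _ in range(episodes):
        stones = rng.randint(1, max_stones)  # random start covers all positions
        while stones > 0:
            # Sometimes explore a random move, otherwise play the current best guess.
            if rng.random() < epsilon:
                take = rng.choice(legal(stones))
            else:
                take = best(stones)
            left = stones - take
            if left == 0:
                target = 1.0  # took the last stone: the only reward signal
            else:
                # The opponent moves next, so their best outcome is our worst.
                target = -q[(left, best(left))]
            q[(stones, take)] += alpha * (target - q[(stones, take)])
            stones = left  # the same table now plays the other side

    # The learned strategy: preferred move for every pile size.
    return {s: best(s) for s in range(1, max_stones + 1)}


policy = train_nim()
print(policy)
```

Nobody tells the program how to play well; the only feedback is the win at the end of each game. The known winning strategy for this game is to leave the opponent a multiple of four stones, so the learned table can be checked against it.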

Transfer learning: this means that a system can use, or abstract, the knowledge it has accumulated by solving a specific problem, and apply this knowledge to solving a different problem. This is something that we humans do naturally. We "see patterns" and "similarities" in problems, and we apply heuristics and accumulated "experience" to solve them. We are not there yet in AI, although there seems to be at least one promising path to achieving transfer learning in machines: combining probabilistic and non-probabilistic ("symbolic") approaches. For example, imagine a system that can detect the steps that its neural network takes in solving a given problem and translate them into a heuristic algorithm. It could then generalize this domain-specific heuristic algorithm and use it to drive the neural network towards solving a different problem.

Did he get wet to get that medal?

Common sense: this is a really hard problem. Take for example the sentence "Michael Phelps won the 200m butterfly gold medal in the Beijing Olympics". When you read this sentence you instantly, and implicitly, assume a long list of things; for example, that Phelps got wet in winning the medal, that he had to take his socks off before he went into the pool, etc. This association of logical hypotheses with the original statement is extremely hard to code effectively in a computer. We are still a long way from solving common sense. But a good start would be to look into what neuroscience can teach us about the way we form, retain and use memories. The function of human memory is perhaps the key to developing approaches for common sense in machines.

Self-awareness: this level of "consciousness" is still mysterious in humans, although neuroscientists have made several breakthroughs in understanding what happens in the human brain when we become aware of something, i.e. when an "I", or a "self", emerges and we have subjective experiences. For many, high-level consciousness is perhaps the "last bastion" of humanity in retaining some kind of superiority over the intelligent machines of the future. Nevertheless, creating machines that mimic self-awareness may not be impossible. I say "mimic" because, unless we find an objective way to measure human consciousness, we will forever be unable to conclude whether a machine is "truly" conscious or not. Machines that will have us believe they have a self, or a personality, should be relatively easy to develop. But whether they would be truly self-aware, we will only know if we crack the "hard problem of consciousness" first.