Facebook will make us do anything it wants!!!!!!!

No doubt you have seen these headlines, amongst others, in your own various newsfeeds and such over the past couple of weeks.

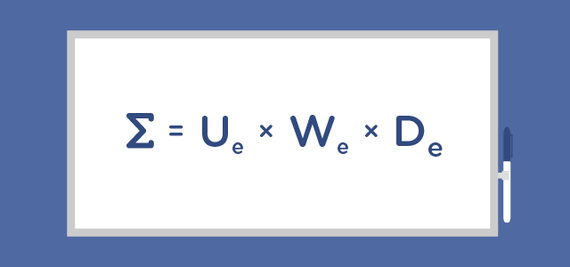

And of course you have read about the ALGORITHM that drives all of that scary and potentially dangerous behavior.

Personally, I followed the narrative with some amusement, tweeting some of the stories and wondering why so many of the writers and analysts didn't seem to really understand what an algorithm is and isn't.

The Guardian, back in July of 2013, presented an article titled "How Algorithms Rule the World."

In the piece they quote Dr. Panos Parpas, a lecturer in the quantitative analysis and decision science ("quads") section of the department of computing at Imperial College London, who, amongst many important points, said, "As technology evolves, there will be mistakes, but it is important to remember they (algorithms) are just a tool. We shouldn't blame our tools."

The point is that many were already worrying about the damage an "errant" algorithm could cause, and the biggest part of the problem seems to be that we think algorithms have a mystical, holy, omnipotent power. More on that in a minute.

On July 15, 2008, I was asked to testify before the House Committee on the Judiciary's Task Force on Competition Policy and Antitrust Laws, at its hearing on Competition on the Internet.

It was like a movie set...the members of Congress in front of me...the press behind...and there I was reading my testimony on the topic of media monopoly - in this case, a proposed merger of Yahoo and Google. But then I "tweaked" the algorithm - so to speak:

So why am I worried about the proposed deal between Google and Yahoo?

On the most basic of levels my American, anti-monopoly hackles have risen as the market share that such a deal would represent will eliminate any notion of free and open enterprise. It is an agreement that would create fixed prices, destroy a, currently, competitive market and it would virtually eradicate any sense of auction style bidding.

However, I believe, that is only a part of the issue and I know that you have covered this part of the topic in great depth. Allow me to take a slightly different tack.

Search is all about the algorithm, and the algorithm is all about control. And, if you control the algorithm you effectively control the information it presents. Think about it - by restricting or pushing potential search results - at the most benign level - Google will have even more influence on pricing - bringing up or suppressing topics at will. At the more Machiavellian level, do we really want Google controlling the answers to everything and anything we ask?

Think about it. With few other search options and the built-in lethargy and inertia that Web users portray when it comes to switching, a monopoly in this arena is ill advised. I don't believe that any single entity should ever wield that much power, influence or control.

So while my objections begin with the notion of monopoly, it is my fear of what Google or any company could do with that position of unbridled power that makes me oppose the proposed partnership/merger.

What was most interesting to me were the many calls I received afterwards from the various congressional staff who wanted to learn more, and when I pointed out to them that even members of Congress could be erased by the "algorithm"...I bet you can imagine their reactions.

Again, I was trying to make them understand that the danger is the tool wielded by the wrong people. That was the frightening prospect, not some vague notion of an amorphous entity lurking behind our lives like the sentient evil in some horror flick.

Less than a month ago, The Atlantic made it clear: "Go Tweak Yourself, Facebook":

Talking about social-network service changes as mysterious changes to algorithms turns software companies into false idols...As I argued last year, talking about big, complex companies and their services as mere "algorithms" amounts to a theological position. It fashions a cathedral of computation at whose altar Internet users supplicate. In that piece, I suggested replacing the term "algorithm" with "God" when you read it in the press. Facebook could tweak its God to stymie a Donald Trump presidency! Merely mentioning the vaunted "algorithm" raises its station, fetishizes it, treats it as a totem.

The article comments on changes and tweaks to the algorithm:

Another update, this one purely hypothetical, concerns the company's hypothetical ability to affect the outcome of elections by altering its news feed - to prevent a President Trump, for instance...

When you read about them in the news, these changes take on a dramatic tenor, almost a cosmic one. "While there's no evidence that the company plans on taking anti-Trump action," Trevor Timm wrote at The Guardian about Facebook's ability to influence elections, "the extraordinary ability that the social network has to manipulate millions of people with just a tweak to its algorithm is a serious cause for concern." And writing at Slate earlier this year, Will Oremus warned that "Facebook's news feed algorithm can be tweaked to make us happy or sad."

Make us happy or sad? Get us to vote for Donald Trump or whomever?

Or how about this:

From Tech Insider, "Amazon just showed us that 'unbiased' algorithms can be inadvertently racist":

A Bloomberg report Thursday revealed that Amazon's same-day delivery service offered to Prime users around major US cities seems to routinely, if unintentionally, exclude black neighborhoods...

Google's image-recognition software was found to tag black people with a racial slur. Searches for typically black names have turned up ads for services that let you look up arrest records. Search images for the identity "Latina" (without SafeSearch) and you'll encounter a whole lot of porn. Facebook, Google, and Amazon don't intend racism or malice, but relying too heavily on data and algorithms can produce racist and malicious results.

And here is the key:

The idea is that if you can feed enough discrete facts into a decision-making process, whether technological or corporate, you can not only make the most profit-maximizing decisions but erase the evils of human bias and malice.

But the problem with this thinking is it ignores the realities that there are biases involved in building any data-driven analysis, biases involved in what data gets included in the analysis, and biases inherent to a world scarred by centuries of ongoing racism and other bias. The algorithms don't self-assemble. People make them.

People make them...

And people have been making them since the Babylonian era, some 1,600 years before the Common Era.

The very word "algorithm" dates back to Muḥammad ibn Mūsā al-Khwārizmī (Arabic: محمد بن موسى الخوارزمی; c. 780 - c. 850; Arabic pronunciation: [ælxɑːræzmiː]), formerly Latinized as Algoritmi. He was a Persian mathematician, astronomer and geographer during the Abbasid Caliphate and a scholar in the House of Wisdom in Baghdad. Al-Khwārizmī's work on arithmetic was responsible for introducing the Arabic numerals, based on the Hindu-Arabic numeral system developed in Indian mathematics, to the Western world. The term "algorithm" is derived from "algorism," the technique of performing arithmetic with Hindu-Arabic numerals developed by al-Khwārizmī.

There is no doubt that Al-Khwārizmī had different objectives for his scholarship than pushing a trending story, suppressing one or even getting it going...

And there is no doubt that Facebook never intended to run a blind algorithm, as The New York Times reported this week in "Facebook 'Trending' List Skewed by Individual Judgment, Not Institutional Bias":

Facebook enlisted a set of 20-somethings as curators, copy editors and team leads, charged with sifting through the material the algorithms unearthed. They were crucial, they were told, to improving Facebook's ability to discern, over time, what constitutes news.

"Even if you want to have computers do everything, for technical reasons, resource limitations and product positioning, you may want humans to oversee the algorithms," Jonathan Koren, a former Facebook employee who worked on algorithmic ranking for Trending Topics, wrote in a LinkedIn post this week.

But a legion of 20-something sifters wields far more influence and power than a software loop. To that end, as reported, Facebook is upping its training, because this is where the rubber really hits the moral/ethical road in our world.

Yet the engineers play a role here as well.

According to The Washington Times: "Facebook's algorithm only as unbiased as its creators" -- "It turns out the algorithms that govern the internet can be as biased as the people who create and update them."

In other words, too much Digibabble...

And there you have it.

Truth is, I'm always on my soapbox of People First, and here, right under our very noses, are the very folks who would like us to think that they can automate it all, for our own benefit, in a truly neutral and open manner...

Bottom line...there is no objectivity in an algorithm.

Listen:

"How can they be?" asks Mark Hansen, a statistician and the director of the Brown Institute at Columbia University:

"They're the products of human imagination."

That's the good news and the bad. As I have written before - the digital world is only as good as we make it.

What do you think?

Read more at The Weekly Ramble