"I can't open the file you sent," she told me. "My computer's saying 'invalid file type.'" I double-clicked the file on my desktop again, and lo and behold, it opened as usual for me. "Let me send it again," I tried to say -- but she cut me off. "My laptop's been acting weird anyway," she sighed. "I'll just look this over at the office tomorrow."

Not much of a film noir plot, I admit -- but even so, it's a story whose unpleasant twists we know all too well: The tale of the delicate dance known (in certain circles) as interoperability.

The idea, in a nutshell, is this: computers on the same network don't always speak the same language. For all its famed speed and precision, your office computer can't always render a page of text the same way as the computers down the hall; it can't always open a file created in an older program; it can't seem to show you the same layout of buttons and menus that you're used to seeing at home. The better two different computer systems can exchange information and put it to use, the more "interoperable" they're said to be.

As computers have evolved from room-sized mainframes into menageries of smartphones, tablets and office desktop machines, interoperability has become a watchword for forward-thinking communications experts. Nowadays, those experts often refer to "unified interoperability" -- an idealized structure for communications (an abstract informational structure, that is, not a physical one) that would seamlessly integrate data across multiple platforms and operating systems, among data types like text, video, audio and SMS, and throughout company infrastructures of all shapes and sizes. "The purpose," says one article on the topic, "is to transition from one mode of communication to another and combines the different means in one session."

If this sounds like an ambitious goal, that's probably because it sounds pretty different from our everyday experiences with computers. Files (as we know them) fall into distinct types -- not unlike flowers or cars -- and each of those types has a specific purpose, usually tied to a program with which it's associated. Another handy feature of files is that they can be dropped into folders, copied, deleted and so on. This sort of system has worked reasonably well for home computer interfaces, but it's actually quite different from the way our brains organize our memories.

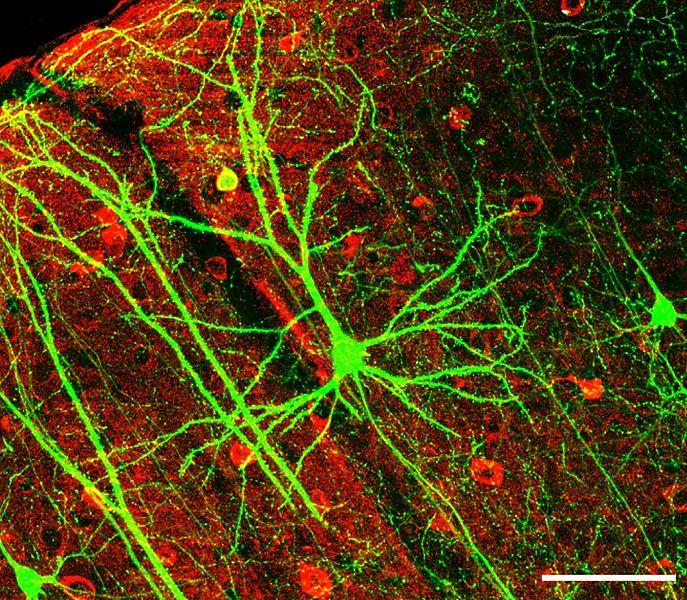

When you run across a new sight or sound -- or a scent, say, the whiff of an unfamiliar perfume -- clusters of neurons throughout your brain light up with whirlwinds of electrochemical activity, a bit like a self-perpetuating fireworks show. The next time you experience a smell similar to that first one, many of those same neuron groups will light up again -- but since you never smell exactly the same smell twice, they'll put on a slightly different fireworks performance than before. Over the years, as you smell the same (or similar) scents, neurons in your brain's smell and visual regions, your emotional and memory centers, and many other areas will learn to link themselves together for this performance -- and they'll even learn to fill in gaps if some of the fireworks fail to go off.

As you can probably tell, this is a very simplified version of how your brain learns. But my "fireworks show" metaphor gets at one of the most important ways our brains differ from computers: Instead of storing information in files, they store it in brain-wide patterns of multimedia connectivity -- smells, sounds, feelings and even abstract concepts are all linked seamlessly into neural behavior patterns we call "thoughts," "memories" and "ideas." If your brain were a computer network, its components would have almost perfect unified interoperability.

In brains and in business, our communication structures -- physical and otherwise -- shape our communicative abilities. In fact, according to one recent study, certain connectivity patterns in an infant's brain can predict that baby's language skills a full year down the road. A team led by Dilara Deniz Can and Patricia Kuhl scanned the brains of a group of infants with MRI, then analyzed the scans using a cutting-edge technique called voxel-based morphometry (VBM). These VBM analyses gave the researchers some clear views of the infants' developing gray matter -- the dense clusters of nerve-cell bodies where most information processing happens -- and white matter -- the high-speed connection fibers that link gray-matter areas.

When the researchers brought the same kids back to the lab a year later, they found that certain children appeared to comprehend many more words, and babble much more intently, than the others. These consistently turned out to be the kids who, as infants, had shown much higher gray- and white-matter concentrations in their cerebellum and hippocampus. When it came to verbal memory, these babies seemed to be wired for success.

The truth, though, isn't nearly so clear-cut -- and it's important to emphasize what this study does and doesn't demonstrate. It shows a correlation -- not a clear cause-and-effect relationship -- between gray- and white-matter concentration and very early verbal abilities. That doesn't mean that lower concentrations limit verbal ability -- a child can potentially lose half her brain and still walk around speaking in complete sentences. By the same token, a one-year-old's tendency to listen and babble may not predict much of anything about later verbal skills; as I explain in this HuffPost article, plenty of highly intelligent children barely utter a word -- or show obvious interest in what others say -- until they're several years old.

What this study does remind us, though, is that no part of the brain works alone. The strange thing is that neither the cerebellum nor the hippocampus -- the two areas where the future verbal geniuses showed such strong development -- is considered crucial for speech. Neuroscientists typically link our language skills with areas of the cerebral cortex, much higher in the brain, which evolved millions of years after the two culprits in this study. Even our most advanced mental skills, it seems, depend on brain structures more ancient than the dinosaurs.

If, someday in the not-too-distant future, we engineer a worldwide computer network of near-perfect unified interoperability, will its informational structures and methods look anything like those we're just beginning to decode in our own brains? Or will the uses and goals of a data network make these artificial systems too alien to qualify as "intelligent" in any human-created scheme of the world? Even if so, interoperability may well be a concept these systems would appreciate, in their own way -- as we do, when we discover it in ourselves.