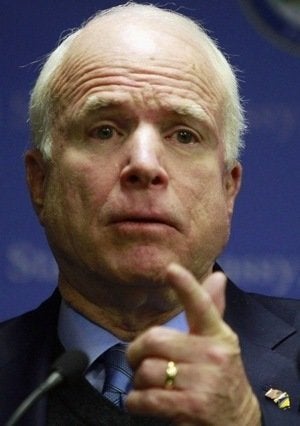

WASHINGTON -- Sen. John McCain (R-Ariz.) has raised many objections to a repeal of the military's controversial "Don't Ask, Don't Tell" policy, but this morning he added something new: criticism of the polling methodology behind the massive Department of Defense survey of servicemembers and their spouses released earlier this week. Speaking at a hearing of the Senate Armed Services Committee, McCain complained about the survey's sample size and response and coverage rates:

In addition to my concerns about what questions were not asked by this survey and considered in this report, I'm troubled by the fact that this report only represents the input of 28 percent of the force who received the questionnaire, including completely leaving out numerous members of the military in combat areas. That's only six percent of the force at large. I find it hard to view that as a fully representative sample set.

Does this criticism have merit? Not according to the standards of modern survey research and the detailed description of the methodology included in the Defense Department's survey report.

Let's take a closer look.

The first element of McCain's critique is one we often hear about sample surveys. How can you fairly represent the views of a large population by interviewing only a few hundred or a few thousand respondents? Random sampling may seem illogical, but as anyone who has taken a basic statistics class should know, obtaining a representative sample has far less to do with the size of the sample than with whether it is randomly drawn and demonstrably representative and unbiased.

That said, McCain's complaint that the DoD survey reached "only" 28 percent of sampled respondents is ironic -- and misplaced -- given that DoD completed an enormous number of interviews as compared to conventional phone polls. The DoD survey involved 115,052 completed interviews among service members on active duty or in the reserves, and 44,266 completed interviews among spouses of active duty or reserve personnel (a sample size attainable because respondents filled out surveys online, rather than completing them with the assistance of an in-person or telephone interviewer).

As Defense Department General Counsel Jeh Johnson pointed out during this morning's hearings, the recent Pew Research Center national survey on Don't-Ask-Don't-Tell sampled far fewer respondents (n=1,255 adults) even though it was measuring the opinions of the much larger U.S. population.
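To see why the number of completed interviews matters far more than the size of the population being measured, here is a rough Python sketch of the textbook margin-of-error formula for a proportion. It assumes simple random sampling and ignores weighting and design effects, so it is not the calculation either Pew or the DoD actually reported. The function name `margin_of_error` and the two population figures are illustrative assumptions; only the two sample sizes come from the surveys discussed above.

```python
import math


def margin_of_error(n, p=0.5, z=1.96, population=None):
    """Approximate 95% margin of error for a proportion from a simple random sample.

    p=0.5 is the worst case; z=1.96 corresponds to 95% confidence.
    If a population size is given, apply the finite population correction.
    """
    se = math.sqrt(p * (1 - p) / n)
    if population is not None:
        se *= math.sqrt((population - n) / (population - 1))
    return z * se


# Sample sizes cited in the article.
pew = margin_of_error(1255)
dod = margin_of_error(115052)
print(f"n=1,255:   +/- {pew:.2%}")
print(f"n=115,052: +/- {dod:.2%}")

# Hypothetical population sizes, chosen only to show that the size of the
# population barely changes the answer when the sample is a small fraction of it.
small_pop = margin_of_error(1255, population=1_000_000)
large_pop = margin_of_error(1255, population=300_000_000)
print(f"population 1 million:   +/- {small_pop:.2%}")
print(f"population 300 million: +/- {large_pop:.2%}")
```

Under these simplifying assumptions, the same 1,255 interviews give nearly the same margin whether the population is one million or 300 million, while the much larger DoD sample gives a far smaller margin.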

Another irony is that the 28.2 percent response rate achieved for active duty service members was in line with previous surveys of the military and far better than what most conventional telephone surveys currently obtain. That response rate fell within the "normal range" for Defense Department surveys, according to the testimony of Army Gen. Carter Ham this morning. But it is actually far better than the response rate obtained by last month's Pew Research Center DADT survey, which, according to Pew survey director Scott Keeter, had a response rate of just 10 percent using a comparable calculation (the AAPOR1 response rate formula).

As Keeter points out via email, studies conducted by the Pew Center and similar efforts by academic survey researchers have shown that the response rate alone "is not a good measure of survey quality or representativeness…surveys with low response rates can produce results that match up very well with externally validated parameters." He points to their final pre-election survey last month, which accurately predicted the national U.S. House vote despite an 11 percent response rate for the landline sample and 8 percent for the cell phone sample.

The second element of McCain's critique is that the survey "completely [left] out numerous members of the military in combat areas." That contention is not supported by the detailed description of the methodology included in the Defense Department report. According to the report's methodological appendix, the "target population" of the sample of active-duty members included all "members of the Army, Navy, Marine Corps, Air Force, and Coast Guard, up to and including pay grade O-6 with at least 6 months of service as of June 15, 2010." It makes no mention of any systematic exclusion of combat service members.

The survey also provided service members ample time to participate. The first solicitations were sent out via email and postal mail on July 7, and those solicited had until August 15 to complete the online questionnaire. Those who did not respond received as many as five reminder notices, including two sent by both email and postal mail and three more by email only.

Would a field period of more than six weeks allow service members doing combat duty sufficient time to participate? David Wilson, a political science professor and regular HuffPost Pollster contributor who is also a 20-year Army veteran -- including service in Operations Desert Storm and Iraqi Freedom -- says yes. He writes, via email:

Military personnel all have service related email addresses, as well as other online communications that they rely on to do their jobs effectively. Almost every soldier checks their email on a regular basis, regardless of whether they are deployed in hostile theaters of operation such as Iraq or Afghanistan, or are serving as reservists or national guardsmen who meet one weekend a month. Thus, it is virtually impossible for any randomly selected service member to not have known they were asked to participate.

(Note: Wilson supports repeal of "Don't Ask, Don't Tell" and posted his thoughts on the issue earlier today).

Moreover, because the Department of Defense drew their sample from Department of Defense personnel files, they had unusually rich data available (as compared to conventional telephone surveys) to monitor and correct for statistical bias that might result if some service members responded more readily than others. They actually divided their active duty sample into 200 separate subgroups based on data tracked in the personnel files: service level (Army, Navy, Marine Corps, Air Force, Coast Guard), pay grade (five levels), location (U.S. territory and overseas), family status (two levels) and -- most relevant here -- "duty occupation group" (combat and combat support).
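The figure of 200 subgroups follows directly from crossing the five characteristics listed above (5 x 5 x 2 x 2 x 2). A minimal Python sketch of that cross-classification is below. The labels for the pay grade and family status levels are placeholders, since the individual levels are not named here; only the number of levels in each dimension is taken from the description above.

```python
from itertools import product

service = ["Army", "Navy", "Marine Corps", "Air Force", "Coast Guard"]
pay_grade = [f"pay_grade_{i}" for i in range(1, 6)]     # placeholder labels, five levels
location = ["U.S. territory", "overseas"]
family_status = ["family_status_a", "family_status_b"]  # placeholder labels, two levels
duty_group = ["combat", "combat support"]

# Every combination of the five characteristics is one stratum (subgroup).
strata = list(product(service, pay_grade, location, family_status, duty_group))
print(len(strata))  # 200
```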

When the survey was complete, the DoD researchers weighted each subgroup* by the inverse of its response rate so that the full sample would be representative on all of these characteristics. They then weighted the full aggregated samples by eight variables to match "known demographic totals" calculated from the personnel files.
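The first weighting step can be illustrated with a small Python sketch. All of the numbers below are hypothetical and chosen only to make the arithmetic easy to follow; they are not taken from the DoD report, and the function names are illustrative. The sketch covers only the inverse-response-rate step, not the second adjustment to known demographic totals.

```python
# Hypothetical strata for illustration only; not figures from the DoD report.
strata = {
    "combat": {"sampled": 1000, "responded": 200},          # 20% response rate
    "combat support": {"sampled": 1000, "responded": 400},  # 40% response rate
}


def inverse_response_weights(strata):
    """Weight each stratum by 1 / response rate, i.e. sampled / responded."""
    return {name: s["sampled"] / s["responded"] for name, s in strata.items()}


def share_of_respondents(strata, group, weights=None):
    """Share of (optionally weighted) respondents who belong to the given stratum."""
    totals = {
        name: s["responded"] * (weights[name] if weights else 1.0)
        for name, s in strata.items()
    }
    return totals[group] / sum(totals.values())


weights = inverse_response_weights(strata)
unweighted = share_of_respondents(strata, "combat")
weighted = share_of_respondents(strata, "combat", weights)

print(weights)
print(f"combat share, unweighted: {unweighted:.1%}")
print(f"combat share, weighted:   {weighted:.1%}")
```

In this made-up example the combat stratum is half of the sample but only a third of the raw respondents. After weighting, it is restored to half of the weighted total.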

Put simply, if service members in combat areas were less likely to respond, that difference would not skew the representation of combat troops in the final weighted results. Further, the rich data from the personnel records gives the DoD researchers powerful tools to control for non-response bias, more powerful than what opinion pollsters use to weight the surveys that our political leaders frequently cite and procure for their own campaigns. McCain's concern that the Defense Department's DADT survey was not "fully representative," especially on the issue of those assigned to combat duty, is misplaced.

We sometimes overstate the precision of pre-election polls that are often more art than science, but the methodological rigor of the DoD survey puts it in a much different class. "The bottom line," David Wilson explains, "is that this is one of the most scientifically representative studies the military has ever done."

*According to the report, a few of the strata cells had to be combined due to a small number of responding cases.

Follow Mark Blumenthal and HuffPost Pollster on Twitter