What’s the difference between walking a neural network (a program loosely modeled on how the brain works) and walking your dog?

Neural networks don’t poop.

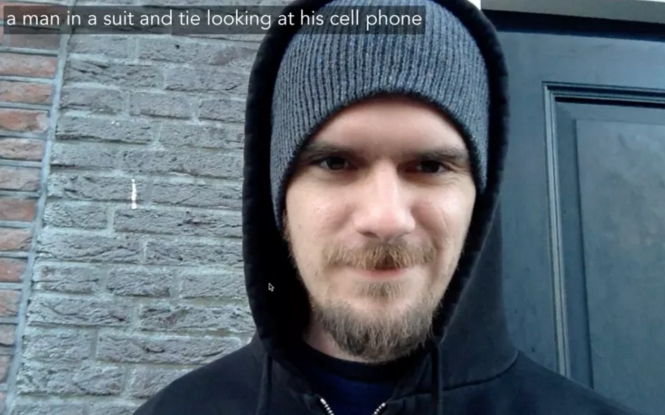

A few days ago, artist Kyle McDonald posted a video of himself taking an artificial neural network, running on his laptop, for a walk around Amsterdam. On that walk, the network recognized much of what the webcam saw and described those sights in real time.

McDonald tweaked a program that uses a neural network to create captions so that it’d run on footage from his webcam, and took his laptop out for a stroll “near the bridge at Damstraat and Oudezijds Voorburgwal in Amsterdam.”

Neural networks can be used for all sorts of things: they can invent bad recipes, teach computers chess, and recognize and tag images. Recognizing things in real time isn’t a new application for neural networks, but it’s cool to see how relatively effective this one is at the job. For comparison (on, admittedly, an odd sample) check out this neural network trying to recognize objects in the intro to Star Trek: The Next Generation:

McDonald’s neural network still makes a bunch of errors:

They’re often kinda funny:

And generally it does get a lot of things right, or close to right. Like bikes:

Or people:

The whole thing is well worth watching, if only to get a sense of what the program is good at recognizing, and how light, speed, and movement affect its work.
