The concept of the insanity defense dates back to ancient Greece and the Roman Empire. The idea has always been the same: Protect individuals from being held accountable for behavior they couldn't control. Yet there have been more than a few historical and recent instances of a judge or jury issuing a controversial "by reason of..." verdict. What was intended as a human rights effort has become a last-ditch way to save killers (though it didn't work for James Holmes).

The question that hangs in the air at these sorts of proceedings has always been the same: Is there a way to make determinations more scientific and less traditionally judicial?

Adam Shniderman, a criminal justice researcher at Texas Christian University, has been studying the role of neuroscience in the court system for several years now. He explains that neurological data and explanations don't easily translate into the world of lawyers and legal text.

Inverse spoke with Shniderman to learn more about how neuroscience is used in today's insanity defenses, and whether this is likely to change as the technology used to observe the brain gets better and better.

Can you give me a quick overview of how the role of neuroscience in the courts has changed over the years? Especially in the last few decades with new advances in technology.

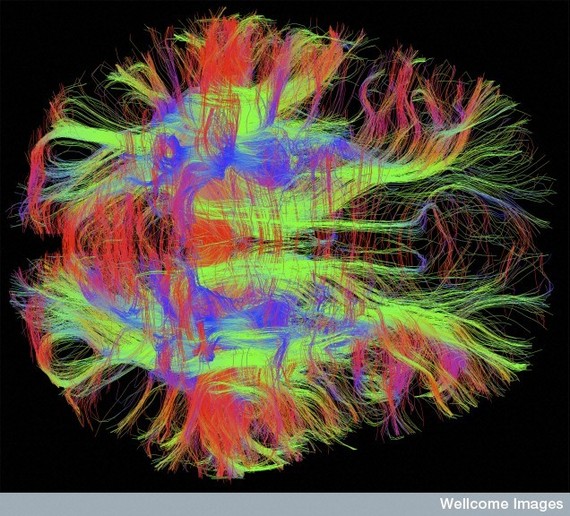

Obviously, [neuroscientific evidence] has become more widely used as brain-scanning technology has gotten better. Some of the scanning technology we use now, like functional MRI that measures blood oxygenation as a proxy for neurological activity, is relatively new within the last 20 years or so. The nature of brain scanning has changed, but the knowledge that the brain influences someone's actions is not new.

I don't know how familiar you are with the case of Charles Whitman. He was the Texas Belltower shooter in 1966 who killed over a dozen people on the campus of the University of Texas, Austin, after killing his mother. He sort of intuitively knew that something had gone wrong with him, so he asked in his suicide note that his brain be examined during his autopsy for irregularities. They actually found out that he had a tumor pressing on his frontal lobe, which may have been a significant factor in this aberrant behavior.

Neuroscience certainly played a growing role in courtrooms from then on. There was a big 2007 New York Times Magazine article called, "The Brain on the Stand," that got people very interested in the notion that the brain would radically change the way criminal cases are tried; that it would radically change the conception of why people do what they do.

But, you tend to find that this neuroscience is coupled with the study of psychopathy, and people aren't really sympathetic to psychopaths.

That makes sense.

The other, bigger problem is that the insanity defense isn't sort of what you might think of colloquially as insane. In most jurisdictions, it has to do with the knowledge of what's right versus wrong. So if you knew what you did was right or wrong at the time you did it, you aren't legally insane. So you tend to find that the very rare case where it is successful is like a paranoid schizophrenic who is completely in the state of delusion, and didn't know it was wrong because they thought they were killing ants, not people.

It must be extremely difficult to prove that sort of state of mind.

The insanity defense has little to do with the ability to sort of control your actions. We still haven't seen really much of an effect of neuroscience on the insanity defense -- in part because the insanity defense is rarely offered and even more rarely successful. Contrary to the popular myth that people plead insanity all the time and then it works and they're back out on the streets, it's just rarely offered because criminals don't really want to be labeled insane. And juries, because of the potential misconception that you get to walk away and there's no repercussions for people who are deemed legally insane, very rarely find anyone legally insane. So neuroscience has had less of an impact directly in the insanity defense.

Insanity plays a bigger role in sentencing than in convicting. The insanity defense is used more to mitigate punishment than to exculpate.

Do you see that moving in a different direction in any way in the next few years or in the next several years? Is the role of neuroscience in the insanity defense going to stay this way, with an emphasis in sentencing rather than determining guilt?

The champions of neuroscience said, 'This is great, look, neuroscience is in the Supreme Court!' Some of us sort of said, 'That's great, but it's really just a sort of crutch for a decision that they already wanted to come to on things we already knew.' There's a reason insurance companies don't lower your rates until you're 25; there's a reason for, you know, all sorts of things. You can't rent a car until you're 25 because we knew that brain development wasn't fully formed in minors and people that are under 25 made worse decisions, they're more impulsive, etc. I mean, sort of when I teach this stuff, I say, you know, 'How many of your parents know you make bad decisions 'cause you're teenagers?' Every parent knows that teenagers make bad decisions, so it wasn't really any novel insight that this neuroscience that was submitted by the APA to the Supreme Court in a brief really shed light on. But it was a way to sort of bolster their decision. At the time -- this was about 2005, I believe, maybe a couple years later -- they sort of used what was popular. Neuroscience was very popular.

I think so. In research I did with a colleague that was published in PLOS ONE, we looked at a phenomenon in social psychology called 'motivated reasoning,' which is where people sort of assimilate information in biased ways to come to desired conclusions.

So science is popular with juries. But don't they struggle to interpret it? After all, it's not like jurors can be expected to have an applicable background.

We made up a bunch of neuroscience studies. They weren't real, but they were plausible, about the death penalty and about abortion. We basically showed participants how these supposed neuroscience studies backed up the notion that either the death penalty was or was not a more effective deterrent to crime than life without parole or any other sentence. And we asked people to rate the studies.

We looked at whether the participants' prior attitudes were a significant predictor of how they dealt with the neuroscience data, and it turned out that they were. People who were pro-death penalty rated the study really well when it said that the death penalty was a deterrent and really poorly when it said it wasn't a deterrent. People who were anti-death penalty -- sort of a flip. When we said the death penalty was a deterrent and the neuroscience data supported this, they said, 'Oh, that's bad science, that's biased reporting, the researcher has an agenda,' and all this stuff.

All of this is to say that people's prior attitudes seem to be one of the biggest determinants in how they evaluate neuroscience. If they agree that criminals are the worst and criminals should be put to death and all of this kind of very harsh-on-crime attitude, then if you give them neuroscience that says, 'Well, he's really not that responsible. He's not that bad a guy. It's his brain that made him do it,' they're simply going to say, 'Aw, that's bad science. That's BS, I don't trust it, it's biased. I know what I know. Your science is flawed.'

In some sense, neuroscience is still just telling us a lot of what we already knew from psychology and just from common sense. In Graham v. Florida, the Supreme Court said, "Look, neuroscience tells us that the brain isn't fully formed in minors and therefore they're not of the most, you know, culpable class of offenders. So we can't sentence them to life without parole for non-homicide crimes because that's sort of reserved for the worst of the worst of offenders. And these people can be changed because their brain still allows them to change."

The champions of neuroscience said, 'This is great! Neuroscience is in the Supreme Court!' Some of us instead said, 'That's great, but it's really just a sort of crutch for a decision that they already wanted to come to on things we already knew.' There's a reason insurance companies don't lower your rates until you're 25. You can't rent a car until you're 25 because we knew that brain development wasn't fully formed in minors and people that are under 25 made worse decisions. Every parent knows that teenagers make bad decisions.

When it comes to neuroscientific evidence being presented in court, this is almost exclusively data in terms of imaging, correct? Or are there other ways to gauge brain activity?

Where neuropsychology is involved in the court system, some psychologists do scanning, while others have tests where the individual sits down and does tasks and it'll tell something about the functioning of their brain. For instance, if you do poorly on one task, it tells the psychologist you have problems in say your frontal lobe or whatever. If you do poorly on another task, maybe it's on facial recognition of expressions and that tells them something about your inability to relate to expressions of emotion or something that tells about a different part of your brain.

Scanning has been the focus, but there have been instances where people who have done scans have been allowed to testify but the scans themselves have not been admitted. This is in part because of the seductive allure of neuroimages. One study from many years ago showed how neuroimages have this fancy effect on people. It bamboozles them. That study was never replicated, and the results were perhaps just due to the participant sample that one experiment got. But it was causing judges to be wary of allowing the images themselves, even when they did allow the expert to testify at sentencing.

In the Brian Dugan case in Chicago, psychologist Kent Kiehl was brought in to testify about Dugan's brain activity. He was only allowed to use pictures of brains with x's drawn on areas where he found lower activity in Brian Dugan's brain, because the judge was worried that if he let him bring in multi-color images from the fMRI, the jury would be confused and just sort of agree with Kiehl, and all the jurors would just forget their responsibility to weigh all the evidence.

So it's sort of a mix. I had some unpublished evidence that suggested that imaging wasn't really key -- it was really the analysis at the brain level rather than at the behavioral level. There's a belief that at the behavioral level you can fool a psychologist, but it's harder to fool a psychologist at the brain level, even if they're not conducting a scan.

If the tools used to measure brain activity or track what's going on inside a suspect's or defendant's head -- if all of that were to converge into a kind of a simpler and more universal method that the legal system can trust, would we finally be able to kind of come to a place where we can determine whether a criminal is sane or insane? Or are there too many factors and ambiguities in play?

I think there are always going to be ambiguities, for a number of reasons. Again, you come back to the sort of legal definition of insanity. A scan is never going to really answer the question of right from wrong. I don't think anyone anymore believes the brain is so mechanistic that a brain scan is going to tell you, "Well, he absolutely was bound and determined to do this because he had lower activity in his prefrontal cortex or in his frontal lobe."

You are maybe slightly more likely to engage in antisocial conduct in a given situation if your brain has less activity in key regions. There's a professor at the University of California, Irvine, where I did my Ph.D., named James Fallon. He actually did an opening of an episode of Criminal Minds where he was giving a lecture on psychopaths. He scanned his brain and his brain looks exactly like a psychopath's. And he has other characteristics that fit. He's got decreased prefrontal cortex activity; he's got a lower resting heart rate, and all these sorts of things that are supposedly markers of things that predispose you to violence. But he's not violent. I believe he's married and has kids, and he was a professor at UCI for decades until he retired. And he's sort of still there, teaching classes for a bit of extra fun and doing some research. But this guy's never really had any run-ins.

The psychologist Adrian Raine found out he has sort of the same markers that suggest, from his research, that he should be predisposed to antisocial or criminal behavior. But, again, he's a college professor. He's not antisocial, he isn't engaging in a life of crime or anything like that. It's all so probabilistic. So many other factors go into whether somebody is going to commit a crime or not that we could never find ourselves in a type of Minority Report situation where you can scan somebody and tell whether they'll commit a crime.

We'll never be able to scan someone after the fact, either, and say, "Well, absolutely, his brain is what made him do it," because the question of criminal behavior is so much more complex than that. It's sociological, it's economic, it's perhaps brain- and genetic-based, etc. I don't think you're ever going to be able to say, "Yes, he can never overcome this impulse," because, again, it's probabilistic. There's going to be a guy out there with the same brain chemistry who will have overcome all the impulses and led a completely productive life.

The last thing I'll point out is research that my colleague Cory Clark published in 2014. It showed that people still believe in free will despite evidence to the contrary, in part because of the desire to punish. She found that people believe in free will not just because of free will in the abstract, but because it helps them justify their desire to punish people for bad conduct.

It comes back to motivated reasoning -- the notion that people cling to things that help them justify actions that they want to take. I think people are going to cling to the notion that somebody still had a choice in part because we want to punish people for bad actions.

Photos via Zeynep M. Saygin, McGovern Institute, MIT / Wellcome Images
