The New York City Police Department’s facial recognition program has courted controversy from the moment it was created in 2011. Civil libertarians and residents of the city didn’t want the largest police department in the country to become an all-seeing panopticon. But the NYPD has always insisted that suspect photos are checked only against mugshots: not against images from other government agencies like the Department of Motor Vehicles, not against traffic camera images or security footage, and certainly not against pictures from social media.

In two recent cases, however, official documents from the NYPD Facial Information Section (FIS) obtained by HuffPost indicate that social media photos were used to generate a match for a suspect who was later arrested and charged.

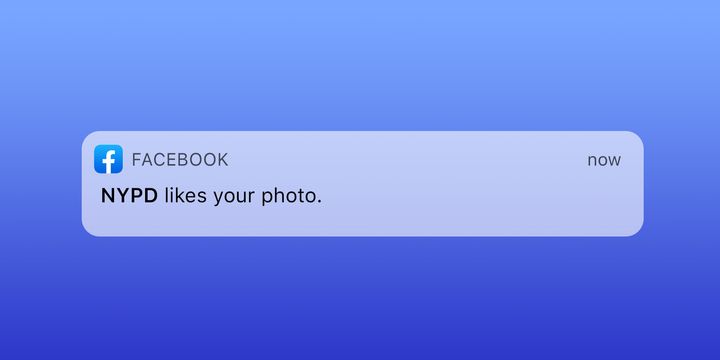

If the department is indeed placing social media images into its facial recognition database, New Yorkers who post photos on Facebook or Instagram might be at risk of becoming suspects in a criminal investigation, since the NYPD uses facial recognition hits as starting points for criminal probes. Given the well-known inaccuracy of facial recognition software, both the innocent and the guilty could be swept into the department’s digital dragnet.

In one FIS document obtained by HuffPost for a 2016 assault where a juvenile was charged, the “search results section” has a box for “source of image” — and it says “social media.” In another field on the form for “social media,” an investigator who filled it out noted “(FB)” — which further suggests the source of the photo used to generate a match in this case was Facebook. Jerome Greco, the defendant’s public defender from the Legal Aid Society, said that the documents were turned over during the normal course of discovery.

Greco, who specializes in digital forensics investigation at Legal Aid, said that typically the facial recognition unit forms included the term “photomanager” in the source of image field, to indicate that it came from the NYPD’s mugshot database.

The photo attached to the search results was a selfie of a person with a hoodie up who is leaning on a couch, according to Greco. “We believe that this was taken from a social media profile,” he said.

A similar situation occurred recently in the Bronx. Sidney Thaxter, a Bronx Defenders attorney and digital forensics specialist, was defending a client charged with robbery. Thaxter said the Bronx district attorney’s office turned over a package of discovery from the NYPD that included the same FIS search results report: “SOCIAL MEDIA/FACEBOOK” was listed as the source of image under the search results.

It’s not clear how the social media images would be making their way into the facial recognition database, though it wouldn’t be the first time that unauthorized pictures turned up in the FIS system. In August 2019, I reported in Medium’s OneZero that in another one of Bronx Defenders’ cases a sealed mugshot was discovered in the facial recognition database — a clear violation of a New York state law which dictates that sealed photos be destroyed or returned to the subject.

These documents appear to be straightforward proof the NYPD is inputting social media images into its facial recognition database — though the department denies it. “The limited information that is visible on these forms indicates that FIS did not utilize facial recognition technology to identify the suspects in these cases. Instead, they were able to determine the identity of the suspect using information that was available in publicly accessible social media accounts,” said Devora Kaye, an NYPD spokesperson.

There is a separate department within the NYPD, the Social Media Analysis & Research Team (SMART), which “analyzes social media for chatter, videos and relative information in regards to active investigations.” And department officials have said in the past that once the FIS generates a match, an “investigator proceeds with further research, including an examination of social media and other open-source images,” as then-NYPD commissioner James O’Neill wrote in The New York Times last year.

But the public defenders who have seen these documents sharply dispute the NYPD explanation that these social media matches were generated manually — they say the paperwork makes clear it was an FIS database match.

“The materials labeled the database photo as being from social media,” said Thaxter. “So while it may be true that the photograph was originally obtained through a publicly accessible account, the photo would have had to have been pulled from that account and placed in the database to be located using FIS — which is exactly what the paperwork says happened.”

“It tells me that their database of photos does not just contain photos of individuals that have been arrested or convicted,” he added. “Somebody is making a decision to put individual photos into this database.”

Greco agreed. “It doesn’t make sense that FIS — the unit dedicated to facial recognition — is gathering social media evidence instead of doing facial recognition when there’s an entire unit known as SMART that does that exact work,” he said.

Greco provided HuffPost an intelligence report from the SMART team that he received in another case. In this case, the form indicates that the SMART unit was contacted by a detective to conduct a “social media canvas” of an “FB user.” In a different juvenile case where social media was searched, the department’s Juvenile Justice Division provided Thaxter a form showing that the New York City law department, the city’s legal division that prosecutes juveniles, conducted a “social media inquiry” on a person of interest. These would be the typical ways a SMART search would be communicated in paperwork — not on an FIS form.

Neither Thaxter nor Greco got the chance to ask more questions about facial recognition in these two cases, because both cases were dismissed. Thaxter noted that under the state’s previous restrictive discovery laws, which did not require the prosecution to provide evidence to the defense until close to trial, roughly 90% of his cases never reached the discovery phase. However, on Jan. 1, 2020, a new law went into effect in New York state that will force the police and prosecutors to turn over evidence much earlier in cases.

Thaxter expects that his office will be seeing a lot more cases where they’ll learn how facial recognition was used to investigate their clients.

These revelations are especially alarming in the context of recent reports that rogue NYPD officers are using Clearview AI, a highly controversial facial recognition software, on their phones. The department has not sanctioned that activity and may punish the officers, but at least some police officers are clearly disinclined to respect basic privacy rights and willing to bend or break safeguards around the use of facial recognition software.

The NYPD has long resisted calls for more transparency around its facial recognition program. And as cities around the country are having a debate over police use of facial recognition — with cities like San Francisco, Oakland and the Boston suburb of Somerville, Massachusetts, outright banning it — New York City and the NYPD have fought to keep information about its facial recognition program secret.

Georgetown’s Center on Privacy and Technology has for years tried to get the complete picture of the NYPD’s facial recognition program, and filed a lawsuit over it. As a result, in late 2018, the NYPD was forced to turn over about 3,700 pages on its program.

HuffPost asked Clare Garvie — one of the legal researchers leading Georgetown’s investigation into the NYPD’s use of facial recognition — about the documents in question in the two cases from Legal Aid and Bronx Defenders. After reviewing the records, she said, “The question becomes, why is the Facial Information Unit search returning a social media photo when, as far as we’re aware, the database is made up of mugshots and pistol identification photos?”

The public might also learn more about exactly what information is being fed into FIS if the New York City Council passes the Public Oversight of Surveillance Technology (POST) Act, a bill under consideration that would require the city and the police department to tell the public more about their policies and practices with facial recognition technology. More than half of the New York City Council supports the POST Act. As part of that bill, advocates have pushed for lawmakers to force the department to disclose all the ways that it is monitoring social media.

The NYPD has been extremely combative in response to these proposed reforms; the department has called the POST Act “insane.” At the first city council hearing on the proposed law, the police department continued to lobby against it.

Still, scrutiny of the NYPD over these tools and how useful they are persists. Earlier this month, New York State Senator Brad Hoylman (D) introduced a bill that would outright ban the use of facial recognition and other biometric tools by police in the state. In the bill, Hoylman cites several recent troubling reports on the NYPD’s use of facial recognition, including the presence of juvenile photos in the database.

Public defenders like Thaxter believe that the city needs to consider whether the police should have access to facial recognition at all.

“Facial recognition is military-grade technology that has proven to be better at political and social suppression than ensuring public safety,” he said. “Do we want to give this technology to a domestic police force like the NYPD or do we want to put it into the category of other military weapons like rocket launchers that would cause more harm than good and be used disproportionately against our poor and indigent populations?”