When Reddit banned the 40,000-member group r/incels last year, updating its terms of service to prohibit content that “encourages, glorifies, incites or calls for violence,” the company was widely praised for cracking down on the poisonous misogyny that lived on its platform.

Soon after r/incels came down, the subreddit r/braincels flared up with posts bragging about sexually assaulting women and gleeful discussions about hurting or killing women. Reddit, however, doesn’t consider r/braincels to be in violation of company policy, even though messages on the page appear to encourage, glorify, incite and call for violence.

“At this time, r/braincels is not in violation of our policy,” a Reddit spokesperson told HuffPost.

The question of what companies such as Reddit, Facebook and Twitter allow on their platforms was reignited this year when a man drove a van into a crowd of pedestrians in Toronto, killing eight women and two men. It’s the latest massacre by a man seemingly radicalized in one of the many online communities where violence against women is routinely celebrated. Experts on extremism say it won’t be the last.

“It goes beyond simple misogyny when men start to rally around those that are committing terrorist attacks, or they start dehumanizing women to a level that is a precursor to violence,” said Ludovica Di Giorgi, an expert in far-right extremism and jihadism online.

The suspect in the Toronto attack, 25-year-old Alek Minassian, posted a note online shortly before the rampage, declaring “The Incel Rebellion has already begun!” His Facebook post cast incels ― men who identify as involuntarily celibate ― and their major networks, such as r/braincels and the website Incels.me, into the media spotlight. Minassian also praised Elliot Rodger, a California man who killed himself and six others in 2014 after encouraging members of an online forum to “start envisioning a world where WOMEN FEAR YOU.”

Rodger has become a figure of worship in online groups for incels and a source of inspiration for other mass shooters. Christopher Harper-Mercer, a 26-year-old student in Oregon, gunned down nine people in 2015 after lamenting his virginity and reportedly posting a message online wishing readers “an enjoyable Elliot Rodger day.” Nikolas Cruz, the teenager accused of fatally shooting 17 people at a Florida high school in February, also praised Rodger online.

Platform companies are doing little to police the communities where rape and other kinds of violence against women are encouraged and glorified, and where there are repeated calls for violence in the form of “incel uprisings.” (This article includes unedited quotes from some of these communities, which include endorsement of rape and racial slurs that readers may find disturbing.)

Di Giorgi and others who track extremist groups have observed that violent misogynist speech online appears to feed off the growth of the extreme alt-right and white supremacy movements.

“In recent years with the growth of internet communities, it’s kind of starting to overlap so much with other types of extremism,” said Di Giorgi. “People who wouldn’t have necessarily been exposed to these sort of beliefs offline are now being radicalized because of the communities they engage with online.”

Mainstreaming Misogyny

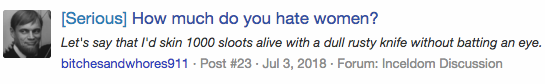

After r/incels shut down on Nov. 7, 2017, many incels migrated to the subreddit r/braincels as well as Incels.me, which was created two days later. Since then, Incels.me has hosted more than 1 million messages, including posts containing advice on how to get away with rape and stories of stalking and groping women. In response to a recent thread titled “Whores Deserve Rape,” one member ― whose profile, like many others, displays a picture of Rodger ― wrote: “Women enjoy rape ... I think women rather deserve pain, and miserable death.”

Such sentiments are not exclusive to fringe incel networks. Twitter, which has frequently been criticized for not doing enough to combat hate speech on its platform, has seemingly done little to curb brazen discussions about violence against women.

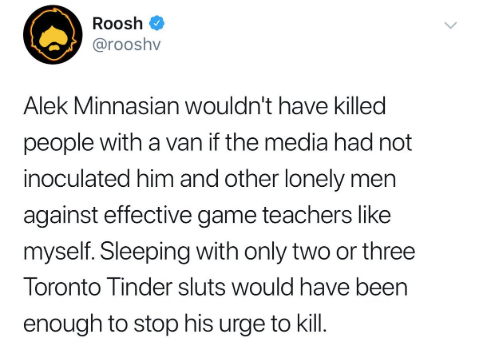

Daryush “Roosh” Valizadeh, a notorious “neo-masculinist” blogger who has more than 38,000 followers on his verified Twitter account, recently suggested that so-called “game teachers” like himself could have prevented the massacre in Toronto by helping the alleged killer have sex with “Tinder sluts.” He also implied women are responsible for incel murders.

“If you don’t want innocent people to be victims of incel rage, you must sleep with nice guys,” Valizadeh told a woman on Twitter in May. In June, he added: “The growing incel epidemic, which is caused by allowing females choice in who to have pre-marital sex with ... will cause more mental suffering for men than any modern woman has to experience.”

In his blog and self-published book series, “Bang,” where he coaches men on subjects such as how to avoid rape charges, Valizadeh boasts of sleeping with numerous women who were either barely conscious, too drunk to consent to sex, or who repeatedly refused his advances (“No means no — until it means yes,” according to Valizadeh, who denies he’s a rapist). He also tweets photos of seemingly random women with the caption “Would you bang?” These tweets draw in sexist, crude and sometimes violent responses, and seem immune to Twitter’s reporting system for abusive content.

Australian politicians debated banning Valizadeh from their country after he advocated for legalizing rape on private property, which he called “learning experiences for the poorly trained woman.” But he can still roam freely on Twitter.

When asked how Valizadeh could still have a verified account, Twitter declined to comment.

Facebook, no stranger to criticism over hateful and violent speech on its platform, repeatedly declined to take down a group called “If she puts you in the friend zone, you put her in the RAPE zone!” After initial claims that the group didn’t violate company policy, Facebook quietly removed it in April.

Facebook declined to disclose how many reports it had received before taking the group down, and would not say how long it was active. A spokesperson said the company is “investigating why it wasn’t removed sooner.”

Violent misogynist rhetoric is also rampant in Kekistan, a fictional internet country that is home to countless right-wing trolls and white nationalists. Kekistan has proliferated across Facebook and Reddit in massive groups where members frequently engage in cyber-harassment and so-called “shitposting,” often targeting women. Kekistan has direct ties to the incel movement: Its self-declared president, Gordan Hurd, known online as “Big Man Tyrone,” recently filmed a promotional video for incel.co, a lewd, now-defunct incel website that he refers to on camera as “the holy land.”

Free Speech vs. Hate Speech

Misogyny on major platforms often “gets a pass” in ways that other bigoted and hateful content does not, according to Keegan Hankes, a senior research analyst at the Southern Poverty Law Center, which monitors hate groups and recently added “male supremacy” to the list of extremist ideologies it tracks.

“This happens because a lot of misogyny tends to fall under this umbrella of being politically incorrect, so for some reason, it gets less enforcement,” Hankes said. “That’s a really important part of this whole conversation about how these extreme online ecosystems exist and what can happen when they’re left unchecked.”

At least 56 percent of American men believe that it is more important for people to be able to speak their minds freely than for people to feel safe online, according to a recent Pew Research Center study. Only 36 percent of American women, who are far more likely to experience gender-based harassment online, agree with this view.

Platform companies don’t have a legal responsibility to police or regulate content in the United States. The Communications Decency Act shields them from liability for most user-generated content, leaving them free to decide what kind of content they will allow on their sites. But drawing a line between free speech and hate speech has proven to be an evolving struggle.

In 2012, a Twitter executive described the company as the “free speech wing of the free speech party.” But as abuse and harassment have become synonymous with open platforms, companies such as Facebook, Twitter and Google have been forced to do more to combat the hate speech they host and amplify.

Some experts say the free speech argument is a fallacy and argue that those companies should be held to account for the content hosted on their platforms.

“When it’s a private company and not the government that’s dictating speech, it’s not a First Amendment issue. Those companies can censor as much as they want,” Carrie Goldberg, a Brooklyn-based victims’ rights attorney, told HuffPost. “We have to start banding together and demanding that our platforms take action and that law enforcement take action as well.”

Who Polices The Internet?

In the U.S., law enforcement agencies don’t always have the authority or bandwidth to monitor hate groups for threats of violence and other crimes.

“The FBI cannot initiate an investigation based solely on one’s membership of a group or their exercise of First Amendment rights,” a spokesperson for the agency said in a statement.

Many countries in Europe, however, prohibit hate speech and, in recent years, the European Union has threatened platform companies with punitive legislation unless they do more to police hate speech and extremist content. As a result, Facebook, Twitter and Google-owned YouTube have adjusted their policies to shorten their response time to reports from users, among other things.

Under pressure from the EU, internet giants also began a collaborative initiative last year to fight online extremism and terrorist propaganda. But so far, little has been done to combat radical misogyny.

“Major platforms’ latest efforts have mostly been focused on what are conceived to be traditional forms of violent extremism and terrorism. But there is no comprehensive policy in place for addressing other forms of online extremism, such as radical misogyny,” said Di Giorgi. “That’s definitely a problem.”

Di Giorgi works for Moonshot CVE, a Google-backed startup that works with internet companies to counter violent extremism and online radicalization. One initiative known as the Redirect Method reaches potential ISIS recruits by tracking keywords and searches for phrases like “how to join ISIS” in English and Arabic. It then redirects searches to anti-ISIS videos ― a method Di Giorgi believes could be used to counter other kinds of extremism online, including radical misogyny.

Playing Whack-A-Mole

After Reddit shut down several hate-filled pages in 2015, a subsequent study analyzed whether the removals actually diminished hate speech on the site or simply moved it elsewhere. Researchers concluded that many members of the banned groups left Reddit and that those who stayed significantly reduced their use of hate speech.

But in other cases, trying to crack down on these hives of radicalization can seem like an endless game of whack-a-mole ― as one website comes down, two more pop up.

PUAHate.com, a forum where Rodger found a sympathetic audience for his violent misogynist rants, came down after his deadly rampage. Three days after the killings, however, SlutHate.com was born. Posts such as “Rape a nigger bitch” and “If a slut blacks out drunk, she deserves to get raped” are common on the site.

Similarly, just one day after domain registrar Namecheap removed a network called Incel.life, Incelocalypse.today sprang to life, hosting much of the same hate-filled content, including a lengthy post titled “Even if you could get pussy from a willing female, you should still want to rape girls.” The site went offline in late May, reappeared with a different domain host days later, then recently went dark again. A new site filled the void within days.

As HuffPost reported in May, Incelocalypse.today was run by congressional candidate Nathan Larson, a pro-rape pedophile who believes women should be classified as “property, initially of their fathers and later of their husbands.”

Experts say that although these groups are resilient, pushing their content off popular platforms and onto lesser-known websites has value.

“The rhetoric in the incel and male supremacy communities is some of the most violent and vile that you’ll see,” said Hankes. “You can’t stop these communities from existing ― they’re going to find places to congregate online [as] there will always be dark corners of the internet ― but it’s very important to make sure it’s harder to find them.”