As the general election season gets underway, the flood of polls is likely to continue -- which means the debate over which polls are credible, which methods are best and why will rage on.

When you take a closer look at the way various polls are conducted, there's no one method that stands out as inarguably better than all the rest. Some people in the polling industry like to claim that polls done by live interviewers using randomly selected phone numbers are more accurate than surveys that use automated telephone technology or surveys conducted over the Internet. But research shows that’s not necessarily true.

Background

The polling field has struggled with changes in technology that have rendered random digit dial telephone interviewing -- in other words, randomly calling phone numbers and asking people to take your poll -- less reliable than it used to be. Random digit dialing was once considered the “gold standard” of polling methods, since it theoretically ensured you'd reach a cross-section of people that was representative of the whole population.

The gold standard is no more, however. Some of the issues complicating the phone-polling process these days include declining response rates, people's growing reliance on mobile phones and Federal Communications Commission rules that ban automated calling technology in many cases.

Internet polling has emerged as an alternative. But there’s no way to randomly select people from the Internet, which means Web polls rely on “panels” of people who have been recruited and who signed up to take surveys online. People who volunteer to participate in polls aren't necessarily representative of all Americans. That means the samples in these studies have to be carefully selected, weighted and adjusted to match the overall population and account for any possible bias. (Of course, the vast majority of telephone polls are also weighted to match the population.) And many people who volunteer to participate in Web surveys end up not following through on their commitment.
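To make the weighting step concrete, here is a minimal sketch in Python of one simple approach, often called post-stratification: each respondent is weighted by the ratio of their group's share of the population to its share of the sample. Every number and name below (the age groups, the respondent counts, the support levels, the function names) is hypothetical and chosen only for illustration. Real pollsters typically weight on several variables at once, and nothing here describes any particular pollster's method.

```python
# Hypothetical illustration of post-stratification weighting.
# All figures are made up; they do not come from any real poll.

# Assumed share of each age group in the full population.
population_share = {"18-34": 0.30, "35-64": 0.50, "65+": 0.20}

# A volunteer panel that skews young (hypothetical respondent counts).
sample_counts = {"18-34": 500, "35-64": 400, "65+": 100}

# Hypothetical share of each group that backs Candidate A.
support_for_a = {"18-34": 0.40, "35-64": 0.50, "65+": 0.60}


def poststratification_weights(counts, pop_share):
    """Weight = group's population share / group's share of the sample."""
    total = sum(counts.values())
    return {g: pop_share[g] / (counts[g] / total) for g in counts}


def weighted_support(counts, support, weights):
    """Weighted average of support across all respondents."""
    numerator = sum(counts[g] * weights[g] * support[g] for g in counts)
    denominator = sum(counts[g] * weights[g] for g in counts)
    return numerator / denominator


weights = poststratification_weights(sample_counts, population_share)
equal_weights = {g: 1.0 for g in sample_counts}

unweighted = weighted_support(sample_counts, support_for_a, equal_weights)
weighted = weighted_support(sample_counts, support_for_a, weights)

print(f"Unweighted support for A: {unweighted:.0%}")
print(f"Weighted support for A:   {weighted:.0%}")
```

In this made-up panel, older respondents are underrepresented and lean toward Candidate A, so weighting them up moves the estimate from 46 percent to 49 percent. Weighting can only correct for the characteristics a pollster chooses to adjust on, which is why the selection and adjustment steps matter so much.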

The research

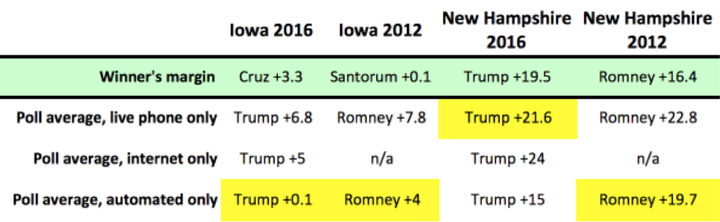

For the recent American Association for Public Opinion Research conference, the HuffPost Pollster team analyzed how accurate the polls were in the Iowa caucuses and the New Hampshire primary in 2016, compared to those in 2012. (The comparison was restricted to the Republican races in Iowa and New Hampshire, since there was no competitive Democratic primary in 2012.) Instead of looking at the accuracy of individual polls, we looked at the accuracy of the polling averages for each type of poll.

We looked at the three most common polling methods: live telephone, Internet and automated phone (also known as “interactive voice response,” or IVR). Automated technology involves a recorded voice reading questions to the respondents, who record their answers by pressing buttons on their phones. Due to FCC regulations, these types of polls can’t include mobile phones. The automated category includes polls that were mostly automated but that used some Internet sampling to supplement their landline calls.

The various types of polling offered very different looks at the race. Live telephone polls in Iowa this year indicated that Donald Trump was up by nearly 7 points in the GOP contest. Automated polls, on the other hand, seemed to show that the race was tied. In the end, Sen. Ted Cruz (R-Texas) won the GOP's Iowa caucus by 3 points.
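One common way to put a number on those misses is to compare the margin the polling average showed with the margin in the actual result. The sketch below does that arithmetic in Python using only the rounded figures quoted above (live polls at roughly Trump +7, automated polls roughly tied, Cruz winning by about 3). The variable and function names are our own, and because the inputs are rounded, the outputs are approximate.

```python
# Margins are Trump minus Cruz, in percentage points.
# Inputs are the rounded figures from the text, so results are approximate.
poll_average_margin = {
    "live telephone": 7.0,  # "nearly 7 points" for Trump
    "automated": 0.0,       # "tied"
}
actual_margin = -3.0        # Cruz won by about 3, so Trump minus Cruz is -3


def margin_error(predicted, actual):
    """Signed error: positive means the polls overstated Trump's margin."""
    return predicted - actual


errors = {
    mode: margin_error(margin, actual_margin)
    for mode, margin in poll_average_margin.items()
}

for mode, err in errors.items():
    print(f"{mode}: off by about {abs(err):.0f} points on the margin")
```

On these rounded numbers, the live telephone average missed the final Iowa margin by about 10 points and the automated average by about 3, both in Trump's favor.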

In 2012, automated polling did well in Iowa and New Hampshire, predicting the outcomes of those contests slightly better than live telephone polls did. (There weren’t any Internet polls in those states that year.) But in 2016, live telephone polls did the best job of predicting the outcome in New Hampshire's Republican primary. Internet polls overestimated Trump’s advantage in the Granite State, while automated polls underestimated the business mogul.

Another presentation at the conference looked at the accuracy of individual polls in presidential, gubernatorial and Senate races from 2008 to 2014. That analysis turned up similar results. Jennifer Dineen of the University of Connecticut and Andrew Smith and Zachary Azem of the University of New Hampshire found that not only did the accuracy of different types of polls vary by year, it also varied according to election type. In 2014, for example, online polls were the most accurate at predicting gubernatorial races, but live telephone polls were more accurate in predicting Senate races.

What it means

The conclusion seems to be that we can’t predict in advance which polling method will prove most reliable in a given election. Random digit dialing, the onetime “gold standard,” doesn’t seem to be any more consistently accurate than other approaches.

One thing this research doesn't get into is how, exactly, the various polls went about identifying likely voters -- which could be an important point. Many pollsters don’t disclose the specifics of how they select likely voters, but thanks to a recent Pew report, we know that those choices can affect a poll's results.

Maybe we should be focusing more on which polls are conceived and conducted with appropriate care and rigor, rather than which ones are done by phone and which online. Quality matters, as Nate Silver demonstrates in his own pollster ratings -- which depend, in large part, on a pollster’s long-term accuracy. The more meticulous pollsters are the ones who rise to the top, regardless of how they collect the data.

Janie Velencia and Ariel Edwards-Levy contributed reporting.