Welcome to the second Precursor To Dystopia column.

What do old men in adult diapers, rape, and the breaking news cycle have in common? Well, aside from each being gross in their own regards, they’re all in some way manipulated and generated by rogue algorithms for your consumption:

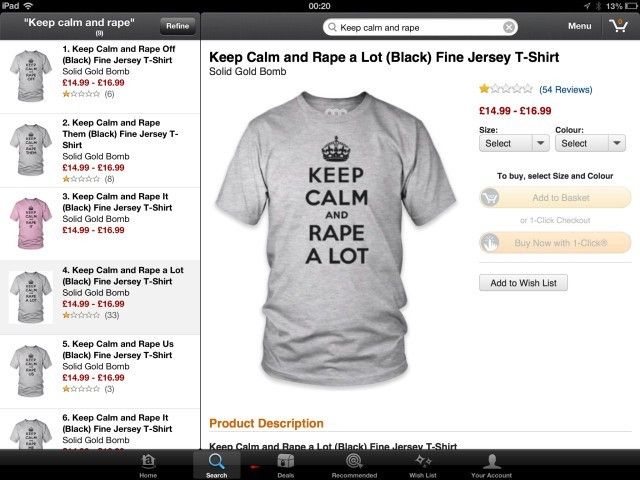

This is a real product. You can really buy it, and over 100,000 others like it, all procedurally generated and posted for sale by a robot. Like this one:

And this:

But it’s not just crazy iPhone covers. It’s also news, social media, YouTube, and just about every other aspect of your daily life that you ingest through a screen. There’s no doubt: robots have infiltrated, proliferated, and taken over our social media platforms.

So far, text-only bots have managed to add massive amounts of noise to the signal of real news disseminated throughout our social platforms. The result of just these little text bots has been a) the weakening of the information chain, b) the proliferation of fake, completely made-up “news” within certain subcultures of the far left and far right, and c) an overall decline in usability and increase in frustration in simply using these platforms.

So what happens when the bots (completely capable of generating headlines for thinkpieces on their own to manipulate political elections, news cycles, and others) collude with the sweeping trend of algorithmic voice and video synthesis?

Pure fucking chaos, is what. We no longer know what is real and what is fake. But worse, our fatigue is growing to the point that we no longer even care.

Note: there’s “we” as in “We the people,” and then there’s WE. Us, the folks who have been on the internet for years and seen all that it has ever been. WE can tell, with reasonable success, when something is a real headline and when it links to garbage. WE can tell when something is journalism and when it’s just opinions wrapped in loose connections to tiny nuggets of fact. WE know bullshit from shinola. And even WE are unable to find the real in all of this emerging fakeness.

I want to say it’s not WE that I worry about, but it is. It’s not the moms and the dads who joined Facebook to see what the kids are up to that I am most scared for, even though they’re the ones who, despite telling us 20 years ago that you can’t believe anything on the internet, quote FreedomEagleNews.Info on every single thinkpiece its Russian agents, assisted by robots, generate. They have always fallen for anything, and their taking fake news that feeds into their confirmation biases to the polls with them is nothing new. Never in the history of cognitive bias has real information swayed an entrenched viewpoint. They “believe” this fake crap only because they “believe” their viewpoint so deeply. Facts are meaningless, and that will always be the case. (See: all religions ever.)

And when you consolidate your sources for information to one or two platforms which can be easily manipulated and/or bought by bad people to mess with you... Well, you have a pretty solid recipe for dystopia.

Politicians, governments, bad actors, and hucksters are using this uptick in noise to smother the signals we really need to determine the course of our future. In other words: when a truth comes out that should have a major consequence, there is a risk, if not an inevitability, that that truth will be fuzzed and made either quieter or altogether irrelevant by massive swaths of fake, automated noise that looks REAL ENOUGH for the typical person to just accept as part of the cost of doing business in society.

- “Everything’s corrupt, so why bother.”

- “Your side has bad stuff too, see (any number of fake news articles posted and disseminated as “truth” within the verticals of political extremism)”

- “The media lies to you, I only trust my sources.”

And so on.

When you combine this very normal, very human element with emerging technologies, things get downright scary. This week, Nvidia released a video of hundreds of unreal yet totally believable fake celebrity faces, all generated by AI:

NONE OF THESE PEOPLE ACTUALLY EXIST. And you have to admit, you can believe each and every one is some celebrity you may or may not have heard of.

You may remember another video that popped up last year of an AI generating a video of a very real Obama giving a very not-real speech by sampling, cutting, and matching the video of his mouth from sample speeches and pairing it with the linguistic patterns of other speeches he’s given.

What happens when Nvidia’s AI face generator, the Obama lip-sync tech, and something like Lyrebird are all used to create very real looking, very real sounding, but very not real “Democratic Senators” or “Republican Congresspersons” saying completely off-the-wall shit that no one will verify, fact check, or even challenge because hey, it looks real, and bad shit happens every single minute of every single day, and I’m just too tired to care?

And what does any of this have to do with old men in adult diapers on phone cases, or shirts that promote keeping calm and raping a lot? Everything, if you’re looking at the tech that brought those things to life.

Yep, they made this.

And here’s this one again, and no I’m not sorry

There’s one very important fact you should know about the items pictured above: neither of the companies selling these items was aware of their existence.

The company that churned out over 100,000 iPhone cases with bizarre and often borderline gross images on them, all selected, built, and listed on Amazon by an AI, had no real clue what images they were putting on cases. They just used a script to pull popular search terms from stock photo libraries, create the image, and post it for sale. And the company that made the Keep Calm And Rape A Lot shirt had no clue it was for sale in their shop. And what’s really challenging to accept is that these products actually sold in the hundreds of thousands of dollars. If you sell on Amazon, they competed for your dollar. In order to keep up, you have to compete with the robots.
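For the curious, here’s roughly what a script like that might look like. This is a guess on my part, not either company’s actual code: the `Listing` class, the `generate_listings` function, the title template, the price, and the sample terms are all made up for illustration, and it doesn’t talk to any real stock photo library or to Amazon. What it does show is how little it takes to turn a list of search terms into a pile of products nobody ever looked at.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Listing:
    """A hypothetical product listing; every field name is an assumption."""
    title: str
    image_query: str
    price_usd: float


def generate_listings(search_terms, device_models, price_usd=12.99):
    """Pair every search term with every device model to make a listing.

    Nothing in here checks what a term means or what image it would
    return, which is exactly how a product ships without anyone
    at the company having seen it.
    """
    listings = []
    for term, model in product(search_terms, device_models):
        listings.append(
            Listing(
                title=f"{term.title()} Phone Case for {model}",
                image_query=term,  # would be sent to a stock photo search
                price_usd=price_usd,
            )
        )
    return listings


if __name__ == "__main__":
    # Made-up sample inputs; a real script would pull these automatically.
    terms = ["sunset beach", "medical equipment", "elderly care"]
    models = ["iPhone 6", "iPhone 7"]
    for listing in generate_listings(terms, models):
        print(listing.title)
```

Three terms and two phone models give you six listings. Feed it a few thousand terms and a few dozen models and you’re past 100,000 products without a single human in the loop.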

James Bridle says in his amazing post on Medium titled “Something is wrong on the internet”:

This is content production in the age of algorithmic discovery — even if you’re a human, you have to end up impersonating the machine.

In order to make it as a content creator, you have to sound like a robot in your title, headline, copy and pitch, because the robots have gotten so good at creating high-ranking, highly-viewed, highly-suggested content. Nowhere is this more evident than on YouTube, where thousands of bots have posted literally millions of automatically generated videos on “Kids YouTube.” Which has been a godsend for the “parenting via digital device” set (which is most parents, near as I can tell). Many 100,000+ view videos are created 100% from the ground up by robots that scrape background music, images, and keyword-heavy trending phrases, mash them into a stream, and entertain toddlers. But they have diluted the platform so deeply that people can create alarmingly disturbing video content that arrives on the platform, unchecked and uncensored. People are sneaking violent, disturbing, and sexual content into videos exclusively marketed and shown to children. And this can only happen because the platform they’re hosted on is so diluted with garbage, it’s impossible for a human team to curate and monitor the content.

Which brings me back to Bridle’s great article. I highly recommend you read the entire piece, but the most important part — to me — is this:

This, I think, is my point: The system is complicit in the abuse. And right now, right here, YouTube and Google are complicit in that system. The architecture they have built to extract the maximum revenue from online video is being hacked by persons unknown to abuse children, perhaps not even deliberately, but at a massive scale. [...] This is a deeply dark time, in which the structures we have built to sustain ourselves are being used against us — all of us — in systematic and automated ways.

Now, maybe the emergence of these technologies and their effect on the purely digital stream that flows from your little black rectangle of dopamine generation feels too sci-fi for you (despite being real, right here, right now things). So how about a more immediate and traditional method of controlling what you think? Like, say, a billionaire with very widely known political leanings in a certain direction buying and then shuttering a channel for information? (Thank god for people like Paul Ford, who is doing his part to save all the content that was destroyed in this move, and for Archive.org...)

Or, shutting down a newly important voice in the resistance against the fascist elements cropping up in today’s political and social sphere?

What if public, widely used video delivery platforms that host citizen-recorded footage of war crimes and bad behavior by police, footage which could be (and is) used as evidence at trial, suddenly decided to dump that content, eliminating the evidence?

Sure, it’s “just business,” but these businesses are where we get OUR INFORMATION. This is the stuff we use to make decisions. And when it’s fake, or manipulated, or made unavailable, we aren’t able to make good decisions. Dystopia is not just about technology (although, in the context of this column, it plays a heavy part). It’s about control. And whether or not these decisions were malicious, it’s still controlling the flow of information.

But sometimes it’s not even the corporation’s call. There’s always the case of a rogue employee who does what they think needs doing and shuts down the President's Twitter account.

And it’s worth noting, at least one government believes so much in hacking the flow of information, they have dedicated massive amounts of money to the program (no need to click, I’ll give it away: it’s Russia. And the program is called Fancy Bear, which is just too awesome a name not to type out myself).

There is no doubt: while we wait for people to establish some sort of formal ethics in AI, we head further and further along the path to dystopia.

More Precursors To Dystopia for the week of November 1-7, 2017:

- Speaking of Russia, one hacking group posted a fake Facebook protest in Texas, and another posted a counter-protest the same day.

- There’s a group dedicated to 3D printing objects that fool neural network AIs into misidentifying them.

- And another that can 3D print your house key from a photo.

- One of my favorite bloggers, Janelle Shane, trained her neural network to generate Halloween costumes.

- It’s interesting that Osama Bin Laden had Charlie Bit My Finger saved on his laptop.

- Google invented the real-life Babel fish.

- And if you’re bored this week, try hacking DNA. It’s like GATTACA, but in the comfort of your own home.

That’s it. Have any comments or insights you’d like to share? Please comment, I’d like to hear them and discuss. Have a good week.

Missed last week’s Precursor to Dystopia? Check it out!

-------

Joe Peacock is a writer and producer of the Screenland cyberculture documentary series on RedBull.TV, and author of the Marlowe Kana cyberpunk novel series.