The public’s expectations, and fears, around AI are exploding. I was at a public debate in London last week, and if I were to categorize the audience’s questions I would place them in three buckets. Bucket 1 was the worry, or hope, that AI will replace human workers and put an end to capitalism. Bucket 2 was how to make AI “ethical” so that it is safe to embed in our systems and lives. Bucket 3 I would call the “whatever bucket”: the free associations that people draw between unrelated things, out of ignorance or obsession with a certain subject. All three buckets, with their worries and anxieties, are the result of overestimating what the technology is capable of, and underestimating the capacity of humans to adapt and of human institutions to respond.

Mass media are making things worse by amplifying predictions of future economic dystopias, which are often based on moot assumptions and interpreted out of context. For example, when economists predict that a certain percentage of jobs will disappear because of automation, they do not necessarily predict that people will be left jobless forever. Predicting what will happen after jobs disappear due to automation is very difficult, and arguably impossible. Neo-classical economics cannot predict the future; hence economics is often called the “dismal science”. As I have recently discussed, only computational, non-equilibrium techniques can give us some clues about possible future scenarios.

State of Play for AI

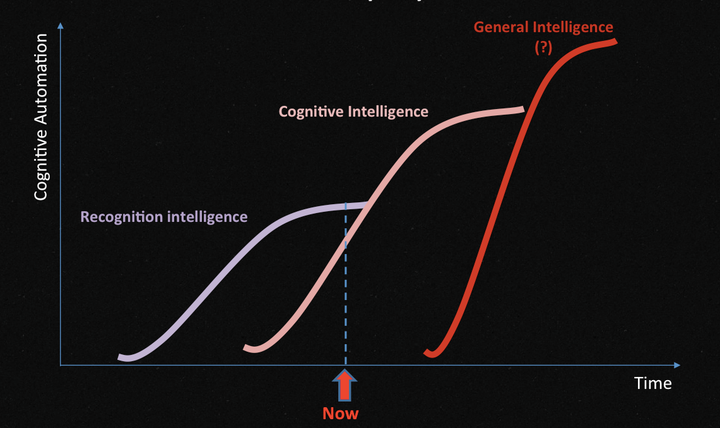

But let’s turn our attention to the present, and see where we really are with Artificial Intelligence and what we can expect in the near future. In the graph I have tried to depict three waves of AI innovation, according to the percentage of our cognitive abilities they could automate. The first wave of AI innovation I call “recognition artificial intelligence” (RAI): it is what machine learning does, particularly in image and speech recognition. It is impressive to have machines that can understand speech or identify objects and faces, and that is where all the hype around AI stems from. But that is all they can do. Traditional computing methods take over after that to deliver economic value.

The next wave of AI innovation is gradually emerging. I call it “cognitive artificial intelligence” (CAI): machines capable not only of inferring facts from data, but also of taking ethically-bound autonomous decisions in real time. A typical example is a driverless car. It is not enough for the car to recognize that a collection of pixels is a white van in front that is decelerating fast; it must also decide how to avoid a collision with the minimum material damage or human cost. We are not there yet, and it may take us another ten years to develop fully autonomous cars. And even when we do, there is a host of other technological challenges to solve. Moore’s Law, which has guided expectations over the past decades, is slowing down.

Even if we manage to evolve artificial cognitive intelligence, it will still be many more years before we develop “general artificial intelligence” (GAI). It will be a great leap for systems that can solve a narrow problem to be able to abstract their learning and apply it to whatever problem they may encounter. Although many philosophers argue that developing GAI is inevitable, that may not turn out to be true. For instance, we have been trying to develop thermonuclear energy for decades now; we know in theory how to do it, but we have not achieved it yet because of enormous technical difficulties. The distance between theory and practice is not always navigable. And our quest to solve a particular problem may have to be abandoned because it is no longer worth pursuing. In the case of thermonuclear technology, it may become irrelevant to build thermonuclear power stations, as renewables are likely to solve our energy problems anyway.

Likewise, developing CAI to a high degree over the next ten years may prove sufficient. Why go further and build GAI? Public backlash against GAI may also halt further research and development. There are numerous examples from molecular biology where progress was stalled by public reaction. Economics, together with science and technology governance, will decide the future of AI, not the executives, investors or entrepreneurs of Silicon Valley.