As anyone who routinely tunes in to watch Sunday Morning prognosticators sound forth on their big predictions about politics will tell you: it hurts to watch. So much. So very much. But they'll also tell you that being a political tout seems to be a pretty great gig: you show up, you say a bunch of stuff, and you never worry that you'll ever be held accountable for whatever you get wrong. That's why if you choose that path in life, you may as well be bold and make a bunch of insane predictions, because you're just as liable to accrue renown for being crazy as you are for being correct.

Nevertheless, from time to time, some intrepid souls take it upon themselves to study the augury and attempt to make a determination about who is right and who is wrong. And now, students at Hamilton College under the direction of public policy professor P. Gary Wyckoff have subjected 26 of your favorite pundits to rigorous analysis.

As a part of their research paper, titled "Are Talking Heads Blowing Hot Air," the Hamilton College students "sampled the predictions of 26 individuals who wrote columns in major newspapers and/or appeared on the three major Sunday television news shows (Face the Nation, Meet the Press, and This Week) over a 16 month period from September 2007 to December 2008." They used a scale of 1 to 5 (1 being "will not happen," 5 being "will absolutely happen") to rate each prediction the pundits made, and then they evaluated each prediction for whether or not it came true.

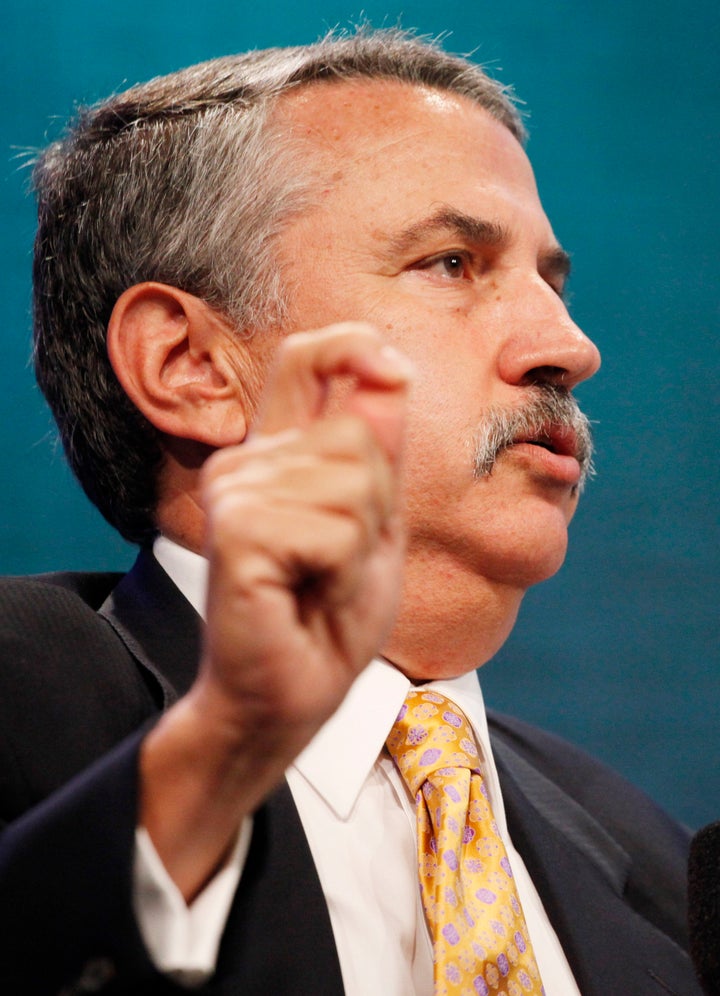

What did they find? Basically, if you want to be almost as accurate as the pundits they studied, all you have to do is a) root through the cushions of your couch, b) find a coin, and c) start flipping it. Boom! You are now pretty close to being a political genius. If you are just as gifted at torturing metaphors, you are now "Thomas Friedman." Only nine of the 26 pundits surveyed proved to be more reliable than a coin flip.
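For anyone who wants to see what "more reliable than a coin flip" could mean in practice, here is a minimal sketch. It is not the students' method, which this post does not spell out; it assumes each prediction is simply scored right or wrong and uses a one-sided binomial test against a fair coin. The function names and the 0.05 cutoff are illustrative assumptions.

```python
from math import comb


def coin_flip_p_value(hits: int, total: int) -> float:
    """Chance that a fair coin gets at least `hits` calls right out of `total`.

    Assumption: every prediction is a yes/no call scored as right or wrong.
    """
    if not 0 <= hits <= total:
        raise ValueError("hits must be between 0 and total")
    # Count the equally likely coin sequences with `hits` or more correct
    # calls, then divide by the number of all possible sequences.
    favorable = sum(comb(total, k) for k in range(hits, total + 1))
    return favorable / 2 ** total


def beats_coin_flip(hits: int, total: int, alpha: float = 0.05) -> bool:
    """True if the record would be unlikely (below `alpha`) for a fair coin."""
    return coin_flip_p_value(hits, total) < alpha


# Hypothetical records, not figures from the study:
print(beats_coin_flip(15, 20))  # 15 of 20 right
print(beats_coin_flip(12, 20))  # 12 of 20 right
```

Under these assumptions, a pundit who goes 12 for 20 looks better than 50 percent on paper but is still indistinguishable from the couch-cushion coin, which is the kind of distinction a "better than a coin flip" claim has to make.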

Using the students' statistical methodology, the 26 pundits were broken down into three categories: "The Good, The Bad, and The Ugly." Honestly, some of the results were pretty surprising. For instance: we kind of have to give some props to Maureen Dowd, who regularly salts her long essays of endlessly repeated playground insults and dated cultural references with some surprisingly accurate predictions. Here's how they break down:

THE GOOD: Paul Krugman, New York Times (highest scorer); Maureen Dowd, New York Times; Ed Rendell, former Pennsylvania Governor; Chuck Schumer, New York Senator; Nancy Pelosi, House Minority Leader; Kathleen Parker, Washington Post and TownHall.com; David Brooks, New York Times; Eugene Robinson, Washington Post; Hank Paulson, former Secretary of the Treasury

THE BAD: Howard Wolfson, counselor to NYC Mayor Michael Bloomberg; Mike Huckabee, former Arkansas Governor/Fox News host; Newt Gingrich, eternal Presidential candidate; John Kerry, Massachusetts Senator; Bob Herbert, New York Times; Andrea Mitchell, MSNBC; Thomas Friedman, New York Times; David Broder, Washington Post (deceased); Clarence Page, Chicago Tribune; Nicholas Kristof, New York Times; Hillary Clinton, U.S. Secretary of State

THE UGLY: George Will, Washington Post/This Week; Sam Donaldson, ABC News; Joe Lieberman, Connecticut Senator; Carl Levin, Michigan Senator; Lindsey Graham, South Carolina Senator; Cal Thomas, Chicago Tribune (lowest scorer)

In their executive summary, the students note:

"We discovered that a few factors impacted a prediction's accuracy. The first is whether or not the prediction is a conditional; conditional predictions were more likely to not come true. The second was partisanship; liberals were more likely than conservatives to predict correctly. The final significant factor in a prediction's outcome was having a law degree; lawyers predicted incorrectly more often."

As for the factor of partisanship, it certainly didn't help pundits if their predictions were primarily based on who they happened to be carrying a torch for in the 2008 election -- Lieberman and Graham, obviously, did poorly in this regard. The students noted that "[p]artisanship had an impact on predictions even when removing political predictions about the Presidential, Vice Presidential, House, and Senate elections," but I still imagine that this particular script may have flipped if the period of study were the sixteen-month period between September 2009 and December 2010.

Naturally, no one should be surprised that getting a law degree is just a big mistake in life. Anyway, go read the whole study.

RELATED:

Are Talking Heads Blowing Hot Air? [Executive Summary]

Are Talking Heads Blowing Hot Air? (PDF) [Full Study]
