This year's campaign may be the most-polled in history. We’re getting more clues as to why some online polls are more accurate than others. And we've rounded up a sampling of what we learned from the American Association for Public Opinion Research conference this year. This is HuffPollster for Monday, May 16, 2016.

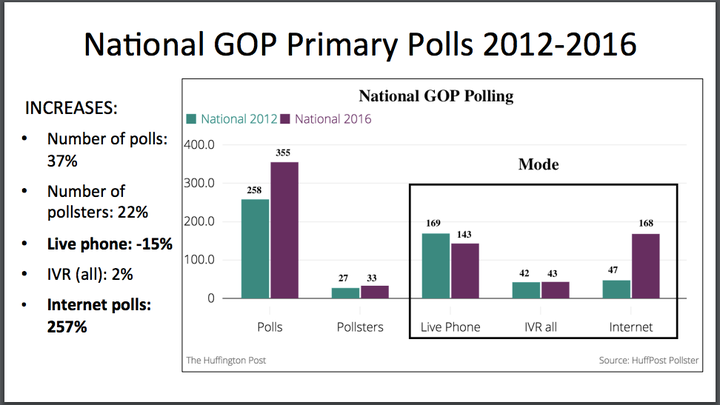

THERE WERE A LOT OF PRIMARY POLLS THIS YEAR - If it seems like there were a lot more primary polls this year than in the past, there were, as we showed in a presentation Saturday at the annual American Association for Public Opinion Research (AAPOR) conference. As of April 30, there were 355 publicly released national GOP primary polls in the HuffPost Pollster database, an increase of 37 percent over the 2012 national GOP primary poll total in the same time period. (We can’t compare Democratic primary polling to 2012 since there wasn’t a contested primary.) The increase isn’t because the 2016 race went on longer -- Mitt Romney was battling for the nomination through April of 2012.

Biggest increases in internet polling - Not only were there more polls in 2016 than in 2012, but there were also differences in how the polls were conducted. Only 47 of the 2012 national GOP primary polls were done online, but in 2016, nearly half of the polls were conducted over the internet. Telephone polls using live interviewers decreased a bit in the face of increasing costs. “IVR” or automated polls, which use interactive voice response technology, sometimes in combination with other modes, stayed steady in 2016.

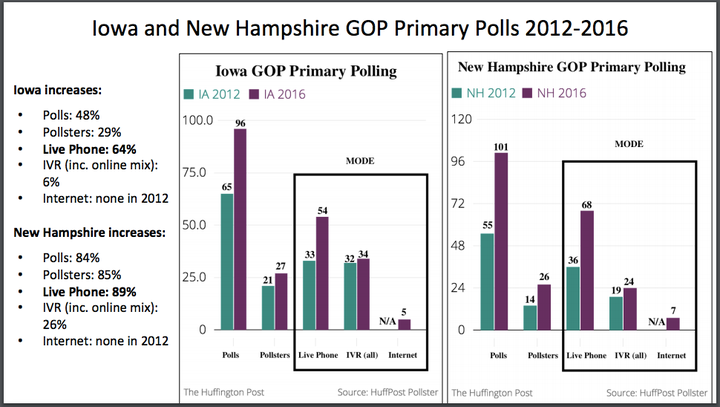

Polling in New Hampshire and Iowa had a slightly different makeup - Polling also increased in the individual states relative to 2012, although, as in 2012, some states were polled far more than others. New Hampshire and Iowa, the most heavily polled contests by far, both saw considerable increases in the number of polls. In those states, however, the biggest increases were in live interviewer telephone polls. There were no internet polls in these states in 2012, and 2016 saw just a handful. One reason: it's more difficult for online polls, which often rely on panels recruited to take surveys, to get large samples in small states like Iowa and New Hampshire than it is nationally. That's particularly true when the target is the relatively few people likely to vote in a low-turnout caucus or primary. [HuffPollster’s full presentation]

MORE ON AAPOR:

-Steven Shepard highlights five key takeaways from AAPOR presentations. [Politico]

-Stephanie Slade reports on the effects of bad question wording, how 2014 police killings have changed the way Americans view race and reasons why the US is not an oligarchy. [Reason, also here, and here]

-Annie Petit shares notes from sessions on the future of survey research, questionnaire design, online data quality and more. [LoveStats]

THE TOP POLLSTER IN A RECENT EXPERIMENT IS REVEALED - Douglas Rivers: "Last week, the Pew Research Center released a report "Evaluating Online Nonprobability Surveys," co-authored by Courtney Kennedy and six of her Pew colleagues. The study was released a few weeks before the annual American Association for Public Opinion Research (AAPOR) conference and attracted quite a bit of interest in the survey community….The study conducted ten parallel surveys--nine conducted on opt-in web panels and one on Pew's own American Trends Panels (ATP). ATP is a so-called 'probability panel' recruited off of Pew's telephone random-digit dial (RDD) telephone surveys….The headline results of the study, as summarized by Pew, were that 'Vendor choice matters; widespread errors found in estimates based on blacks and Hispanics.' In particular, one of the samples--labeled 'Vendor I' in the report--'consistently outperformed the others including the probability-based ATP.' This led to quite a bit of speculation about who was 'Vendor I' and how they managed to do better...To eliminate the suspense, Pew notified YouGov that we were 'Vendor I,' which was gratifying." [YouGov] (Disclaimer: The Huffington Post has an ongoing partnership with YouGov.)

What makes YouGov different from other pollsters - More from Doug Rivers: “[T]he main finding--that balancing samples on a few demographics does not remove selection bias in either probability-based or non-probability samples--is not new… and the concerns about sampling on variables other than demographics are overblown….There is nothing sacrosanct about demographics. These are convenient variables to weight by since there are official statistics (sometimes misleadingly referred to as "the Census") available on many demographics. However, you can weight on anything, so long as its distribution is known....We also have election returns (which tell us how many people voted Democratic or Republican) and exit polls (with about response rates around 50%) with detail on attitudes and voting for different types of voters. There are other relatively high response rate surveys, such as the American National Election Survey (ANES) and General Social Survey (GSS) which ask about religion, party identification, political ideology, interest in politics, and other attitudinal variables. While it has been traditional to limit survey weighting to a few demographics, non-demographics can be used if there are reliable benchmarks for these variables. Not only is weighting by non-demographics not a mistake, it is a necessity for most surveys today.” [YouGov]
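To make Rivers' point concrete, here is a minimal sketch of weighting a sample on a single non-demographic variable (party identification) so that its weighted distribution matches a known benchmark. It is an illustration only, not YouGov's or Pew's actual procedure: the function names `poststratify` and `weighted_share`, the 100-person sample and the benchmark shares are all hypothetical, and real pollsters typically weight on several variables at once.

```python
from collections import Counter


def poststratify(sample, benchmark):
    """Return one weight per respondent so that the weighted share of each
    category in `sample` matches the target share in `benchmark`.

    Both arguments are illustrative assumptions: `sample` is a list of
    category labels, `benchmark` maps each label to its known population share.
    """
    missing = set(sample) - set(benchmark)
    if missing:
        raise ValueError(f"no benchmark share for: {sorted(missing)}")
    counts = Counter(sample)
    n = len(sample)
    # weight = target share / observed share for the respondent's category
    return [benchmark[label] / (counts[label] / n) for label in sample]


def weighted_share(sample, weights, category):
    """Weighted proportion of respondents falling in `category`."""
    total = sum(weights)
    return sum(w for label, w in zip(sample, weights) if label == category) / total


# Hypothetical sample that over-represents Democrats relative to the benchmark.
party_id = ["D"] * 50 + ["R"] * 30 + ["I"] * 20
# Hypothetical benchmark shares (made-up numbers, not real election data).
benchmark = {"D": 0.40, "R": 0.35, "I": 0.25}

weights = poststratify(party_id, benchmark)
for label in benchmark:
    print(label, round(weighted_share(party_id, weights, label), 3))
```

The only requirement, as Rivers notes, is that the distribution of the weighting variable be known; nothing in the arithmetic cares whether that variable is a demographic or an attitude.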

MONDAY'S 'OUTLIERS' - Links to the best of news at the intersection of polling, politics and political data:

-A survey of top U.S. business leaders shows they don't think Donald Trump has a shot at the presidency. [CNBC]

-Harry Enten reminds us that it’s too early to look at Electoral College math. [538]

-Kathy Frankovic finds that Donald Trump has made significant gains among Republicans, but not with other voters. [YouGov]

-Philip Bump charts the leaders in past presidential elections during this stage of the campaign. [WashPost]

-Daniel Cox argues that other liberal social issues will likely fail to replicate the success of gay marriage. [538]

-Pollsters warn that surveys on the British referendum to stay in or exit the EU could be wrong. [Business Insider]

-Jessica Holzberg analyzes whether "bring your own device" is acceptable in survey research. [Census Bureau]

-Public Policy Polling (D) is among the plaintiffs suing Attorney General Loretta Lynch over limits on robocalling. [Triangle Business Journal]