Nate Silver’s model gives this map (or worse for Clinton) a relatively high chance of occurring.

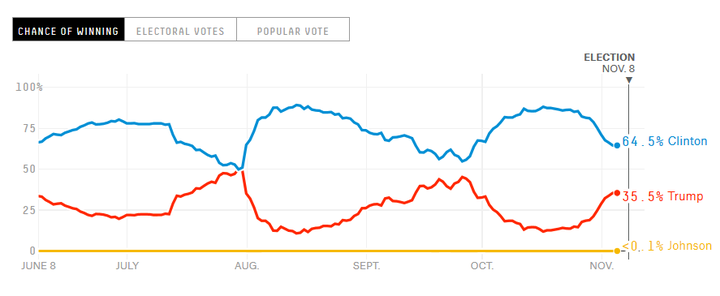

While I love following the prediction markets for this year’s election, the most popular and widely quoted website out there, fivethirtyeight.com, has something tragically wrong with its presidential prediction model. With the same information, 538 is currently predicting a 65 percent chance of a Clinton victory, while HuffPost’s Natalie Jackson and Adam Hooper are projecting a 98 percent chance,[1] and Sam Wang at the Princeton Election Consortium is predicting a >99 percent chance.[2] What gives?

538 has been all over the map this election. Their model fluctuates, often irrationally, with each new poll that comes in. Underlying this is an overly complex and opaque set of assumptions that are probably too smart for their own good. If you go to the updates page on the 538 website,[3] you can see how each new poll or set of polls moves their probabilities. It first struck me when a poll on October 27th, showing Trump up by 19 points in Idaho,[4] somehow moved the prediction from Clinton with an 84.4% chance to win the presidency down to an 84.2% chance. I know that’s not a big movement, but why is a poll in Idaho that basically confirms the expected result moving the national race at all?

If you scroll through the updates, you’ll see countless other similar inexplicable movements. On Friday, Roanoke College came out with a poll that had Clinton up by 18 points in Virginia. Guess how that moved the probability of a national Clinton victory? That’s right, down four-tenths of a point, from 64.3 percent to 63.9 percent. Huh?

All of this would be curious, but acceptable, if it weren’t for the output from the model. 538 has done a ton of empirical work to see what factors should influence the probabilities. I am not questioning any specific assumption that 538 makes, from their state-by-state correlations to their use of a t-distribution to create “fat tails” in their probability distributions (i.e., higher likelihood of otherwise obscure events). What I am questioning is 538’s professional competence and responsibility in reality checking the output of their model.

In my more than 20 years of building and managing financial and statistical models, first as an investment banker and for the past 15 years as an economic consultant, the mantra for model building has always been “garbage in, garbage out.” You can have the most intricate set of assumptions, but if your model is spitting out unrealistic results, there’s something wrong with your model.

538’s probability of a Clinton or Trump victory relies on the results of at least 20,000 model runs each time a new poll (or set of polls) comes in.[5] To get their probabilities of victory for each candidate, they count the runs in which that candidate got 270 or more electoral votes, much like Jackson and Hooper at HuffPost or Sam Wang at PEC.
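For readers who want to see the mechanics, here is a minimal Python sketch of that counting step. It is not 538’s, HuffPost’s or PEC’s actual code: the contested-state list, the win probabilities and the function name `win_probability` are all made up for illustration, and it treats states as independent, which 538 (with its state-by-state correlations) does not.

```python
import random

# Hypothetical contested states: (electoral votes, chance the candidate wins).
# These numbers are invented for illustration, not taken from any forecaster.
CONTESTED = [(29, 0.50), (20, 0.75), (18, 0.40), (16, 0.60), (15, 0.50), (10, 0.70)]


def win_probability(safe_ev, contested, runs=20_000, threshold=270, seed=0):
    """Share of simulated runs in which the candidate reaches the threshold.

    safe_ev   -- electoral votes assumed to be locked in (an assumption)
    contested -- list of (votes, win probability) pairs, simulated independently
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    wins = 0
    for _ in range(runs):
        # One model run: flip a weighted coin for every contested state.
        ev = safe_ev + sum(votes for votes, p in contested if rng.random() < p)
        if ev >= threshold:
            wins += 1
    return wins / runs


if __name__ == "__main__":
    share = win_probability(200, CONTESTED)
    print(f"Candidate reaches 270 in {share:.1%} of runs")
```

The reported “chance of victory” is nothing more than that final ratio, so everything interesting lives in how each run draws its state results.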

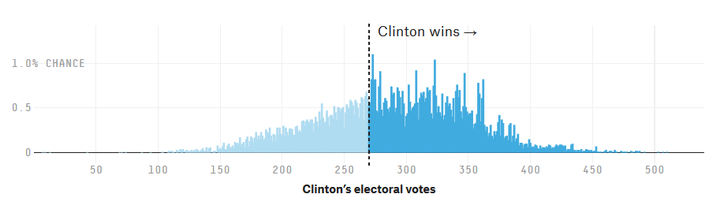

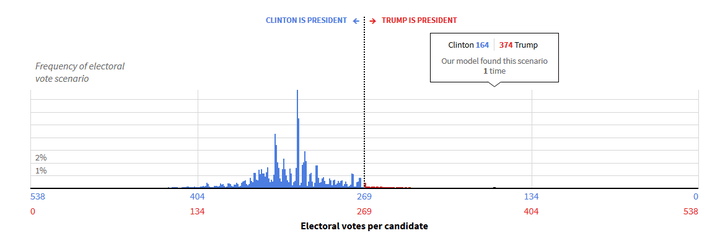

However, when you look at 538’s output, it is very curious. In the histogram below from their website,[6] you’ll see the probability that Clinton will get any specific number of EVs. The highest bar, the mode, gives her about a 1.1 percent chance of getting 271 EVs. They also report the median, which on Saturday showed Clinton with 290.6 EVs.

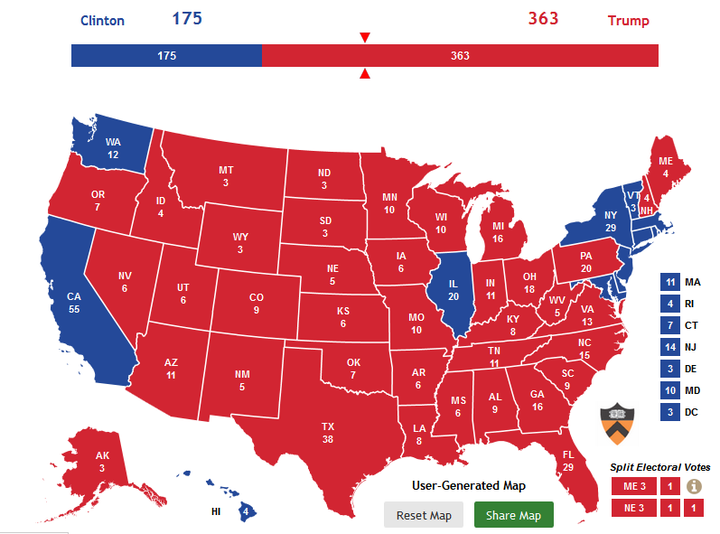

But a deeper examination of this distribution makes you wonder what’s in their model. The histogram above shows more than a 7 percent chance that Clinton gets fewer than 200 EVs.[7] There is no remotely plausible map that has Clinton with fewer than 200 EVs, let alone fewer than 150 EVs, yet outcomes below 150 EVs occur in at least one percent of 538’s model runs! To get a map where Clinton gets only 175 EVs, we have to assume she loses all of the swing states, including the entire upper Midwest, VA, PA, FL, NH, and NC, and that only gets her down to 190. You’d still need to take Maine, NM and Oregon off the board to get her down to 175:

Is this a map that looks remotely plausible? Would you take a bet giving 40:1 against this map (or worse for Clinton) occurring?

On the other side of the histogram, is there really a greater than 3 percent chance that Clinton gets over 400 EVs at this point in the race? The only possibility there is if she wins all remotely marginal states, and adds to it Georgia, South Carolina, Arkansas and Texas to get over 400. Is there really any chance of that happening at all, let alone more than 3 percent of the time?

This is all to say that something, perhaps many things, in 538’s model has serious, if not fatal, flaws. Perhaps Nate Silver can say that he’s gotten all of these improbable outcomes because of his use of the t-distribution rather than a normal distribution to accentuate the tails of the model. But why? Why would you use a t-distribution to fatten your tails unless you lacked confidence in your model or your output?
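To give a rough sense of how much a t-distribution fattens the tails, here is a small Python sketch that compares the chance of landing more than three units from the center under a standard normal and under a Student’s t. The degrees of freedom (`DF = 4`) and the cutoff are my own assumptions for illustration; I don’t know what parameters 538 actually uses, and the t draws here are not rescaled to match the normal’s variance.

```python
import math
import random
from statistics import NormalDist


def t_variate(rng, df):
    """Draw one Student's t value: a standard normal over sqrt(chi-square / df)."""
    z = rng.gauss(0.0, 1.0)
    chi2 = rng.gammavariate(df / 2.0, 2.0)  # chi-square with df degrees of freedom
    return z / math.sqrt(chi2 / df)


def t_tail_fraction(cutoff, df, draws=100_000, seed=0):
    """Simulated share of t draws whose absolute value exceeds the cutoff."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(draws) if abs(t_variate(rng, df)) > cutoff)
    return hits / draws


def normal_tail_fraction(cutoff):
    """Exact two-sided tail probability for a standard normal."""
    return 2.0 * (1.0 - NormalDist().cdf(cutoff))


DF = 4        # hypothetical degrees of freedom, not 538's actual setting
CUTOFF = 3.0  # hypothetical cutoff, in units of the distribution's scale

if __name__ == "__main__":
    print(f"normal tail: {normal_tail_fraction(CUTOFF):.2%}")
    print(f"t tail (df={DF}): {t_tail_fraction(CUTOFF, DF):.2%}")
```

The normal figure is about 0.27 percent; with so few degrees of freedom the t figure comes out many times larger, which is the kind of gap that can turn a near-impossible map into a one-in-forty event.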

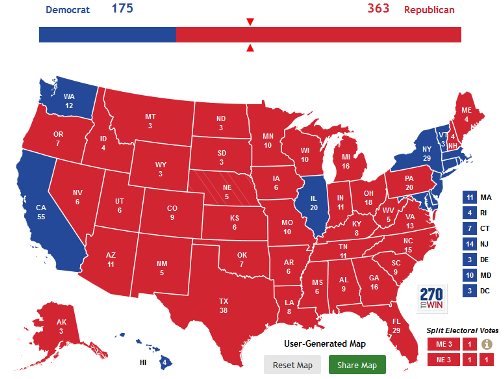

When we compare the 538 histogram with other polling aggregators, we see the other end of the spectrum. Sam Wang’s model shows a >99 percent chance of a Clinton victory. Wang uses pretty much the same polling data, but his histogram shows a more plausible range of 240-370 EVs for Clinton:[8]

The outcomes in blue are the ones that occur 95 percent of the time. This shows that in Wang’s model runs, Clinton gets between 265 and 340 EVs almost all of the time, and never has a scenario where she gets fewer than 240 EVs or more than 380. This passes the smell test, since whatever his assumptions, the model produces plausible output.

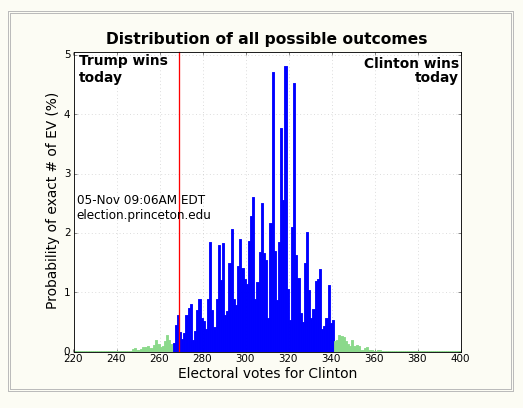

The Huffington Post’s forecast lines up well with Wang’s model. On HuffPost’s histogram, you can actually view the number of times in the simulation each particular electoral vote outcome occurred:[9]

In 10 million simulations, Clinton getting below 200 EVs occurred 151 times, and never with fewer than 164 EVs. Of course HuffPost’s 0.00151 percent chance of Clinton getting fewer than 200 EVs is driven by the assumptions underlying the model, but when you do a reality check of PEC’s or HuffPost’s predictions, these numbers, too, pass the smell test. Sure, I could put together an electoral map that has Clinton getting 164 EVs, but I wouldn’t bet on it actually happening for less than the 10 million to 1 payoff HuffPost generated.
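The arithmetic behind these comparisons is simple enough to check yourself. The Python sketch below converts a simulation count into a percent chance and converts “N:1 against” betting odds into the break-even probability; the helper names `percent_chance` and `implied_probability` are mine, not anything the forecasters publish.

```python
def percent_chance(occurrences, runs):
    """Percent of simulation runs in which an outcome occurred."""
    return 100.0 * occurrences / runs


def implied_probability(odds_against):
    """Break-even probability for a bet paying odds_against to 1."""
    return 1.0 / (odds_against + 1.0)


# HuffPost: 151 runs below 200 EVs out of 10 million simulations.
huffpost_pct = percent_chance(151, 10_000_000)
# The 40:1 bet against the 175-EV map (or worse) discussed earlier.
bet_pct = 100.0 * implied_probability(40)

print(f"{huffpost_pct:.5f} percent")  # 0.00151 percent
print(f"{bet_pct:.1f} percent")       # 2.4 percent
```

By that yardstick, a 40:1 bet only breaks even if the map has about a 2.4 percent chance of happening, while HuffPost puts Clinton falling below 200 EVs at 0.00151 percent.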

Meanwhile, Silver’s model is causing mass hysteria[10] as it’s the most widely followed presidential poll aggregator, and has moved from Clinton with an 87.4 percent chance of winning on October 18th to a 64.5 percent chance on Saturday.

It’s great to build a complex model and load it with empirical data like polls, economic reports and presidential approval ratings. But if the output of that model is implausible, it’s time to go back to the drawing board.

As a financial analyst at an investment bank, or a research analyst at an economic consulting firm, your job would be in serious jeopardy if you produced 538’s model output without a clear explanation of how those fat tails that represent an inordinate number of close to impossible scenarios could actually occur. A model like that just isn’t client-ready. Time to re-think those assumptions!

---

[4] I have to ask, why is anyone polling Idaho? But I digress. To find Idaho on the 538 updates, scroll to the bottom of the Update page and click “Show more updates” until October 27th appears. Note that the 538 model is run when new polls come in, which sometimes happens with multiple polls, and other times, like in this case with Idaho, happens with just one poll.

[5] http://fivethirtyeight.com/features/a-users-guide-to-fivethirtyeights-2016-general-election-forecast/

[6] http://projects.fivethirtyeight.com/2016-election-forecast/, captured Nov. 5th at 9:39am.

[7] This has to be estimated using the approximate area under the curve since 538 does not give the exact number of times each scenario occurs.

[8] http://election.princeton.edu/todays-electoral-vote-histogram/, from the Nov. 5th 9:06 AM model run.

[9] http://elections.huffingtonpost.com/2016/forecast/president, from the Nov. 6th, 3:10 PM model run.