The ability of competition to hone human performance is well known and celebrated in sports, the arts and business, to name only a few fields. Competition between robots or agents is new only because robots are new. Predictably, though, competition, or adversarial interaction, is already proving to be a dramatic accelerator of advancement in artificial intelligence. Competition between robots lets them learn at a rate an order of magnitude faster than they could learn on their own. This has huge implications for machine learning itself, but also for the larger discussion of the coming singularity predicted by Ray Kurzweil, when computers begin to propagate computers, and for the debate introduced by Elon Musk over just how wary humans ought to be about rapid advancements in ML and AI.

Adversarial learning, in the form of Generative Adversarial Networks (GANs), is only a few years old but has already emerged as an important new branch of ML and AI. Pioneered by Ian Goodfellow, a researcher at Google Brain, and colleagues, it draws inspiration from a quote by the Nobel Prize-winning physicist Richard Feynman: “What I cannot create, I do not understand.” Accordingly, GANs focus on using machines to create things rather than to interpret things observed in the real world. A good solution to Feynman’s challenge in the AI context turns out to be using two agents, working as friendly adversaries, to create order from chaos.

A simple demonstration of the technique starts with one neural network, or robot, proposing a set of pixels as an image. A second agent then judges whether the image is real or not. Starting with essentially random data, the pair of robots is able, within a comparatively short amount of processing time, to produce images that resemble real ones. What distinguishes real images? They show clear boundaries, areas of related colors, and structure. Robots teamed together using the adversarial technique are able to generate images with these features without human supervision. Rather than merely classifying images, say letters as A or B, which is the realm of traditional machine learning and artificial intelligence, GAN agents playing this simple game are able to produce new images that appear to have been drawn from the real world.

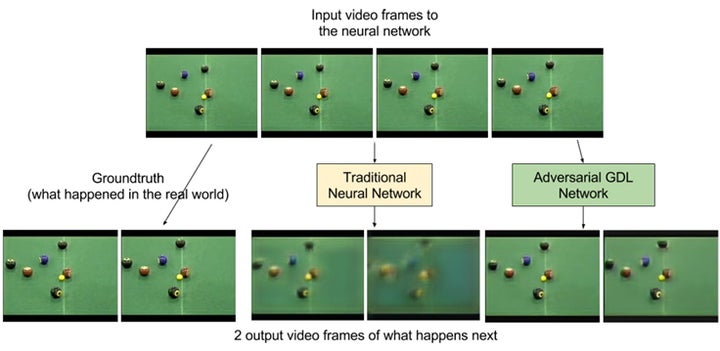
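The two-player game described above can be sketched in a few lines of Python. This is only a toy illustration under loud assumptions, not the method used in the research itself: instead of images, the "real world" is a stream of numbers centered on a made-up value (`REAL_MEAN`), the generator has a single hypothetical parameter (`shift`), and the discriminator is a simple logistic classifier with two parameters (`w` and `c`). All names, learning rates and step counts here are illustrative choices.

```python
import math
import random

REAL_MEAN = 2.0  # assumption: the "real world" is numbers drawn around 2.0


def sigmoid(u):
    return 1.0 / (1.0 + math.exp(-u))


def train_toy_gan(steps=3000, batch=64, lr_d=0.1, lr_g=0.02, seed=0):
    """Toy 1-D adversarial game (illustrative sketch, not a real image GAN).

    Generator:      fake = shift + noise      (learns `shift`)
    Discriminator:  D(x) = sigmoid(w * x + c) (estimated chance x is real)
    """
    rng = random.Random(seed)
    shift = -2.0     # the generator starts far from the real data
    w, c = 0.0, 0.0  # the discriminator starts with no opinion (D = 0.5)

    for _ in range(steps):
        real = [rng.gauss(REAL_MEAN, 1.0) for _ in range(batch)]
        fake = [shift + rng.gauss(0.0, 1.0) for _ in range(batch)]

        # Discriminator step: gradient ascent on
        # mean(log D(real)) + mean(log(1 - D(fake))).
        d_real = [sigmoid(w * x + c) for x in real]
        d_fake = [sigmoid(w * x + c) for x in fake]
        grad_w = (sum((1.0 - d) * x for d, x in zip(d_real, real))
                  - sum(d * x for d, x in zip(d_fake, fake))) / batch
        grad_c = (sum(1.0 - d for d in d_real) - sum(d_fake)) / batch
        w += lr_d * grad_w
        c += lr_d * grad_c

        # Generator step: gradient ascent on mean(log D(fake)),
        # i.e. move `shift` in the direction that fools the updated judge.
        d_fake = [sigmoid(w * x + c) for x in fake]
        grad_shift = sum((1.0 - d) * w for d in d_fake) / batch
        shift += lr_g * grad_shift

    return shift, w, c


if __name__ == "__main__":
    shift, w, c = train_toy_gan()
    print("generator output is centered near", round(shift, 2))
    print("discriminator's verdict on a typical real sample:",
          round(sigmoid(w * REAL_MEAN + c), 2))
```

Neither agent is ever told what the real numbers look like in advance. The generator improves only because the discriminator keeps catching it, and the discriminator improves only because the generator keeps getting harder to catch. In this sketch the generator takes smaller steps than the discriminator, a design choice made here so that the judge stays reasonably sharp while the forger catches up.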

The method is already gaining traction in the real world. Researchers at Facebook are using the adversarial technique to predict video frames (what comes next in a video of a game of billiards, for example) or, more broadly, to predict future events based on a model of the present. This is essentially how the human brain works: using a model of the world, it predicts the likelihood of future events, from a pen falling downward, to a pedestrian crossing an intersection, to getting accepted to the graduate school of one’s choice. The longer-range goal at Facebook is to develop “common sense” that can predict, for example, what content a user will like.

More broadly, however, the progress of adversarial learning to date shows that machine learning is on the cusp of using combinations of agents or networks, each fulfilling a specific task, to dramatically accelerate learning. Nature is replete with examples of the benefits of teamwork and specialization, from the hunting behavior of predators to the social organization observed in human society. While many concerns about the rapid growth of AI have centered on the possible emergence of malicious agents, or malicious algorithms that do not have humanity’s best interests in mind, a greater concern may be the effects of two or more agents working in tandem on software and, by extension, on human well-being.

In any case, the ability of robots to learn from adversarial interaction suggests that artificial intelligence and algorithms are coming at the world faster than previously anticipated.