If there is any feature on the smartphone that people of all ages use, it is the camera. One report even claims that 90% of people have only ever taken a picture using a mobile phone instead of a ‘real’ camera. While it is fair to be skeptical of statistics found on the internet, this one at least plays on a reality that we see all around us: the ubiquitous smartphone camera.

We text pictures, Instagram pictures, Facebook pictures, Pinterest pictures, and Snapchat everything, whether particularly interesting or not. The device capturing all of those images is the camera-equipped smartphone. But what do we really know about the cameras behind the images we see and share, and how are they going to change in the coming years?

Eugene Panich is the CEO of Austin-based software company Almalence, a leading developer of the technology powering some of the world’s most-used smartphones. I asked him to share his perspective on what we do not know about the cameras we use and how they might be changing.

Q: How has the software in mobile phone cameras changed in recent years?

Eugene: Drastically. A few years ago software was considered an optional extra, and now it's an integral, indispensable part of the camera subsystem. Sometimes the design of a new phone model's camera starts with looking at the software and only then selecting the hardware.

Previously, the software was primarily used for post-processing, like adding some fancy filters to a photo that had already been taken. Now the image capture process itself relies on software to improve the image as it is taken. In other words, in the time between when you snap a picture and when you see it in your photo album, it has been processed and enhanced digitally.

Q: Some phones take really good pictures and some do not. How much of the disparity is because of software and how much is hardware?

Eugene: When it comes to low-end phones, the major difference comes from hardware. Such devices are usually too weak to run any good computational imaging.

In the mid-range segment some software is being used, but most of the image quality comes from hardware. The phone maker has to decide how much to spend on the quality of the camera system for their target market. Hardware comes first on the list, leaving less room for software in the cost structure.

At the high end the software and hardware cannot exist separately. Typically the hardware demands highly complex software to make it work. For example, if the phone uses a dual-camera setup to capture more image data, the software must process that data before the image is usable.

Q: Can software alone ever allow for totally lossless digital zoom?

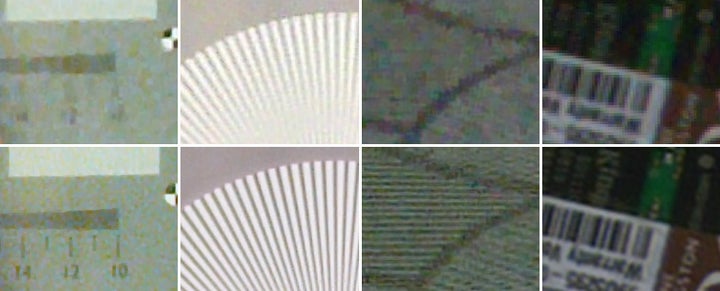

Eugene: Lossless zoom is the ability of the camera system to not lose resolution when zooming, or in the case of a smartphone, to restore the resolution lost due to cropping an image. While increasing resolution in software may sound magical and unrealistic, like creating something from nothing, the computational methods, especially those based on combining multiple frames into one, have a sound scientific basis. To get an idea of how multi-frame resolution works, think of focusing on an object for a while, and how you start to see more detail. Multi-frame resolution functions similarly.
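To make the multi-frame idea concrete, here is a minimal, highly idealized sketch of combining several low-resolution frames into one finer image. It is not Almalence's method. It assumes a one-dimensional "scene", perfectly known sub-pixel offsets between frames, and no noise or blur; the function names `capture_frame` and `merge_frames` are hypothetical and chosen only for illustration.

```python
def capture_frame(scene, factor, offset):
    """Simulate one low-resolution frame.

    Assumption: the sensor keeps every `factor`-th sample of the scene,
    starting at `offset` (a stand-in for a small hand-shake shift).
    """
    return scene[offset::factor]


def merge_frames(frames, factor):
    """Interleave frames taken at offsets 0..factor-1 onto one fine grid.

    Assumption: frames[k] was captured with offset k, so the offsets
    are known exactly. Real pipelines have to estimate them.
    """
    merged = [0.0] * sum(len(frame) for frame in frames)
    for offset, frame in enumerate(frames):
        merged[offset::factor] = frame
    return merged


# A fine-detail "scene" that no single low-resolution frame captures fully.
scene = [float((i * 7) % 5) for i in range(12)]
factor = 3

# Three frames, each shifted by one fine-grid step relative to the last.
frames = [capture_frame(scene, factor, offset) for offset in range(factor)]

# Each frame holds a third of the samples; together they cover the scene.
restored = merge_frames(frames, factor)
```

Each frame alone has only four samples, but because every frame sees the scene from a slightly different position, the merged result recovers all twelve. Real cameras face unknown shifts, sensor noise, and lens blur, so production algorithms are far more involved than this sketch.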

And the answer is yes, and in fact there are almost no other options. The traditional hardware option, optical zoom, is supposed to be lossless by default, but in practice it is not quite lossless, because the resolution of a small mobile lens is fundamentally limited by diffraction effects.

At the same time, as the computational power of smartphones improves with new dedicated image processing units, processing time and power consumption cease to be limiting factors for resolution improvement through software.
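The diffraction limit mentioned here can be estimated with the standard Airy disk formula, where the diameter of the smallest spot a lens can form is roughly 2.44 × wavelength × f-number. The sketch below applies it to illustrative numbers. The f/1.8 aperture, the 550 nm (green) wavelength, and the 1.0 µm pixel pitch are assumptions made for the example, not figures from the interview.

```python
def airy_disk_diameter_um(wavelength_nm, f_number):
    """Airy disk diameter (to the first dark ring) in micrometres.

    Uses the standard approximation: diameter = 2.44 * wavelength * f-number.
    """
    wavelength_um = wavelength_nm / 1000.0
    return 2.44 * wavelength_um * f_number


# Illustrative values only (assumptions, not taken from the interview).
wavelength_nm = 550      # green light
f_number = 1.8           # a plausible phone-lens aperture
pixel_pitch_um = 1.0     # a plausible small-sensor pixel size

spot_um = airy_disk_diameter_um(wavelength_nm, f_number)
pixels_per_spot = spot_um / pixel_pitch_um

print(f"Airy disk diameter: {spot_um:.2f} um")
print(f"Spot spans about {pixels_per_spot:.1f} pixels")
```

Under these assumptions the blur spot is wider than a single pixel, which is one way to see why simply adding optical zoom to a tiny lens does not guarantee pixel-level sharpness.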

Q: Where are mobile phone cameras headed in the next five years?

Eugene: Five years is quite a long period of time for such a competitive, user-focused, and mass-market industry as mobile cameras.

Remember that besides placing calls, texting, and accessing the internet, which every phone must do, the number one function of a mobile phone is imaging. Look at the competition in the industry, at how much attention is paid to imaging quality and features. It's really not accurate to call these devices phones anymore. Now they are pocket-sized cameras that are also expected to access the internet and send text messages.

Besides new trends in mass-market models, such as dual cameras, mobile phone makers are likely to test absolutely crazy designs on niche models (so not flagship phones like the iPhone). Those tests that succeed will then be implemented more widely.

These are a handful of ideas that may be tested in the coming years:

- Array cameras, ranging from many small cameras placed in a small array to an array of big (normal mobile size) cameras occupying a large part of the phone

- Mobile phones with four cameras placed at considerable distances from each other (such as in the corners) to achieve good 3D performance

- Pop-up and other mechanically transforming cameras that either implement optical zoom or accommodate large sensors

- Additional wide-angle, 180- or even 360-degree cameras

- Attachable camera modules (making the device bigger but allowing better imaging)