Any parent of a two-year-old appreciates the power of speaking a common language. There is nothing more frustrating to my two-year-old son than his inability to communicate what he wants. Learning to say things like "milk" and "applesauce" has transformed the breakfast experience. On the other hand, learning to say "trash" means that any object in reach is in danger of being tossed in the trash bin for the glory of saying "twash" over and over again.

I see this in my world as well. Millions of dollars are spent in the software world translating from one "language" to another. In fact, a whole industry has popped up around shared Application Programming Interfaces (APIs) to standardize how systems communicate. Despite this trend, there seems to be more emphasis on the "communication" than on the "data" itself. Data analytics products in particular seem happy to shove all types of data into one mold, since the output looks the same.

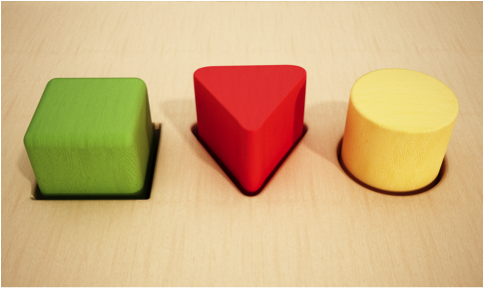

It is important now for data analytics vendors to take the road less travelled. Data needs to be treated "natively" - in other words, don't try to shove a square data peg into a round systems hole. Just as speaking the local language changes the experience of travel, speaking the native language of data transforms the analytics experience. In particular, data analytics has to be bilingual - speaking the native language of both raw logs and time-series metrics. And here is why it matters - according to my toddler.

Answer my Question - Quickly

Toddlers are well acquainted with the frustration of not being understood. Everything takes too long, and they need it now. And you know what? I get it. I have had occasion to speak with people who don't speak my language, and it is hard. I once spent 15 minutes in a pharmacy in Geneva trying to order anti-bacterial cream. There was a lot of waving of hands and short, obvious words (Arm. Cut. Ow!).

You face the same obstacles if you use a log system to store metrics. Every query needs to be translated from one language to another, and it takes forever. At the end of the day, you can try to optimize a log system - built to search for needles in haystacks - to perform the equivalent of speed reading, but eventually the laws of physics intervene. You can only make it so fast. It takes too long - and like my toddler, you will just stop asking the question. What's the use in that?

Cleaning up is Hard

I am always amazed at how my two-year-old can turn a nice stack of puzzles or a bucket of toys into a room-sized disaster zone - it is the same components, but vastly different results. The same goes for how you store operational data, where storage optimization is essential. There is a natural assumption underneath a true log-analytics system. We assume on some level that each log is a special snowflake.

There is, of course, a lot of repetition, but the key is to be flexible and optimize for finding key terms very quickly. Metrics, on the other hand, are repetitive by design. Every record of a measurement is the same - except for the measurement itself. Once you know you are collecting something - say system CPU performance on some server - you don't need to capture that reference every time. You can optimize heavily for storing and retrieving long lists of numbers. Storing time-series metrics as logs, or events, is extremely wasteful. You can incur anywhere from 3x to 10x more in storage costs - and that is without matching the performance. To achieve the performance that most metrics systems can reach, you are looking at 10-20x in storage costs. This, of course, is the reason why no log-analytics tools are really used for performance metrics at scale - the immense costs involved just don't justify the benefit of tool reduction.
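To make the "don't capture that reference every time" point concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: the host name `web-01`, the metric name `cpu.percent`, the JSON log shape, and the fixed-width binary layout are all made up for this example, and neither encoder represents how any particular product stores data. It writes the same 1,000 samples twice, once as self-describing log lines and once as a series whose identity is stored a single time, then prints both sizes.

```python
import json
import struct

def encode_as_logs(host, metric, samples):
    """Log-style: every sample repeats the host and metric name (hypothetical format)."""
    lines = [
        json.dumps({"ts": ts, "host": host, "metric": metric, "value": value})
        for ts, value in samples
    ]
    return "\n".join(lines).encode("utf-8")

def encode_as_series(host, metric, samples):
    """Metric-style: store the series identity once, then fixed-width pairs."""
    header = json.dumps({"host": host, "metric": metric}).encode("utf-8")
    # "<qd" = little-endian 8-byte integer timestamp + 8-byte float value.
    body = b"".join(struct.pack("<qd", ts, value) for ts, value in samples)
    return struct.pack("<I", len(header)) + header + body

def decode_series(blob):
    """Read back the header and the list of (timestamp, value) pairs."""
    (header_len,) = struct.unpack_from("<I", blob, 0)
    header = json.loads(blob[4:4 + header_len])
    body = blob[4 + header_len:]
    samples = [struct.unpack_from("<qd", body, off) for off in range(0, len(body), 16)]
    return header, samples

# Made-up data: one CPU reading every 10 seconds.
samples = [(1_700_000_000 + 10 * i, 40.0 + (i % 7) * 0.5) for i in range(1000)]

as_logs = encode_as_logs("web-01", "cpu.percent", samples)
as_series = encode_as_series("web-01", "cpu.percent", samples)

print("log-style bytes:   ", len(as_logs))
print("series-style bytes:", len(as_series))
```

The gap you see here comes purely from repeating field names and identifiers on every line. Real systems add compression and indexing on both sides, so treat the printed ratio as a toy illustration of the mechanism, not as a measurement of the storage multiples quoted above.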

I Want to Play with my Cars Anywhere

One of the funniest things about my son is how he plays with his toy cars. He has racetracks, roads, and other appropriate surfaces. He rarely uses them. He prefers to race his cars on tables, up walls, and on daddy's leg. The flexibility of having wheels is essential. He has other "cars" that don't roll - he doesn't really play with them. It is the same core truth with data analytics. Once you have high performance with cost-effective storage, uses just present themselves. Now you can perform complex analytics without fear of slowing the system to a crawl. You can compare performance over months and years, rather than minutes and hours - because storage is so much cheaper. Innovative use cases will always fill the new space created by platform enhancements - just as restricted platforms will always restrict the use cases.

Choose Wisely

So, it's 2 AM. Your application is down. Your DevOps/Ops/Engineering team is trying to solve the problem. They can either be frustrated that they can't get their questions answered, or they can breeze through their operational data to get the answers they need. I know what my two-year-old would tell you to do. Time to put your old approach to data analytics in the twash.