When Hillary Clinton lost the presidential election in 2016, public trust in election polling ― which systematically overstated her standing in key states ― took a hit as well. Heading into the next election, many voters and pundits are still feeling apprehensive.

“I’m more skeptical of polls now because of who ... is in the White House,” Elthia Parker, a 79-year-old retiree, said at a Las Vegas rally late last month.

“Ignore the polls,” political commentator Ana Navarro exhorted, a sentiment that racked up more than 10,000 retweets. “We’ve all seen them be wrong before.”

Just 37 percent of registered voters said in a survey last year that they had even a good amount of faith in public opinion research. Republicans were most likely to be skeptical. And those responses were from people who were willing to be polled.

Most pollsters would agree with the skeptics that surveys are far from infallible. National polling, as pollsters are happy to remind anyone in earshot suggesting otherwise, wasn’t actually that far off in 2016. Surveys largely predicted a modest popular vote win for Clinton. But it was a historically bad year for polls in states like Wisconsin and Pennsylvania, where surveys almost uniformly underestimated Donald Trump’s chances and left much of the public blindsided by his win.

“The number of times we’ve had to reassure a client that the process still works and the number of skeptical questions I get when giving talks has certainly increased since 2016,” Ashley Koning, the polling director at Rutgers University, noted in a recently published review of midterm polling.

Distrust, she said, has made it harder for pollsters to provide an accurate depiction of public opinion. That and a slew of other challenges, from changing technology to rising costs, have driven pollsters to experiment with how they conduct surveys. Even more, distrust has ushered in a new focus on recalibrating public expectations about what horse-race polling can actually tell us ahead of an election.

“The 2016 surprise clearly stung a lot of us who work in the field,” said Scott Keeter, a senior adviser at Pew Research. “Part of it is that national polling did a good job, but few people remember that. But there were real problems with some of the state polling, and owing to the often limited resources state pollsters have to work with, there’s no guarantee that everything will go better this year or in 2020. Based on my conversations, some pollsters have made efforts to fix problems and a lot of creative work is being done. But off-year elections pose their own set of challenges, especially with respect to estimating likely turnout.”

What Went Wrong In 2016

Six months after Trump’s victory, a task force of pollsters released a report that acted in equal parts as a technical post-mortem and an existential defense of the industry.

“It is ... a mistake to observe errors in an election such as 2016 that featured late movement and a somewhat unusual turnout pattern, and conclude that all polls are broken,” the authors wrote. “Well-designed and rigorously executed surveys are still able to produce valuable, accurate information about the attitudes and experiences of the U.S. public.”

Their research found no evidence of a consistent bias toward one party in recent polling, they noted, nor that a cohort of “shy” Trump voters was deliberately hiding its choices from pollsters.

Instead, there was a perfect storm of more prosaic issues ― some potentially fixable, others less so, and some seemingly innate to the practice of polling itself. In the fixable camp: College graduates, who were more likely to support Hillary Clinton, were overrepresented in many polls.

Voters with higher levels of education have always been more likely to answer surveys, but in past elections, where education was less of a partisan fault line, this proved less of an issue. Since then, a number of university pollsters who didn’t already include education on the list of variables used to weight their results have begun doing so, although others have found the change to be less useful to their situations.
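To see why overrepresenting college graduates can tilt a topline, here is a minimal sketch of weighting a sample by education. Every number in it is invented for illustration: the sample sizes, the support levels and the assumed makeup of the electorate are hypothetical, not figures from any real poll, and real pollsters typically weight on several variables at once.

```python
# Hypothetical poll of 1,000 respondents. All figures are invented.
# education group -> (number of respondents, share backing Candidate A)
sample = {
    "college": (600, 0.55),
    "non_college": (400, 0.40),
}

# Assumed share of each group in the actual electorate (illustrative only).
population_share = {"college": 0.40, "non_college": 0.60}


def unweighted_support(sample):
    """Candidate A's share if every respondent counts equally."""
    total = sum(n for n, _ in sample.values())
    return sum(n * share for n, share in sample.values()) / total


def weighted_support(sample, population_share):
    """Candidate A's share after each group is scaled to its assumed
    share of the electorate."""
    return sum(population_share[g] * share for g, (_, share) in sample.items())


print(f"Unweighted: {unweighted_support(sample):.1%}")  # 49.0%
print(f"Weighted by education: {weighted_support(sample, population_share):.1%}")  # 46.0%
```

In this made-up example, the candidate favored by college graduates loses three points once the sample is adjusted to match the assumed electorate. When education does not track vote choice, the same adjustment moves the result very little, which is one reason it mattered less in earlier elections.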

More intractable: State and district-level races often see relatively little polling, a problem that’s certainly persisted through 2018. At the moment, Real Clear Politics’ aggregation includes just three polls of the North Dakota Senate race taken since October. And although polls differed widely in how they were conducted, the report found little evidence that so-called “gold standard polls,” which use a traditional set of methodologies, were innately better-performing than the rest.

And then there’s the problem that’s plagued the industry since 1948, when pollsters like Gallup found Thomas Dewey winning tidily weeks before the presidential election ― and then declared themselves done for the season, meaning they entirely missed the late movement toward Harry Truman.

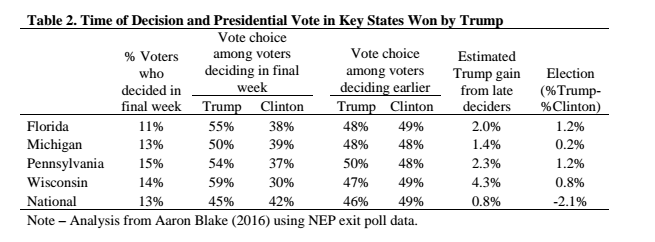

Most pollsters today wouldn’t dream of hanging up their hats in early October. But they remain entirely vulnerable to the prospect of missing a last-minute swing in the electorate. That was a main factor in the 2016 elections, the polling report concluded, based on a review of exit polls that found voters in states like Wisconsin who made up their minds late went for Trump by far greater margins than those who chose earlier. (Again, polling being an inexact science, not everyone agrees on the magnitude or existence of that shift.)

There’s nothing to say that 2018, or future elections, won’t once again feature a race that breaks late toward one side.

“I think the bottom line of what happened was that polling was just wrong enough nationally and at the state level that everyone got the wrong impression about what was going to happen,” SurveyMonkey’s Mark Blumenthal, a former polling editor at HuffPost, said last year. “Hopefully, if it happens again, we will all do a better job warning everyone that it’s possible.”

What’s Changed In 2018

Heading into the 2018 election, there’s been an effort to drive home that uncertainty by exposing the nuts and bolts behind every set of top-line numbers. Starting with 2017’s off-year elections, several outlets took the still-unusual step of releasing multiple estimates for how a race might look, depending on which kinds of voters turn out.

Figuring out who will turn out is always a challenge for pollsters, but particularly so in a year when Democrats are banking on supporters who are enthusiastic about this year’s elections but haven’t been reliable midterm voters in the past. Off-year elections in 2017 seemingly rewarded that gamble, prompting some pollsters to mull lowering the bar for including people without a strong voting history.

“We do not know what the population is until Election Day,” Monmouth pollster Patrick Murray, who has regularly released polls showing results for both high- and low-turnout scenarios, wrote earlier this year. “We are modeling a possibility.”

The online survey firms SurveyMonkey and Ipsos have also released surveys with multiple turnout models; outlets including CBS and The Washington Post have presented readers with multiple estimates based on their polling.

Perhaps the most extreme effort at transparency came from The New York Times’ Upshot, which partnered with Siena College to release dozens of polls as they were being conducted. Although reactions focused mostly on the queasy thrill of watching a candidate’s numbers grow or shrink in real time, the project also went to painful lengths to catalog the often-unspoken caveats that encumber every horse-race survey. Unanswered phone calls appear on district maps as a flood of empty circles, sampling error as a wide smear of color on a line graph of the results.

Their poll of the closely watched Texas Senate race, which gives Sen. Ted Cruz an 8-point lead, is peppered with provisos ― “It’s just one poll, though”; “Even after weighting, our poll does not have as many of some types of people as we would like”; “Even if we got turnout exactly right, the margin of error wouldn’t capture all of the error in a poll”; “Assumptions about who is going to vote may be particularly important in this race.”

Those turnout assumptions lend themselves to estimates ranging from a 15-point lead for Cruz if the electorate looks like 2014, to a 3-point edge for Democratic challenger Beto O’Rourke among only the people who say they’re almost certain to vote.
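The way a single set of interviews can yield such different margins can be sketched in a few lines. This is a toy illustration under stated assumptions, not the Upshot/Siena method: the `Respondent` fields, the two screens and all of the respondent counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Respondent:
    choice: str               # "R" or "D"
    voted_last_midterm: bool  # from a (hypothetical) vote-history record
    says_certain: bool        # self-reported "almost certain to vote"
    weight: float = 1.0


def margin(respondents, include):
    """Republican-minus-Democratic margin, in points, among the
    respondents who pass the `include` screen."""
    kept = [r for r in respondents if include(r)]
    total = sum(r.weight for r in kept)
    if total == 0:
        raise ValueError("screen excluded every respondent")
    r_total = sum(r.weight for r in kept if r.choice == "R")
    d_total = sum(r.weight for r in kept if r.choice == "D")
    return 100 * (r_total - d_total) / total


# Invented sample of 100 respondents.
poll = (
    [Respondent("R", True, True)] * 30
    + [Respondent("D", True, True)] * 20
    + [Respondent("R", True, False)] * 10
    + [Respondent("R", False, False)] * 10
    + [Respondent("D", False, True)] * 15
    + [Respondent("D", False, False)] * 15
)

scenarios = {
    "everyone interviewed": lambda r: True,
    "past midterm voters only": lambda r: r.voted_last_midterm,
    "self-described certain voters only": lambda r: r.says_certain,
}

for name, screen in scenarios.items():
    print(f"{name}: R {margin(poll, screen):+.1f}")
```

The same 100 invented respondents produce a tie, a wide Republican lead or a Democratic edge depending only on who is assumed to vote, which is the kind of spread the turnout scenarios are meant to expose.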

Pollsters are also experimenting with different ways of identifying and reaching voters. Most media and academic pollsters who conduct phone surveys have traditionally relied on a method called random digit dialing ― more or less what it sounds like ― to reach respondents. Many still do.

But voter files, once largely the domain of campaign pollsters, have seen increasing adoption, especially at the state level. Voter files are built off of publicly available records of who’s registered to vote and how often they do so, and supplemented by commercial vendors. Using them provides pollsters with unambiguous data on respondents’ voting history, and allows them to keep track of what kind of people aren’t responding to their polls.

There are potential drawbacks though ― although voter files do include information on people who aren’t registered to vote, that data can be spottier, carrying a risk of missing new voters.

The Upshot/Siena live polling made use of the voter file, and The Washington Post now uses a mix of the two methods for its state surveys. Also among those making the switch to a voter file this cycle was the venerable NBC/Wall Street Journal poll. Bill McInturff, the Republican half of the survey’s bipartisan team of pollsters, described the move as “the most important change we made after 2016,” noting that he and his Democratic counterpart have already seen the “powerful advantages” of using the method to handle political campaigns.

Another of the biggest changes in polling this cycle won’t be entirely felt until election night. For decades, the exit polls have been conducted through a consortium called the National Election Pool, consisting of The Associated Press and the major television networks, and relying heavily on in-person interviews at polling places across the country. This year, the AP and Fox News split from the consortium to launch an alternative called VoteCast, relying on interviews conducted just before the election ― an approach the AP says “reflects how Americans vote today: not only in person, but also increasingly early, absentee and by mail.”

The remaining members of the NEP and their pollster, Edison Research, have announced major changes of their own, including stationing pollsters at early voting centers and changing the way they ask about and incorporate data on voters’ education levels.

The results of the 2016 election, contrary to the most glib critiques, don’t mean that polling is broken. But they come at a time when the industry is already in flux. Response rates to surveys plummeted over the past three decades, as telemarketing-plagued Americans grew increasingly wary of unsolicited phone calls. That decline, which has since leveled off, wasn’t enough to derail polling ― people who still answer the phone, it turns out, are still largely similar enough to the ones who don’t, and election polling doesn’t seem to have grown any less accurate. But that shift, combined with the increasing ubiquity of cellphones, has made traditional polling more expensive than ever at a time when media sponsors and other clients are paring back. And it comes at a time when pollsters are facing both sky-high expectations for accuracy, and sky-high mistrust when they inevitably fail to meet them.

Polls, and the forecasting models built off them, can provide a snapshot of the most likely set of outcomes for Tuesday’s elections. And they do a far better job of it than yard signs, conversations with voters at small-town diners or pundits’ gut feelings. But as 2016 made achingly clear, they can never offer any guarantee that the unlikely won’t prevail.

Igor Bobic contributed reporting from Nevada.