Earlier this month, a 542-page report was released, concluding that top officials of the American Psychological Association, including its ethics director, contorted and altered the association's ethics policies so the psychologists on the Pentagon's payroll could use their expertise to refine and expand methods of torture. The new "ethics light" guidelines concluded that it was appropriate for psychologists to remain involved with "enhanced" interrogations, to make sure they remained "safe, legal, ethical and effective". Kind of like having physicians preside over lynchings to ensure they are done humanely. Groucho Marx once sneered, "Those are my principles. If you don't like them, well...I have others". He could have been describing the APA's position on its own "other" ethical principles.

A spokesperson for the APA fessed up: "The actions, policies and the lack of independence from government influence described in the Hoffman Report represented a failure to live up to our core values. We profoundly regret, and apologize for, the behavior and the consequences that ensued. Our members, our profession and our organization expected, and deserved, better."

How could you? I mean, how could we? How could influential members of the nation's largest association of psychologists make such a disastrous blunder? The first Principle of the Ethical Code of Psychologists is "Do no harm". Period, end of story, that's all she wrote. The principles that follow are just commentary. The profession of psychology is often paired with the word "calling": People are mostly drawn to it because they are fascinated with all things human and seek to relieve others' suffering and help better understand the problems we face as a human community.

As might be expected, the military psychologists first got involved to help, not harm. They were called upon to improve the training of personnel so they could better cope with and survive torture, if captured. How psychologists came to begin "reverse engineering" their scientific knowledge about surviving torture to enhance the practice of torturing others is astonishing. How to ensure that it never happens again is critical.

Torture began losing its cuddly image in mid-2004. The images of abused prisoners at the Abu Ghraib prison created a public relations problem for the CIA's "enhanced interrogation program", that kinder, gentler way of referring to activities such as sleep deprivation, waterboarding, and "stress positions". In case the last decade of hearing these euphemisms has addled your mind, let me remind you: That stuff is torture. It is profoundly damaging and causes lifelong psychological trauma. We should not be doing it. Nobody should be doing it.

Have you no sense of decency, sir?

A senior APA official was consulted to help resolve this crisis. The APA invited a carefully selected group of psychologists and behavioral experts to discuss expanding psychologists' role in the interrogation program. A damning set of emails later revealed just how well-coordinated the APA's efforts were to lift the troublesome ethical guidelines that prevented psychologists from scientifically improving the state-sanctioned practice of torture.

There are myriad ways that smart people make dumb mistakes. Maybe the success of books such as Sway, Predictably Irrational, Blink, etc., is because they promise, like the crowd-sourced GPS app "Waze," a way to avoid the snarls and pitfalls the uninformed masses are destined to suffer. But shouldn't psychologists know more about the blind spots of human reasoning? Time's up... the answer is "yes". Yet allegedly smart people, rising to positions of influence in the country's largest psychological organization, made some really bad decisions. Why? Some fundamental psychological findings, ones you may recall from your psych 101, might shed some light.

1. Cognitive dissonance: Stanford University psychologist Leon Festinger first used the term to describe the unpleasant experience of holding two beliefs or attitudes that contradict each other. Holding both creates a feeling of absurdity, like wanting to live a long life while continuing to smoke. To reduce the distress, the smoker either quits or convinces himself that smoking is not that bad ("I'm cutting down, at least"). Perhaps the APA officials who stacked the ethical deck on the side of torture focused on the second principle of the Ethical Code of Psychologists, the one that states: "Psychologists use their expertise in, and understanding of, human behavior to aid in the prevention of harm." Dick Cheney quelled any moral pangs thusly: "I have no problem as long as we achieve our objective. And our objective is to get the guys who did 9/11 and it is to avoid another attack against the United States." Dick Cheney is a wizard at resolving cognitive dissonance. Maybe the APA officials followed his example.

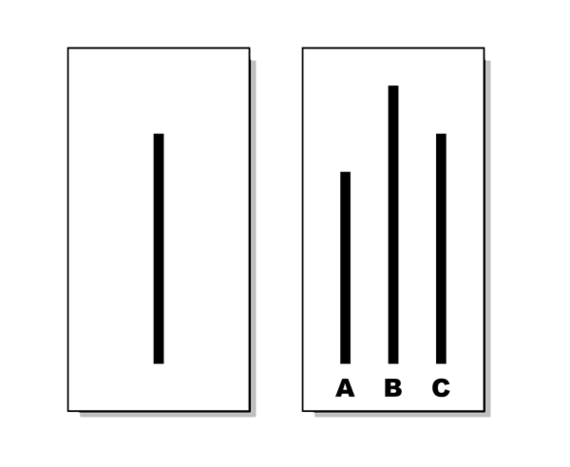

2. Conformity: Or "I am ok with being unique, I just don't want to be different". Pioneer social psychologist Solomon Asch demonstrated the power of the opinion of a group to induce conformity, even if that means denying one's own sensibilities. ("Yes, those lines are the same size," you say, even though they're obviously not.) The psychologists involved were certainly aware of abundant evidence that torture causes extreme stress and pain that profoundly affects memory and executive functions. It's not an effective way to get at memories. Rather than triggering a simple pattern of brain activation that leads to the accurately stored memory, torture stimulates memories and associated images chaotically, without regard to their truthfulness. A person being tortured also quickly learns that "when I'm talking, I'm not being waterboarded." A person being waterboarded will say anything, without even knowing if it's true or not. Although psychologists are perfectly aware of this, they may have accepted the judgment of a room full of intelligence officials who accepted as axiomatic that torture was a good way to get information. The psychologists conformed despite their knowledge and sensibilities.

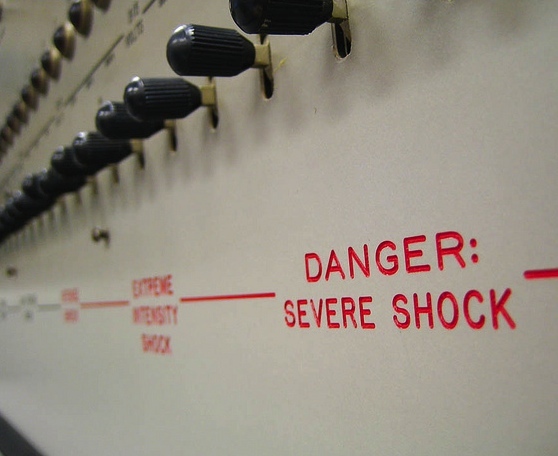

3. Obedience to Authority. The experiments are famous, and appalling. Yale psychologist Stanley Milgram found that ordinary people can be coerced to deliver what they believe are painful shocks to others in a "learning experiment". The experiments began in 1961, while Adolf Eichmann, the banal, evil Holocaust bean-counter, was on trial, and the results were published in 1963. Milgram showed it was unlikely that only Germans follow orders. The symbol of "authority" in Milgram's research was a researcher in a lab coat. The "authority" in Abu Ghraib was the United States military. "This is a matter of national security" is often deployed to mean "you must comply". And many people do. But not everyone. In Milgram's studies, while some versions of the experiment produced compliance rates of close to 100 percent, with participants administering (fake) shocks to people who were "begging" them to stop, others were closer to zero. In the real world, some people vigorously resist. That a band of seasoned psychologists did not is particularly disturbing.

4. Groupthink in Camelot. The disastrous Bay of Pigs invasion (an early CIA effort conducted in our name) is the example usually cited to illustrate Army veteran and University of California psychologist Irving Janis' concept of "groupthink": faulty decisions made because group pressures lead to a deterioration of mental efficiency, reality testing and moral judgment. Janis held that groups so affected tend to ignore alternatives and take irrational actions that dehumanize other groups. The APA officials must have had some discussion about whether their involvement was right and just. Somehow, the voices for assisting torture prevailed.

5. Status Seeking: We get to be the cool kids. Prior to World War II, clinical psychologists mainly assessed people and were rarely given the opportunity to conduct psychotherapy, a professional activity that was almost exclusively carried out by psychiatrists. However, as veterans with severe trauma returned from the war, they quickly overwhelmed the nation's psychiatric resources and clinical psychologists stepped in. Psychologists' invitation to conduct psychotherapy was supposed to be temporary. But we were pretty good at it, often better than the psychiatrists. Soon it became a core part of clinical psychology's identity. Psychiatry didn't want to cede any more turf. However, the official psychiatric organizations were reluctant to throw their lot in with the purveyors of torture (as physicians, they have the Hippocratic oath in mind: "First, do no harm..."). Perhaps some APA members could not resist the chance to enhance their status and raise the heroic stock of the tribe of psychologists ("Gee-whiz, I'm a spook!").

Psychologists are human, and as humans, we're just as vulnerable to the seemingly endless opportunities for irrationality, self-deception, and mistakes as anyone else. Orthopedists occasionally break their own ankles and dentists get cavities. I have made plenty of mistakes in my own practice of therapy.

Psychotherapy, orthopedics, and dentistry can also create some pain to achieve helpful ends. But that is a world away from doing harm.

There are literally hundreds of empirical studies and well-thought-out concepts that explain why people do dumb things. But none of this excuses the despicable choices made by psychologists within the APA. They knowingly caused harm to other human beings. The warning flags meant to save us from ourselves are right there in the basic canon of psychological research. Their profound lapse of thoughtful consideration is beyond unethical. It is unconscionable.