The mystery surrounding Facebook's "Trending" topics has been obliterated. Those headlines you see when scrolling through your Facebook News Feed or searching for content on the mobile app are constructed by human beings and subject to their biases, according to a duo of incendiary reports written by Michael Nunez and published to Gizmodo.

"They call it 'Trending' news, and they advertise it as something that is sorted by an algorithm," Nunez told The Huffington Post in an interview Monday. "And that's just not the case."

Nunez's latest story, published Monday morning, alleges that some Facebook employees tasked with populating the Trending stories menu have avoided conservative news no matter how popular those stories were on the social network.

"In other words, Facebook’s news section operates like a traditional newsroom, reflecting the biases of its workers and the institutional imperatives of the corporation," Nunez describes in the piece, adding that there's no evidence that management forced workers to make decisions about what types of news to avoid. It was all up to the whims of whoever was working at the time.

A former Facebook news curator reached by HuffPost declined to comment due to a nondisclosure agreement mandated by the social network. And Facebook disputed the claims.

"There are rigorous guidelines in place for the review team to ensure consistency and neutrality," a Facebook spokeswoman told HuffPost. "These guidelines do not permit the suppression of political perspectives. Nor do they permit the prioritization of one viewpoint over another or one news outlet over another."

Facebook Vice President of Search Tom Stocky said that the company investigated the claims and "found no evidence that the anonymous allegations are true."

The Fine Print

If you're not familiar, Trending stories appear on the right-hand side of your News Feed when you visit Facebook.com. (If you're a mobile app user, they appear at the bottom of the screen when you tap the search bar.) Conventional wisdom, notably relayed in a Recode article from last August, suggests that these "Trending" stories are merely the most popular ones being shared at any given moment.

"Facebook shows you things in your Trending line-up the same way it shows you things in your News Feed: Algorithms," Recode's Kurt Wagner wrote. "It takes into account a few personal things, like where you live and what Pages you follow. But primarily it looks for two broader signals: Topics that are being mentioned a lot and topics that receive a dramatic spike in mentions."

In Wagner's defense, that's still true to an extent. The Trending menu is driven in part by algorithms that identify what people are talking about on Facebook. The team of "news curators" employed by the social network looks at those topics and then picks which ones are worth headlining and ultimately distributing.

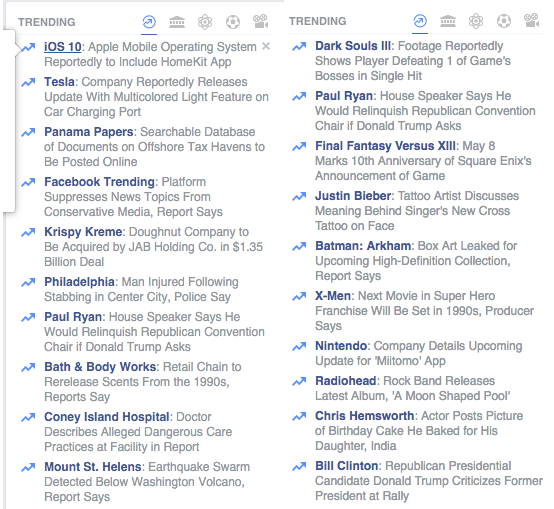

Algorithms apparently determine which trending topics you see from a pool of possibilities. If you looked at your Trending menu and your friend's at the same time, you would probably find some major discrepancies.

Facebook's algorithms are smart. They know I'm interested in video games, and they know my colleague Emily is not; therefore, our Trending menus look different. The important thing to understand is that humans select and write those little blurbs to begin with, even if you see different stories than your friends.

The problem isn't with the algorithm -- it's that the human curators working for Facebook can also manually "inject" topics into the Trending module. In other words, they behave like news editors editing a front page. This technicality means Facebook isn't just a social network; it's a media enterprise like CNN, The New York Times, HuffPost, Fox News and so on.

Perhaps as a cheeky response to (or defense against) Gizmodo's stories, "Facebook Trending" became a top story in the module on Monday afternoon -- you can see it in the screenshots above.

Why This Is A Problem

News organizations are biased. Sometimes bias is obvious or proudly displayed. HuffPost, for example, publishes a disclaimer at the bottom of articles about Donald Trump explaining that he is a racist, lying xenophobe. Often, and perhaps most relevant to the issue with Facebook, "bias" simply comes down to one story getting published instead of another one.

That's one thing when you pick up a newspaper or visit a website with the sole purpose of reading a few articles and moving on to the next thing. It's altogether different when you're talking about an online ecosystem serving up content to an audience that's larger than China's population.

Facebook has morphed into a media goliath that shapes opinions not just according to computer algorithms but also according to a team of gatekeepers making choices about which information is distributed, apparently without many guidelines or standards -- two things that any respectable news outlet adheres to.

Facebook CEO Mark Zuckerberg has stated, "We want to keep the Internet open." He claims to support net neutrality. But we've seen several times how Facebook is decidedly not neutral, especially when it comes to independent media organizations. In the latest example, Nunez's Gizmodo articles allege that certain news outlets are actively suppressed by the company's "news curators."

But the bad blood runs deeper. Zuckerberg's social network has evolved in recent months into a very different platform than the one you originally signed up for. As it combats a decline in "personal sharing" -- photos of your family and emotional status updates, for example -- Facebook has aggressively invested in new products designed to keep you locked into its home base rather than wandering off to other apps or websites.

Because Facebook is so powerful, with a total user base of 1.65 billion people, those tweaks have deflated traffic to news websites and panicked online publishers. The social network, likely the most important single portal into the Internet today, wants to be in control of every interaction on its platform; that "trending" stories are often injected by human staffers rather than an automated computer program is merely the latest example of this fact.

Facebook, as a portal to information, is by definition limiting -- not "open." It prioritizes certain sources and stories and limits access to others. Because Facebook is so massive, it would be impossible to expect perfection, but what about transparency? The company is a media monster now -- perhaps, as a start, its trending stories should be labeled "editor's picks" to help people understand what they're really dealing with. It's certainly not all the product of magical computer programs.

"It's okay that Facebook editorializes, and it's completely fine that they choose to focus on liberal news or mainstream media sites, but then they can't go and say they're using an algorithm to do this," Nunez said. "They have an editorial board, just like the New York Times or The Huffington Post or Gizmodo."